Sparks Financial Recovery

The outage or lockout is usually the last symptom to appear, not the first. Surprise spending, delayed upgrades, and aging infrastructure create weak points that can disrupt managed cybersecurity services and put budget control, resilience, and uptime at risk. Reducing that risk starts with planning upgrades deliberately and aligning IT decisions to business risk.

This case study reflects real breakdown patterns documented across 300+ regional IT incidents. Names and identifying details have been modified for confidentiality, while technical and financial data remain accurate to the original events.

Why Financial Offices in Sparks End Up With a Network Crash

A network crash in a financial office is rarely caused by one bad day. In most cases, the real issue is that IT decisions have been handled as isolated purchases instead of part of a financial roadmap. Firewalls stay in place past support dates, switches run without redundancy, line-of-business software grows around old server assumptions, and cybersecurity tools are added without a clear operating plan. By the time users lose access, the business has already been carrying technical debt for months or years.

We see this pattern across Sparks and greater Northern Nevada when leadership is forced into reactive spending. Without a vCIO function, technology becomes a surprise expense rather than a budgeted part of how the business operates. That is where managed cybersecurity services in Northern Nevada become operationally important. They are not just about blocking threats; they create visibility into lifecycle risk, unsupported systems, backup gaps, and the hidden dependencies that turn a routine hardware issue into a full office outage. In situations like Jerry’s, the crash is simply the first event leadership cannot ignore.

- Aging network infrastructure: Older switches, firewalls, and wireless controllers often remain in production after warranty or firmware support ends, increasing failure rates and limiting security updates.

- Unplanned capital spending: When replacements are delayed until failure, financial offices lose the ability to schedule downtime, compare options, and control implementation cost.

- Security stack drift: EDR, MFA, email filtering, and backup tools may exist, but without coordinated oversight they do not always align with the actual network design or business workflow.

- Operational concentration risk: A single closet, single ISP handoff, or single authentication dependency can interrupt billing, document access, and advisor communication at once.
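The concentration risk in the last item can be made visible with a simple dependency map. The sketch below is illustrative only: the service and component names are hypothetical, and the function simply lists components that more than one business function relies on.

```python
from collections import defaultdict


def shared_dependencies(service_deps, threshold=2):
    """Return components that at least `threshold` services depend on.

    `service_deps` maps a business function to the components it needs.
    All names used with this function are hypothetical examples.
    """
    users = defaultdict(list)
    for service, components in service_deps.items():
        for component in components:
            users[component].append(service)
    # Keep only components shared widely enough to be a concentration risk
    return {c: sorted(s) for c, s in users.items() if len(s) >= threshold}


# Hypothetical office: three functions and the gear each one needs
office = {
    "billing": ["isp-1", "core-switch"],
    "documents": ["core-switch", "file-server"],
    "phones": ["isp-1", "core-switch"],
}
risks = shared_dependencies(office)
```

In this made-up office, the core switch and the single ISP handoff surface as shared dependencies, which is the kind of finding that should feed a redundancy or replacement decision.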

How to Remediate the Failure Without Repeating It Next Quarter

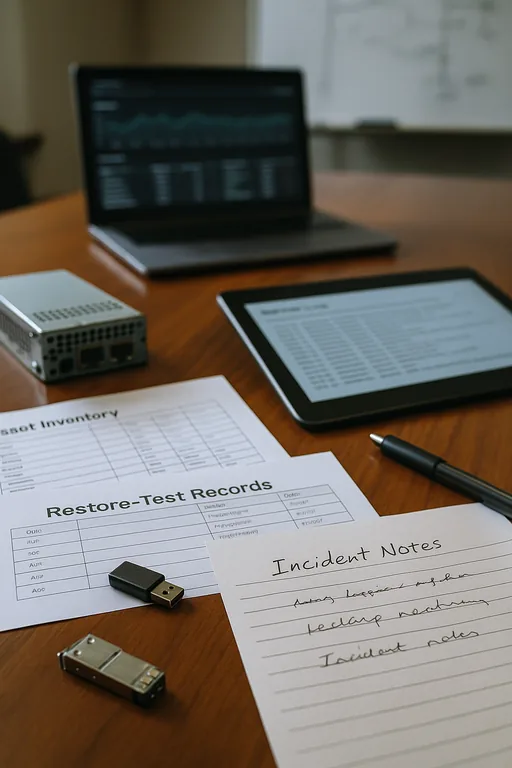

The immediate fix is to restore service, validate data integrity, and confirm that security controls remained intact during the outage. The more important step is to convert the event into a planning model. That means documenting asset age, support status, business criticality, and replacement timing, then tying those findings to a budget calendar. Financial offices that complete structured risk assessments and security readiness reviews usually identify the same weak points early: unsupported edge devices, flat internal networks, untested backups, and no clear recovery sequence for line-of-business systems.
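As a rough illustration of that planning model, the sketch below ranks assets by age, support status, and business criticality. The `Asset` fields, the 1 to 3 criticality scale, and the scoring weights are all assumptions made for the example, not a standard formula.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Asset:
    name: str
    installed: date
    support_ends: date
    criticality: int  # hypothetical scale: 1 (low) to 3 (high)


def risk_score(asset: Asset, today: date) -> int:
    """Illustrative score: a higher value means replace sooner."""
    age_years = (today - asset.installed).days // 365
    unsupported = today >= asset.support_ends
    # Assumed weighting: unsupported gear gets a fixed 5-point penalty
    return asset.criticality * (age_years + (5 if unsupported else 0))


def replacement_order(assets, today):
    """Sort assets so the highest-risk items come first."""
    return sorted(assets, key=lambda a: risk_score(a, today), reverse=True)


# Hypothetical inventory
today = date(2025, 1, 1)
inventory = [
    Asset("core-switch", date(2021, 1, 1), date(2028, 1, 1), 3),
    Asset("edge-firewall", date(2017, 1, 1), date(2023, 1, 1), 3),
    Asset("guest-ap", date(2016, 1, 1), date(2022, 1, 1), 1),
]
ordered = [a.name for a in replacement_order(inventory, today)]
```

The ordered list can then be mapped onto budget periods, so the highest-scoring devices land in the earliest maintenance windows.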

Practical remediation should also follow established guidance from sources such as CISA’s Cybersecurity Performance Goals. For a Sparks financial office, that means reducing single points of failure, validating restore times, hardening identity controls, and assigning ownership for lifecycle decisions. The goal is not to overspend. The goal is to stop treating infrastructure replacement as an emergency purchase after operations have already been disrupted.

- Lifecycle planning: Build a 12- to 36-month replacement schedule for firewalls, switches, wireless gear, and backup appliances so spending is predictable.

- Backup validation: Test file, server, and cloud restores on a schedule and confirm recovery time objectives against actual office workflow.

- Network segmentation: Use VLAN separation for staff devices, guest access, printers, and sensitive systems to reduce blast radius during failures or security events.

- MFA and identity hardening: Protect remote access, admin accounts, and cloud applications with enforced MFA, conditional access, and privileged account review.

- Alerting and documentation: Maintain current diagrams, escalation paths, and device monitoring so response is measured rather than improvised.
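For the backup validation item, comparing measured restore times against recovery time objectives can be as simple as the sketch below. The system names and minute values are hypothetical; real targets would come from the office's own workflow requirements.

```python
from dataclasses import dataclass


@dataclass
class RestoreTest:
    system: str
    rto_minutes: int       # target agreed with the business (hypothetical)
    measured_minutes: int  # duration observed in the latest restore test


def rto_gaps(tests):
    """Return (system, overrun_minutes) for each test that missed its RTO."""
    return [
        (t.system, t.measured_minutes - t.rto_minutes)
        for t in tests
        if t.measured_minutes > t.rto_minutes
    ]


# Hypothetical results from one round of restore testing
results = [
    RestoreTest("file-server", rto_minutes=60, measured_minutes=105),
    RestoreTest("cloud-mailbox", rto_minutes=30, measured_minutes=20),
]
gaps = rto_gaps(results)
```

Any system that appears in the gap list is a candidate for a faster restore method or a revised objective.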

Field Evidence: From Surprise Expense to Planned Stability

In one Northern Nevada financial office corridor, the environment started with recurring switch instability, inconsistent wireless coverage, and no documented replacement schedule for core infrastructure. The business had already invested in security tools, but there was no governance process to decide what should be replaced first, what could wait, and what represented a compliance or uptime risk. After the outage review, leadership moved the environment into a documented planning cycle with asset age tracking, backup testing, and policy alignment through compliance-focused IT management.

The before-and-after difference was operational, not cosmetic. Instead of waiting for another failure during a busy reporting period or client review week, the office replaced end-of-life network hardware during a scheduled maintenance window, updated recovery documentation, and aligned security controls to actual business processes. That matters in Northern Nevada, where multi-site coordination between Reno, Sparks, and Carson-area staff can magnify even a short outage if shared systems are not mapped correctly.

- Result: Unplanned network downtime dropped from repeated service interruptions to zero critical outages over the following two quarters, while recovery documentation reduced incident response time by more than 60 percent.

Financial Roadmap Controls for Network Stability

Scott Morris is an IT and cybersecurity professional with 16 years of hands-on experience in managed technology services. He specializes in Managed Cybersecurity Services and has spent his career building practical recovery, security, and operational continuity processes for businesses across Sparks, Reno, and Northern Nevada.

Local Support in Sparks, Reno, and Northern Nevada

Reno Computer Services supports financial and professional offices throughout Sparks, Reno, and surrounding Northern Nevada business corridors. For locations near Damonte Ranch, travel and dispatch planning matter because even a short delay can extend downtime when a failed network device affects phones, file access, and cloud authentication at the same time.

Planning Prevents the Next Network Failure

A financial office network crash in Sparks is usually a budgeting and governance problem before it becomes a hardware problem. When infrastructure ages without a replacement plan, security tools drift away from business needs, and recovery procedures are not tested, the business is left reacting under pressure. That is expensive, disruptive, and avoidable.

The practical takeaway is straightforward: treat IT as an operating roadmap, not a series of emergency purchases. When leadership aligns refresh cycles, security controls, and recovery expectations to actual business risk, uptime improves and spending becomes far more predictable.