Reno/Sparks Network Crash

When a business is dealing with a network crash, the failure usually began well before the outage. Poor safeguards, inconsistent records handling, and slow incident response weaken network server and cloud management over time and leave financial offices in the Truckee Meadows exposed when pressure hits. Addressing the problem means documenting safeguards, tightening response steps, and protecting sensitive data.

This case study reflects real breakdown patterns documented across 300+ regional IT incidents. Names and identifying details have been modified for confidentiality, while technical and financial data remain accurate to the original events.

Why a Network Crash Becomes a Legal Liability Problem

A network crash in a financial office is rarely just a hardware event. In most cases, we find an earlier pattern of weak change control, incomplete backup verification, poor records handling, and no clear incident ownership. Once the system fails, the business is not only dealing with downtime. It is also dealing with whether client files were exposed, whether records were altered, and whether the firm can prove it acted reasonably. In the Truckee Meadows, that matters because regulated financial operations often depend on fast access to client documents, archived communications, and audit trails.

The legal liability issue starts when leadership cannot show what protections were in place before the outage. If client data is lost or inaccessible, claiming the office did not know the risk is a weak position anywhere, and it is not a practical defense in a Reno dispute. That is why stable network server and cloud management in Northern Nevada has to include documented safeguards, access controls, backup integrity checks, and a tested response path. In situations like the one Austin faced, the visible crash is only the final symptom of a longer operational failure.

- Technical factor: Flat network design, aging switching equipment, inconsistent permissions, and unverified backups often combine to turn a single fault into a broader interruption affecting file access, cloud sync, and records retention.

- Operational factor: Financial staff lose billable time quickly when portfolio files, scanned documents, and shared templates are unavailable, especially during month-end reporting or client review periods.

- Liability factor: If the office cannot demonstrate reasonable safeguards, incident logs, and recovery steps, the outage can expand into a records, privacy, or negligence issue rather than remaining a simple IT event.

Practical Remediation for Financial Offices Under Strain

The fix is not just replacing a failed device. The right remediation starts with isolating the failure domain, validating the condition of backups, reviewing authentication logs, and confirming whether any client data was corrupted or exposed. From there, we typically rebuild around documented recovery priorities: line-of-business applications first, file access second, cloud synchronization third, and reporting validation after systems are stable. For financial offices, that sequence reduces confusion and preserves evidence if a compliance review follows.
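The recovery sequence above can be written down as a simple, repeatable ordering so it does not depend on whoever is on call. The sketch below is a minimal Python illustration, assuming hypothetical tier names (`line_of_business`, `file_access`, `cloud_sync`, `reporting_validation`) and a `RecoveryTask` record invented for this example; it is not any specific tool's API.

```python
from dataclasses import dataclass

# Hypothetical tiers mirroring the documented sequence: lower number = restore earlier.
PRIORITY = {
    "line_of_business": 1,
    "file_access": 2,
    "cloud_sync": 3,
    "reporting_validation": 4,
}


@dataclass
class RecoveryTask:
    name: str
    tier: str               # one of the PRIORITY keys
    verified: bool = False  # set once the restored system is confirmed healthy


def recovery_order(tasks):
    """Sort tasks by documented tier, then by name so the order is repeatable."""
    return sorted(tasks, key=lambda t: (PRIORITY[t.tier], t.name))


def next_task(tasks):
    """Return the first unverified task in priority order, or None when done.

    Reporting validation is only reached once every earlier tier is verified,
    so it happens after systems are stable.
    """
    for task in recovery_order(tasks):
        if not task.verified:
            return task
    return None


if __name__ == "__main__":
    plan = [
        RecoveryTask("month-end report check", "reporting_validation"),
        RecoveryTask("cloud file sync", "cloud_sync"),
        RecoveryTask("client file share", "file_access"),
        RecoveryTask("portfolio management app", "line_of_business"),
    ]
    for step, task in enumerate(recovery_order(plan), start=1):
        print(step, task.name)
```

Keeping the order in data rather than in someone's memory also gives the office a record of what was restored and verified, which supports the evidence trail a compliance review may ask for.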

Longer term, businesses usually need structured oversight such as managed IT support plans for growing Reno businesses so patching, alerting, backup testing, vendor coordination, and response ownership are not left to chance. Controls should include MFA hardening, segmented VLANs for servers and workstations, immutable or isolated backups, and quarterly recovery testing aligned with guidance from CISA. Where sensitive financial records are involved, endpoint detection and response should also be part of the baseline, not an afterthought.
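One way to keep that baseline from drifting is to check it against a written inventory on a schedule. The following is a minimal sketch, assuming a hypothetical `OfficeControls` inventory record and treating "quarterly" as at most 92 days between recovery tests; the field names and threshold are illustrative, not a compliance standard or a CISA requirement.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional

# Assumption: "quarterly" is treated as at most 92 days between recovery tests.
QUARTER = timedelta(days=92)


@dataclass
class OfficeControls:
    # Hypothetical inventory fields; fill them in from the office's own documentation.
    mfa_enforced: bool
    servers_and_workstations_segmented: bool
    backups_immutable_or_isolated: bool
    edr_deployed: bool
    last_recovery_test: Optional[date]  # None if a restore has never been tested


def control_gaps(c: OfficeControls, today: date) -> List[str]:
    """Return the baseline controls that are missing or overdue, in a fixed order."""
    gaps = []
    if not c.mfa_enforced:
        gaps.append("MFA not enforced")
    if not c.servers_and_workstations_segmented:
        gaps.append("servers and workstations not segmented")
    if not c.backups_immutable_or_isolated:
        gaps.append("backups not immutable or isolated")
    if not c.edr_deployed:
        gaps.append("EDR not deployed")
    if c.last_recovery_test is None or today - c.last_recovery_test > QUARTER:
        gaps.append("no recovery test within the last quarter")
    return gaps


if __name__ == "__main__":
    office = OfficeControls(True, True, False, True, date(2024, 1, 10))
    print(control_gaps(office, date(2024, 6, 1)))
```

An empty list means every baseline item in the inventory is present and the last recovery test is recent; anything else is a dated, written gap that leadership can assign and track.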

- Control step: Separate servers, workstations, and guest or unmanaged devices with VLAN segmentation and access policy enforcement so one fault or compromise does not spread across the entire office.

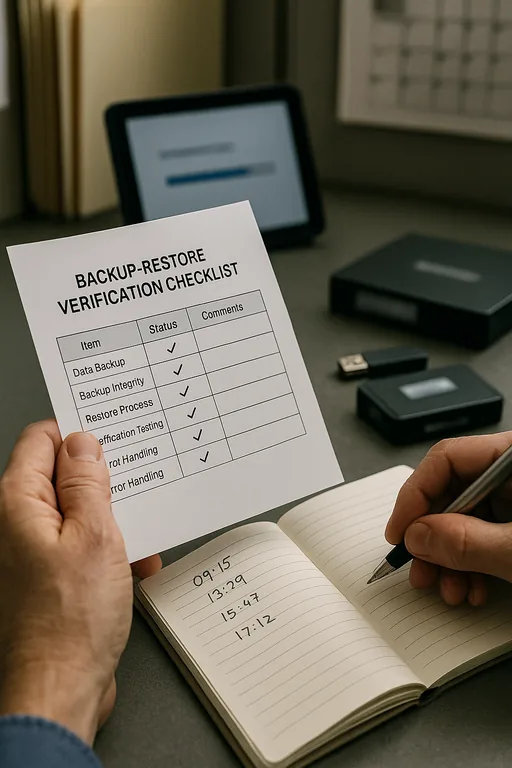

- Control step: Validate backups through actual restore testing, not dashboard status alone, and document recovery time expectations for client records, accounting systems, and shared file repositories.

- Control step: Improve alerting and escalation so internet instability, switch errors, storage warnings, and failed cloud sync events are reviewed before they become a full outage.
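The segmentation control step can be expressed as a default-deny policy between segments. This Python sketch uses the standard `ipaddress` module; the subnets, segment names, and allowed flows are hypothetical examples, and in practice enforcement lives in the switch and firewall configuration rather than in a script. A check like this is mainly useful for auditing whether the documented policy matches intent.

```python
import ipaddress
from typing import Optional

# Hypothetical addressing plan: one subnet per VLAN segment.
SEGMENTS = {
    "servers": ipaddress.ip_network("10.10.10.0/24"),
    "workstations": ipaddress.ip_network("10.10.20.0/24"),
    "guest": ipaddress.ip_network("10.10.99.0/24"),
}

# Assumed policy: only these (source, destination) segment pairs may start connections.
# Everything else is denied, so guest or unmanaged devices cannot reach servers and
# workstations cannot reach each other. Return traffic is assumed to be handled by a
# stateful firewall and is not modeled here.
ALLOWED_FLOWS = {
    ("workstations", "servers"),
    ("servers", "servers"),
}


def segment_of(ip: str) -> Optional[str]:
    """Map an address to its segment name, or None if it is not in the plan."""
    addr = ipaddress.ip_address(ip)
    for name, net in SEGMENTS.items():
        if addr in net:
            return name
    return None  # unknown address: treat as unmanaged


def flow_allowed(src_ip: str, dst_ip: str) -> bool:
    """Default-deny check: unknown addresses and unlisted segment pairs are blocked."""
    src, dst = segment_of(src_ip), segment_of(dst_ip)
    if src is None or dst is None:
        return False
    return (src, dst) in ALLOWED_FLOWS
```

The default-deny shape is the design choice that matters: a new or unrecognized device starts with no access, which is what keeps one fault or compromise from spreading across the office.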
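Restore testing means restoring real files and checking them, not reading a backup dashboard. A minimal sketch, assuming the restore is written to a separate scratch directory and that the source files have not changed since the backup ran (otherwise compare against a hash manifest recorded at backup time): each file is hashed with SHA-256 via Python's `hashlib` and compared with its restored copy. The `within_rto` helper and any target passed to it are illustrative assumptions.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in 64 KiB chunks so large files do not need to fit in memory."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            h.update(chunk)
    return h.hexdigest()


def verify_restore(source_dir: Path, restored_dir: Path) -> dict:
    """Compare every file under source_dir with its counterpart under restored_dir."""
    missing, mismatched = [], []
    for src in sorted(p for p in source_dir.rglob("*") if p.is_file()):
        rel = src.relative_to(source_dir)
        restored = restored_dir / rel
        if not restored.is_file():
            missing.append(rel.as_posix())
        elif sha256_of(src) != sha256_of(restored):
            mismatched.append(rel.as_posix())
    return {"missing": missing, "mismatched": mismatched}


def within_rto(restore_minutes: float, target_minutes: float) -> bool:
    """Check a measured restore time against a documented recovery time target."""
    return restore_minutes <= target_minutes
```

For example, `within_rto(70, 75)` returns `True` for a 70-minute restore measured against a 75-minute target. Recording both results after each test gives the office written evidence that backups are restorable and how long a restore actually takes.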
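The alerting step amounts to escalating repeated warning signs to a named owner before they add up to an outage. The sketch below assumes hypothetical event category names and escalation thresholds, and expects the caller to pass in only events from the current review window; real monitoring platforms have their own rule syntax, which this does not model.

```python
from collections import Counter
from dataclasses import dataclass

# Assumed thresholds: how many occurrences of the same event on the same device
# within the review window trigger escalation. Unlisted categories escalate at 1.
ESCALATE_AFTER = {
    "internet_instability": 3,
    "switch_error": 1,
    "storage_warning": 1,
    "cloud_sync_failure": 2,
}


@dataclass
class Event:
    category: str  # hypothetical category name, e.g. "switch_error"
    device: str    # device or service that raised the event


def events_to_escalate(events):
    """Return sorted (category, device) pairs that met their escalation threshold.

    `events` is assumed to contain only events from the current review window.
    """
    counts = Counter((e.category, e.device) for e in events)
    return sorted(
        key for key, n in counts.items() if n >= ESCALATE_AFTER.get(key[0], 1)
    )
```

Counting per device keeps a single flaky uplink from being lost among unrelated noise, and the default threshold of 1 means an unrecognized event type is reviewed rather than silently ignored.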

Field Evidence: Restoring Stability Before the Next Reporting Deadline

We have seen this pattern in offices across Reno, Sparks, and Carson City where a single network fault exposed years of informal IT decisions. In one case, a financial team was operating with mixed on-premises storage, cloud file sync, and no tested recovery runbook. Before remediation, a switch failure and a permissions issue left staff unable to access current client folders, and management could not confirm whether the latest records were complete. After segmentation, backup validation, and tighter endpoint oversight supported by cybersecurity services in Washoe County, the office had a documented recovery sequence and clear evidence of which systems were affected.

- Result: Recovery time for core file access dropped from most of a business day to under 75 minutes, backup restore confidence improved through monthly testing, and month-end processing resumed without repeated data integrity questions.

Reference Table: Common Failure Points in Financial Office Networks

Scott Morris is an IT and cybersecurity professional with 16 years of hands-on experience in managed technology services. He specializes in network server and cloud management and has spent his career building practical recovery, security, and operational continuity processes for businesses across the Truckee Meadows and Northern Nevada.

Local Support in The Truckee Meadows

Financial offices across Reno, Sparks, and nearby business corridors often need support that accounts for both remote response and on-site realities. From our Ryland Street office, the Saddlehorn area is typically about 22 minutes away under normal conditions, which is why documented remote access, monitoring, and recovery procedures matter before a failure occurs. For firms handling sensitive client records, local response planning is part of risk control, not just convenience.

What Financial Offices Should Take Away

A network crash in a Truckee Meadows financial office is usually the result of accumulated control failures, not bad luck. Weak documentation, inconsistent records handling, untested backups, and unclear response ownership create the conditions for both downtime and legal exposure. Once client files, billing records, or archived communications are affected, the business has to answer operational and liability questions at the same time.

The practical response is straightforward: document safeguards, validate recovery, tighten access control, and make sure the office can prove what happened and how it was contained. That approach reduces downtime, supports compliance, and gives leadership a defensible position if the incident is ever reviewed closely.