Reno/Sparks Network Crash

The outage or lockout is usually the last symptom to appear, not the first. Slow devices, ticket backlogs, and repeated workarounds create weak points that strain the help desk and put productivity, response times, and team focus at risk. Reducing that risk starts with stabilizing daily support, eliminating repeat issues, and standardizing how IT is handled.

This case study reflects real breakdown patterns documented across 300+ regional IT incidents. Names and identifying details have been modified for confidentiality, while technical and financial data remain accurate to the original events.

Why Small Support Problems Turn Into a Network Crash

In most Sparks financial offices, a network crash is not caused by one dramatic event. It usually develops from repeated low-grade issues that never get fully resolved. Slow logins, unstable mapped drives, aging switches, overloaded wireless access points, and inconsistent workstation patching all create drag. That is the operational drain: small daily glitches acting like a tax on every employee until the environment finally fails under normal business load.

We typically find that firms dealing with recurring friction need tighter process discipline as much as technical cleanup. When ticket backlogs grow, staff start creating workarounds, storing files locally, reusing credentials, or delaying updates because they cannot afford more interruption. In a regulated office handling financial records, that pattern increases both downtime risk and audit exposure. This is where structured IT support and help desk in Northern Nevada becomes important, because the goal is not just to close tickets but to remove the repeat causes behind them. In Herbert’s case, the visible outage was simply the point where accumulated instability finally interrupted normal operations.

- Technical factor: A mix of unresolved endpoint issues, inconsistent network hardware performance, and delayed support response can overload shared resources and trigger a broader office-wide failure.

- Operational detail: In financial offices, even a short interruption affects client communication, document access, approvals, and same-day billing workflows.

- Local context: Across Sparks and Reno, we often see multi-suite office environments where older cabling, piecemeal equipment upgrades, and provider handoff issues complicate troubleshooting.

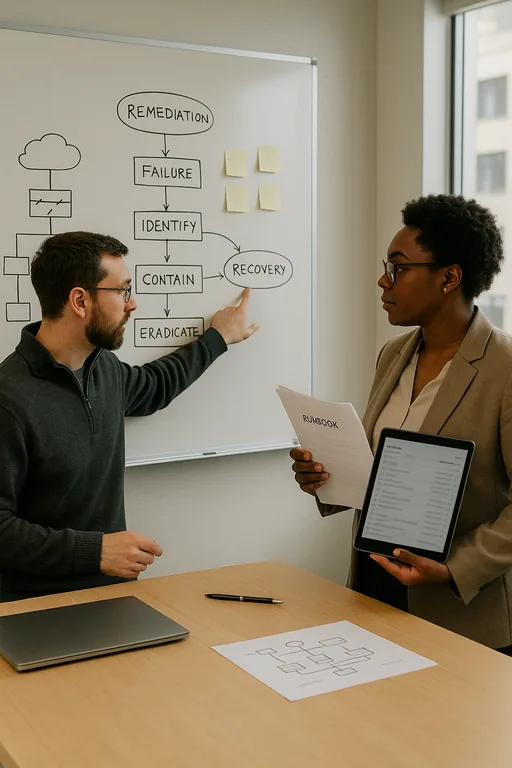

Practical Remediation That Reduces Repeat Failures

The fix is rarely a single hardware replacement. A stable remediation plan starts with identifying what is failing repeatedly, what is overloaded, and what has no monitoring or escalation path. For a financial office, that usually means reviewing switch health, firewall logs, DHCP conflicts, workstation patch status, wireless channel congestion, and the performance of any cloud-synced file repositories. We also verify whether backups are usable and whether key systems can be restored without extended manual rebuilding.
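One of the checks above, looking for DHCP conflicts, can be sketched in a few lines. This is a minimal illustration, not the tooling used in the engagement: it assumes lease records have already been exported as (IP address, MAC address) pairs, and the function name `find_ip_conflicts` and the sample data are hypothetical.

```python
from collections import defaultdict


def find_ip_conflicts(leases):
    """Return {ip: sorted MACs} for every IP claimed by more than one MAC.

    `leases` is an iterable of (ip, mac) string pairs. The input format is
    an assumption; real DHCP servers export leases in different shapes.
    """
    macs_by_ip = defaultdict(set)
    for ip, mac in leases:
        # Normalize case so the same adapter is not counted twice.
        macs_by_ip[ip].add(mac.lower())
    return {ip: sorted(macs) for ip, macs in macs_by_ip.items() if len(macs) > 1}


# Hypothetical sample data for illustration only.
sample_leases = [
    ("10.0.0.21", "AA:BB:CC:00:00:01"),
    ("10.0.0.22", "AA:BB:CC:00:00:02"),
    ("10.0.0.21", "aa:bb:cc:00:00:03"),  # second device claiming .21
    ("10.0.0.22", "aa:bb:cc:00:00:02"),  # same device, different letter case
]

for ip, macs in find_ip_conflicts(sample_leases).items():
    print(f"{ip} is claimed by {len(macs)} devices: {', '.join(macs)}")
```

In this sample only 10.0.0.21 is reported, because the two records for 10.0.0.22 refer to the same adapter once letter case is normalized.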

Once the environment is stabilized, the next step is to standardize support. Businesses that want fewer recurring incidents usually benefit from managed IT support plans for growing Reno businesses that include proactive monitoring, lifecycle planning, and documented response procedures. Security controls should be tightened at the same time, especially where staff rely on email attachments, remote access, or shared financial data. Cybersecurity services in Washoe County belong in the same conversation because unstable environments are easier to exploit. For practical guidance on resilience and recovery planning, the CISA ransomware and resilience guidance is a useful benchmark even when the incident is operational rather than malicious.

- Control step: Standardize patching, alerting, and ticket triage so repeat issues are identified before they become outages.

- Practical action: Replace failing network components, validate backups, segment critical systems where appropriate, and document escalation paths for application, ISP, and internal infrastructure issues.

- Control step: Review endpoint and identity protections.

- Practical action: Enforce MFA, deploy EDR, and remove informal workarounds that bypass normal support and security controls.
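The ticket triage step in the list above can be illustrated with a short sketch that flags repeat issues before they turn into outages. It assumes tickets are available as simple records with an asset, a category, and an opened date. The field names, the 30-day window, and the threshold of three tickets are all hypothetical choices, not values taken from the case study.

```python
from collections import Counter
from datetime import date, timedelta


def repeat_issues(tickets, today, window_days=30, threshold=3):
    """Return sorted (asset, category) pairs with `threshold` or more
    tickets opened within the last `window_days` days.

    The window and threshold defaults are illustrative assumptions.
    """
    cutoff = today - timedelta(days=window_days)
    counts = Counter(
        (t["asset"], t["category"]) for t in tickets if t["opened"] >= cutoff
    )
    return sorted(key for key, n in counts.items() if n >= threshold)


# Hypothetical ticket export for illustration only.
sample_tickets = [
    {"asset": "switch-2", "category": "network", "opened": date(2024, 4, 1)},
    {"asset": "switch-2", "category": "network", "opened": date(2024, 6, 3)},
    {"asset": "ws-14", "category": "login", "opened": date(2024, 6, 5)},
    {"asset": "switch-2", "category": "network", "opened": date(2024, 6, 10)},
    {"asset": "ws-14", "category": "login", "opened": date(2024, 6, 20)},
    {"asset": "switch-2", "category": "network", "opened": date(2024, 6, 24)},
]

flagged = repeat_issues(sample_tickets, today=date(2024, 6, 30))
for asset, category in flagged:
    print(f"Repeat issue: {asset} ({category})")
```

Grouping by asset and category rather than by ticket title is a deliberate simplification: the same underlying fault is often described differently by each person who reports it.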

Field Evidence: Restoring Stability in a Busy Office Corridor

We worked through a similar pattern for a professional office environment serving clients across the Reno-Sparks corridor, where staff had normalized slow systems for months before a shared resource failure disrupted the entire day. Before remediation, the office was seeing repeated login delays, intermittent printer and file-share disconnects, and support requests that stayed open too long because each symptom looked minor on its own.

After replacing a failing switch, cleaning up DHCP conflicts, standardizing workstation updates, and tightening escalation rules, the office moved from reactive firefighting to predictable support. The difference was not just technical. Staff stopped building side processes around unstable systems, and supervisors regained visibility into what was actually broken versus what was simply being tolerated.

- Result: Repeat network-related tickets dropped by 62 percent over the next 60 days, and same-day staff interruptions were reduced enough to restore normal client scheduling and billing flow.

Reference Table: Common Failure Points Behind Operational Drain

Scott Morris is an experienced IT and cybersecurity professional with 16 years of hands-on experience in managed technology services. He specializes in IT support and help desk services and has spent his career building practical recovery, security, and operational continuity processes for businesses across Reno, Sparks, Carson City, Lake Tahoe, and Northern Nevada.

Local Support in Reno, Sparks, Carson City, Lake Tahoe, and Northern Nevada

From our Reno office, we regularly support businesses throughout Sparks and the surrounding corridor where fast response, clear escalation, and practical remediation matter more than temporary workarounds. For offices near Longley Lane and other busy commercial areas, travel time, building layout, provider handoffs, and multi-suite network design can all affect how quickly an issue is isolated and resolved. That is why local context matters when a support problem starts turning into a broader operational drain.

Stabilize the Daily Friction Before It Becomes Downtime

A network crash in a Sparks financial office is usually the end result of unresolved support friction, not an isolated surprise. When slow devices, repeat tickets, and informal workarounds are allowed to continue, the environment becomes harder to support and easier to disrupt. That affects response times, staff focus, client communication, and billing flow long before anyone labels it an outage.

The practical takeaway is straightforward: reduce repeat issues, standardize support, and treat recurring slowness as an operational warning sign. Businesses that do that consistently spend less time recovering from preventable failures and more time keeping core work moving.