Reno/Sparks Network Crash

The outage or lockout is usually the last symptom to appear, not the first. Poor safeguards, inconsistent records handling, and a slow response create weak points that can disrupt governance policy and audit preparation, increase legal exposure, and put reporting obligations and client trust at risk. Reducing that risk starts with documenting safeguards, tightening response steps, and protecting sensitive data.

This case study reflects real breakdown patterns documented across 300+ regional IT incidents. Names and identifying details have been modified for confidentiality, while technical and financial data remain accurate to the original events.

Why a Network Crash Becomes a Legal Liability Problem

For a financial office in Sparks, the network crash is rarely the full story. The real failure usually sits underneath it: weak access controls, incomplete records handling, undocumented exceptions, and no clear chain of response when systems stop behaving normally. That is where legal liability starts to form. If client data is unavailable, altered, or exposed, saying the team did not know what was happening does not carry much weight. In a Reno-area dispute, missing logs, inconsistent retention practices, and unclear approvals can quickly become part of the problem.

We typically see this most clearly in firms that have grown faster than their controls. Shared folders expand, permissions are granted informally, backups exist but are not tested against actual recovery needs, and nobody owns the governance side of the environment. When that happens, a crash can interrupt reporting, delay reconciliations, and create uncertainty around whether regulated or confidential records were handled properly. That is why governance policy and audit preparation in Northern Nevada matter before an outage, not after it. In situations like the one Adrian faced, the immediate downtime is visible, but the larger exposure comes from not being able to prove that safeguards were in place.

- Access control drift: User rights often expand over time without review, creating unnecessary exposure to sensitive financial records.

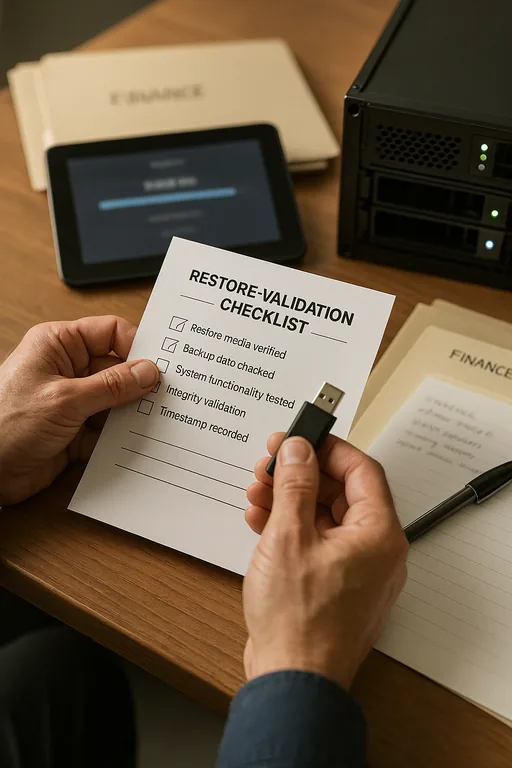

- Unverified recovery processes: Backups may exist, but if restore order, file integrity, and ownership are not tested, recovery can still fail under pressure.

- Incomplete audit trails: Missing logs and inconsistent record retention make it difficult to demonstrate due care after an incident.

- Slow incident escalation: Delayed technical response increases downtime and weakens the organization’s ability to document what happened accurately.
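As a rough illustration of how access control drift can be surfaced, the sketch below flags access grants that have never been reviewed or whose last review is older than a chosen window. The `AccessGrant` record, the sample users and shares, and the 90-day window are all hypothetical and not taken from any specific office; a real review would pull grants from the directory service or file server rather than a hand-built list.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass(frozen=True)
class AccessGrant:
    """One user's access to one resource (hypothetical record layout)."""
    user: str
    resource: str
    last_reviewed: Optional[date]  # None means the grant was never reviewed


def stale_grants(grants: List[AccessGrant], today: date,
                 max_age_days: int = 90) -> List[AccessGrant]:
    """Return grants never reviewed, or last reviewed before the cutoff date."""
    cutoff = today - timedelta(days=max_age_days)
    return [
        g for g in grants
        if g.last_reviewed is None or g.last_reviewed < cutoff
    ]


# Hypothetical sample data for illustration only.
grants = [
    AccessGrant("jlee", "finance-share", date(2024, 1, 10)),
    AccessGrant("mroy", "client-archive", None),
    AccessGrant("tkim", "finance-share", date(2024, 5, 20)),
]

for grant in stale_grants(grants, today=date(2024, 6, 1)):
    print(f"{grant.user} -> {grant.resource}: review overdue")
```

With the sample data above, the grants for `jlee` and `mroy` are reported, since one review is older than 90 days and the other never happened. The review window is a policy decision, so it is left as a parameter rather than fixed in the code.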

Practical Remediation for Financial Offices in Sparks

The fix is not just to reboot equipment or replace a failed switch. The environment needs operational structure. We start by identifying where sensitive data lives, who should have access, how file activity is logged, and what the recovery sequence looks like when a server, firewall, or line-of-business application fails. For firms dealing with audit pressure or legal exposure, that work usually includes permission cleanup, backup validation, MFA hardening, endpoint detection, and written response steps that management can actually follow.

It also helps to review the business side of the environment through technology advisory and assessment for Sparks operations so technical controls line up with reporting obligations and records policy. A practical benchmark for incident planning and protective controls can be found in the CISA guidance for small businesses, which is useful even for established offices that have outgrown informal IT habits.

- Permission review: Remove inherited or outdated access to client folders, finance shares, and archived records.

- Backup validation: Test restores at the file, server, and application level so recovery is measured, not assumed.

- MFA and endpoint controls: Reduce the chance that a simple credential issue becomes a broader access event.

- Incident documentation: Define who escalates, who approves containment, and how evidence is preserved for audit and legal review.
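The backup validation step above can be sketched as a file-level restore check. This minimal example assumes a test restore has been written to a separate directory and that a trusted reference copy of the same files is available for comparison. It hashes both sides with SHA-256 and reports which files match, which are missing, and which differ. The function names and the sample files are hypothetical, and in practice the reference would more likely be a checksum manifest captured at backup time, since live files keep changing after the backup runs.

```python
import hashlib
import tempfile
from pathlib import Path
from typing import Dict, List


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_restore(source_dir: Path, restored_dir: Path) -> Dict[str, List[str]]:
    """Compare every file under source_dir with its copy under restored_dir."""
    report: Dict[str, List[str]] = {"ok": [], "missing": [], "mismatch": []}
    for src in sorted(p for p in source_dir.rglob("*") if p.is_file()):
        rel = src.relative_to(source_dir)
        restored = restored_dir / rel
        if not restored.is_file():
            report["missing"].append(rel.as_posix())
        elif sha256_of(src) != sha256_of(restored):
            report["mismatch"].append(rel.as_posix())
        else:
            report["ok"].append(rel.as_posix())
    return report


# Small demonstration using temporary directories and made-up files.
with tempfile.TemporaryDirectory() as tmp:
    source = Path(tmp) / "source"
    restored = Path(tmp) / "restored"
    source.mkdir()
    restored.mkdir()
    (source / "ledger.csv").write_text("a,b\n1,2\n")
    (source / "notes.txt").write_text("quarter close\n")
    (restored / "ledger.csv").write_text("a,b\n1,2\n")
    # notes.txt is deliberately left out of the restored copy.
    print(verify_restore(source, restored))
```

A report like this only covers file integrity. Restore order, application-level recovery, and ownership still need their own tests, but a saved report gives management dated evidence that a restore was actually exercised.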

Field Evidence: Multi-Office Reporting Recovery in the Sparks Corridor

We worked through a similar pattern with a professional office supporting clients across Sparks and Reno where reporting staff depended on a central file share and a legacy line-of-business application. Before remediation, permissions had been layered over several years, backup jobs were completing without meaningful restore testing, and no one could clearly explain the order of operations during an outage. A single network interruption created hours of confusion because teams did not know whether the issue was connectivity, file corruption, or account lockout.

After cleanup, the office had segmented access by role, documented restore priorities, and aligned leadership around a formal response path. That made a measurable difference during the next service disruption caused by a carrier-side issue affecting a South McCarran corridor building. Instead of broad downtime and uncertain records handling, the team isolated the problem quickly, restored access in sequence, and used IT systems planning for regulated business operations to keep governance and reporting requirements tied to actual infrastructure decisions.

- Result: Recovery time dropped from roughly 3.5 hours of broad interruption to under 50 minutes for priority systems, with documented access review and validated restore evidence available for management.

Financial Office Risk and Control Reference

Scott Morris is an experienced IT and cybersecurity professional with 16 years of hands-on experience in managed technology services. He specializes in governance policy and audit preparation and has spent his career building practical recovery, security, and operational continuity processes for businesses across Sparks, Reno, and Northern Nevada.

Local Support in Sparks, Reno, and Northern Nevada

Financial offices in Sparks often need support that is close enough to respond quickly but structured enough to address governance, audit preparation, and legal exposure at the same time. From our Reno location, the drive to the Q&D Construction Main Yard area typically takes about 10 minutes, which matters when a file access failure or network outage is affecting reporting deadlines, records handling, or client communications.

Operational Takeaway for Financial Offices

A network crash in a financial office is not just an uptime issue. In Sparks, it can quickly become a records, audit, and legal liability problem when access rights are loose, recovery steps are untested, and incident documentation is incomplete. The organizations that handle these events best are usually the ones that already know where sensitive data lives, who can reach it, and how to prove that controls were followed.

The practical path forward is straightforward: tighten permissions, validate backups, preserve logs, and align technical response with governance policy. That reduces downtime, but more importantly, it gives leadership a defensible operating position when clients, auditors, or counsel start asking hard questions.