Reno/Sparks Lockout

The outage or lockout is usually the last symptom to appear, not the first. Unclear ownership, overlapping tools, and fragmented support create weak points that undermine regulatory compliance and put response time, accountability, and outage recovery at risk. Reducing that risk starts with clarifying ownership and enforcing cleaner escalation paths.

This case study reflects real breakdown patterns documented across 300+ regional IT incidents. Names and identifying details have been modified for confidentiality, while technical and financial data remain accurate to the original events.

Why Vendor Chaos Turns a Lockout Into a Compliance Problem

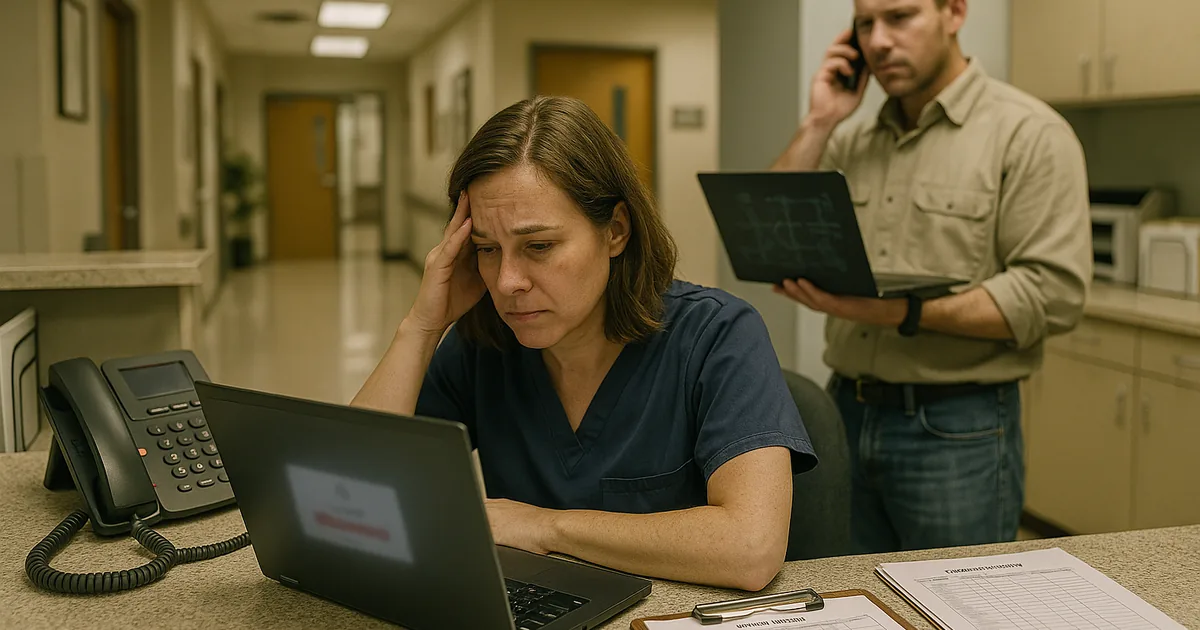

A medical practice in Sparks usually does not get locked out because of one dramatic failure. More often, the lockout happens after months of unclear ownership between the internet carrier, line-of-business software vendor, phone provider, copier support, workstation support, and whoever is supposed to manage Microsoft 365 identities. The immediate symptom is loss of access, but the underlying issue is that no one has authority to coordinate the full stack.

We see this most often when an office manager is forced to act as the unofficial escalation point for every provider. That works until a password sync breaks, a workstation policy conflicts with the EHR login process, or a scanner update interrupts document flow into patient records. At that point, regulatory obligations do not pause. Access logging, retention, secure transmission, and user accountability still matter, which is why practices often need structured regulatory compliance support in Northern Nevada rather than disconnected vendor tickets.

Problems like this rarely stay isolated. Unclear ownership, overlapping tools, and fragmented support erode compliance and create avoidable risk when systems are under strain. In a Sparks medical office, that can mean delayed charting, slower intake, missed authorizations, and confusion over whether the issue belongs to the ISP, the cloud application vendor, or the local network. When Connor could not get a straight answer on who owned authentication, the outage lasted longer than the technical fault itself.

- Technical factor: Identity, network, endpoint, and vendor responsibilities were split across multiple parties with no single escalation owner, which delayed root-cause isolation and extended downtime.

- Operational factor: Front-desk staff, billers, and clinicians depended on the same access chain, so one unresolved lockout disrupted scheduling, documentation, and revenue flow at the same time.

- Compliance factor: When support is fragmented, audit trails, access control decisions, and incident documentation are often incomplete, creating exposure beyond the outage itself.

How to Fix the Ownership Gap Before the Next Outage

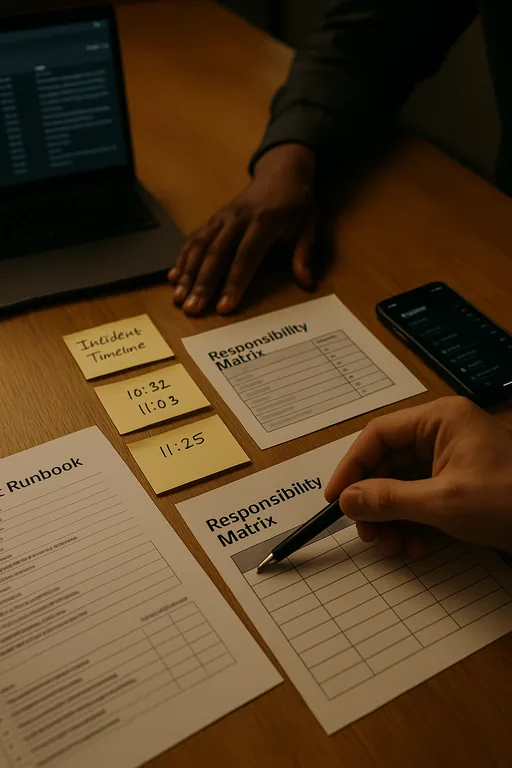

The practical fix is not adding more vendors. It is assigning operational ownership across the environment and documenting who controls authentication, endpoint policy, internet failover, voice systems, EHR integrations, backup validation, and after-hours escalation. For medical practices, that usually means consolidating oversight into a single operating model with defined runbooks, vendor contacts, and response thresholds. A structured approach such as IT operations management for multi-vendor environments gives the practice one accountable path for triage instead of five parallel conversations.

From a technical standpoint, we typically start by mapping dependencies: internet circuit, firewall, DNS, identity provider, endpoint security, EHR access method, scanning workflow, and backup status. Then we remove overlap. If two tools are enforcing conflicting policies, one has to go. If MFA is inconsistently applied, it gets standardized. If backups exist but restores are untested, they are not treated as reliable. For healthcare-related operations, the CISA ransomware and resilience guidance is useful because it aligns technical controls with response discipline rather than product marketing.
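The dependency mapping described above can be sketched in a few lines of Python. This is a minimal illustration, not the tooling used in the case study: the component names, the edges between them, and the `first_failure` helper are all assumptions chosen to mirror the list in the paragraph. The idea is to order components so that each one is checked only after everything it relies on, which points triage at the earliest broken layer instead of the most visible symptom.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical dependency map: each component lists what it relies on.
# The edges are illustrative assumptions, not a documented topology.
DEPENDS_ON = {
    "internet_circuit": [],
    "firewall": ["internet_circuit"],
    "dns": ["firewall"],
    "identity_provider": ["dns"],
    "endpoint_security": ["identity_provider"],
    "ehr_access": ["identity_provider", "endpoint_security"],
    "scanning_workflow": ["ehr_access"],
    "backup_status": ["firewall"],
}


def triage_order(depends_on):
    """Return components ordered so every dependency precedes its dependents."""
    return list(TopologicalSorter(depends_on).static_order())


def first_failure(depends_on, healthy):
    """Return the earliest unhealthy component in dependency order, or None.

    `healthy` maps component name -> bool; a missing entry counts as unhealthy.
    """
    for component in triage_order(depends_on):
        if not healthy.get(component, False):
            return component
    return None


if __name__ == "__main__":
    status = {name: True for name in DEPENDS_ON}
    status["identity_provider"] = False  # the real fault
    status["ehr_access"] = False         # the symptom staff actually notice
    print(first_failure(DEPENDS_ON, status))
```

In this sketch the EHR login is what staff report, but the walk stops at the identity provider first, which is the layer the single escalation owner would need to chase.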

- Control step: Establish a single escalation owner with authority to coordinate all vendors, approve changes, and document incident timelines.

- Practical action: Standardize MFA, validate backup recovery, inventory all admin accounts, and maintain a current responsibility matrix for internet, phones, cloud apps, endpoints, and compliance controls.

- Control step: Separate critical clinical and administrative workflows where possible.

- Practical action: Use network segmentation, tested failover paths, and documented fallback procedures so one vendor issue does not stop the entire office.
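As one way to keep the responsibility matrix current, the steps above can be expressed as a small check that flags ownership gaps before an incident does. The sketch below is an assumption-heavy illustration: the `Assignment` fields, the area names, and the vendor names in the usage example are hypothetical and would need to match the practice's real vendor list.

```python
from dataclasses import dataclass

# Areas named in the responsibility matrix above; adjust to the real environment.
REQUIRED_AREAS = ["internet", "phones", "cloud_apps", "endpoints", "compliance_controls"]


@dataclass
class Assignment:
    area: str                # part of the environment being owned
    owner: str               # party accountable for that area
    escalation_contact: str  # who gets called when it breaks
    after_hours: bool        # whether this owner covers after-hours incidents


def find_gaps(assignments, required=REQUIRED_AREAS):
    """Return {area: problem} for areas with missing, conflicting, or partial ownership."""
    gaps = {}
    for area in required:
        rows = [a for a in assignments if a.area == area]
        owners = {a.owner for a in rows}
        if not rows:
            gaps[area] = "no owner"
        elif len(owners) > 1:
            gaps[area] = "conflicting owners"
        elif not any(a.after_hours for a in rows):
            gaps[area] = "no after-hours coverage"
    return gaps


if __name__ == "__main__":
    # Hypothetical matrix entries for illustration only.
    matrix = [
        Assignment("internet", "Carrier A", "noc@example.com", True),
        Assignment("phones", "Voice Vendor", "support@example.com", False),
        Assignment("endpoints", "Local IT", "help@example.com", True),
        Assignment("endpoints", "EHR Vendor", "ehr@example.com", True),
    ]
    for area, problem in find_gaps(matrix).items():
        print(f"{area}: {problem}")
```

Running a check like this whenever a vendor or contract changes keeps the matrix from drifting out of date, which is the failure mode the lockout scenario describes.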

Field Evidence: Restoring Order After a Multi-Vendor Access Failure

In one Northern Nevada support case, a healthcare-adjacent office operating between Sparks and Reno had recurring login failures tied to a mix of ISP changes, stale admin credentials, and undocumented workstation policies. Before remediation, every outage triggered a chain of finger-pointing between the software vendor, local device support, and the internet provider. The office had no current escalation map, no tested recovery sequence, and no confidence that after-hours incidents would be handled consistently.

After consolidating ownership, documenting vendor boundaries, and aligning endpoint, identity, and network controls, the office moved from reactive ticket chasing to a stable support model. They also adopted managed IT support in Reno to keep monitoring, patching, and escalation under one operational process. That mattered during winter weather and carrier instability, when remote access and voice reliability are often stressed across the region.

- Result: Access-related incidents dropped from repeated monthly disruptions to one minor event in the following quarter, and average recovery time fell from several hours to under 35 minutes.

Reference Table: Where Medical Practice Lockouts Usually Start

Scott Morris is an IT and cybersecurity professional with 16 years of hands-on experience in managed technology services. He specializes in regulatory compliance support and has spent his career building practical recovery, security, and operational continuity processes for businesses across Sparks, Reno, and Northern Nevada.

Local Support in Sparks, Reno, and Northern Nevada

Medical practices in Sparks often depend on systems and vendors spread across Reno, the North Valleys, and remote cloud platforms. That distance matters when a lockout affects intake, billing, or records access. From our office in Reno, the drive to the North Valleys is typically about 25 minutes, so on-site help is never instant. That is why clear escalation ownership, remote access readiness, and documented vendor coordination need to be in place before an incident starts.

Clear Ownership Prevents the Next Lockout

The real issue behind many medical practice lockouts in Sparks is not just technology failure. It is operational ambiguity. When internet, phones, software, endpoints, and compliance responsibilities are split across too many parties, response slows down and accountability disappears at the exact moment the practice needs both.

Reducing that risk means defining ownership before the next outage, validating recovery steps, and making sure one accountable team can coordinate every vendor involved. That approach shortens downtime, protects documentation workflows, and supports the compliance expectations medical offices still have to meet during an incident.