Reno/Sparks IT Gap

The outage or lockout is usually the last symptom to appear, not the first. Phishing clicks, password reuse, and poor account hygiene create openings that can undermine a managed cybersecurity program and put account security, access stability, and business continuity at risk. Reducing that risk starts with tightening identity controls and building safer day-to-day habits.

This case study reflects real breakdown patterns documented across 300+ regional IT incidents. Names and identifying details have been modified for confidentiality, while technical and financial data remain accurate to the original events.

How Human Error Turns Into a Network Crash

In financial offices, a network crash is often not a pure hardware event. More often, we find an identity problem that spreads into a systems problem. A user clicks a fake reset link, reuses a password that was already exposed elsewhere, or approves a login prompt without verifying it. Once that happens, attackers or automated abuse tools can trigger lockouts, overload authentication requests, tamper with mailbox rules, or create enough account instability that staff interpret the event as a network failure.

That is the human element gap. The software stack may be patched, the firewall may be current, and backups may exist, but weak user security habits still create a practical opening. In Sparks and across the Reno-Sparks corridor, financial teams depend on stable access to line-of-business applications, cloud file platforms, email, and secure client communications. When identity controls are weak, even a single phishing event can interrupt operations and undermine otherwise solid managed cybersecurity programs in Northern Nevada. That is why the outage is usually the last symptom, not the first. As with Daniela’s incident, the visible crash often starts with a small decision made under time pressure.

- Technical Factor: Compromised or repeatedly locked user accounts can trigger authentication failures, session instability, mailbox abuse, and access interruptions that look like a broader network outage to end users.

- Operational Factor: Financial offices rely on continuous access to client records, secure file exchange, and billing workflows, so even short identity-related disruptions quickly affect revenue and service delivery.

- Human Factor: Fake password reset emails, MFA fatigue prompts, and password reuse remain common entry points because they target routine staff behavior rather than technical vulnerabilities.

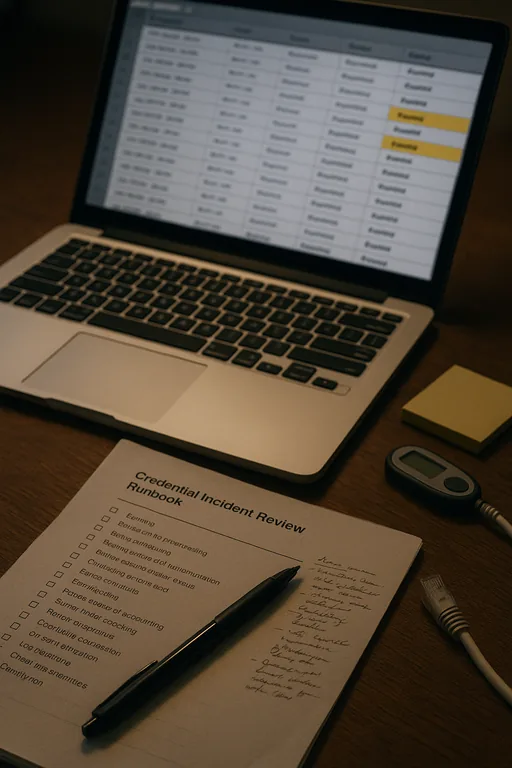

Practical Remediation for Identity-Driven Outages

The fix starts with separating symptoms from root cause. If users are locked out, email rules have changed, or cloud sessions are failing, the first step is to review sign-in logs, conditional access events, endpoint alerts, and recent credential changes. We typically reset exposed credentials, revoke active sessions, confirm MFA enrollment integrity, and isolate any affected endpoints before restoring normal access. Where cloud and on-premise systems overlap, stable recovery also depends on coordinated network, server, and cloud management so authentication, file access, and application dependencies are validated together rather than one at a time.
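As a rough illustration of the sign-in log review described above, the sketch below flags accounts with a burst of failed sign-ins inside a short window, which is one way to tell an identity problem apart from a genuine network fault. The `SignInEvent` record, the `flag_failure_bursts` helper, and the default threshold of 5 failures in 10 minutes are all hypothetical choices for this example, not values taken from any specific identity platform. Real sign-in logs would need to be mapped into this shape first.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class SignInEvent:
    """Hypothetical, simplified sign-in record for illustration only."""
    user: str
    timestamp: datetime
    success: bool


def flag_failure_bursts(events, threshold=5, window=timedelta(minutes=10)):
    """Return users with at least `threshold` failed sign-ins inside any `window`.

    The threshold and window are illustrative assumptions; tune them to the
    lockout policy actually in use.
    """
    failures = defaultdict(list)
    for event in events:
        if not event.success:
            failures[event.user].append(event.timestamp)

    flagged = set()
    for user, times in failures.items():
        times.sort()
        start = 0
        for end in range(len(times)):
            # Slide the left edge forward until the span fits inside `window`.
            while times[end] - times[start] > window:
                start += 1
            if end - start + 1 >= threshold:
                flagged.add(user)
                break
    return sorted(flagged)
```

If the flagged accounts line up with the users reporting "the network is down," that points toward credential abuse or lockouts rather than the ISP or firewall, and those accounts are reasonable first candidates for credential resets and session revocation.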

Longer term, the control set needs to be practical enough for daily use. That means phishing-resistant MFA where possible, password manager adoption, conditional access policies, alerting for impossible travel and suspicious sign-ins, and regular user training tied to real workflows. For financial offices handling sensitive records, the guidance from CISA on strong passwords and account protection is a useful baseline, but the real improvement comes from enforcing those controls consistently across staff, devices, and remote access paths.

- Control Step: Harden MFA, disable legacy authentication, and enforce unique credentials with monitored sign-in alerts so suspicious access attempts are contained before they cascade into downtime.

- Control Step: Validate endpoint health and revoke risky sessions during recovery so restored access does not reintroduce the same compromise path.

- Control Step: Run recurring phishing simulations and short policy refreshers so staff can identify fake reset links and approval prompts during normal work pressure.
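To make the "impossible travel" alerting mentioned among the longer-term controls more concrete, here is a minimal sketch of the underlying check: compare consecutive sign-ins for the same user and flag pairs whose implied travel speed is not plausible. The `GeoSignIn` record, the 900 km/h speed ceiling, and the 50 km jitter allowance are assumptions made for this example. Commercial identity platforms use their own, more sophisticated logic, and IP geolocation is often imprecise, so treat this as an illustration of the idea rather than a production detector.

```python
import math
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class GeoSignIn:
    """Hypothetical sign-in record with an approximate geolocation."""
    user: str
    timestamp: datetime
    lat: float
    lon: float


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometers between two lat/lon points."""
    radius_km = 6371.0  # mean Earth radius
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlam = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2)
    # Clamp to 1.0 to guard against floating-point overshoot.
    return 2 * radius_km * math.asin(math.sqrt(min(1.0, a)))


def impossible_travel(signins, max_kmh=900.0, min_km=50.0):
    """Return (earlier, later) sign-in pairs whose implied speed exceeds max_kmh.

    max_kmh and min_km are illustrative assumptions: roughly airliner speed,
    and a distance floor that ignores ordinary geolocation jitter.
    """
    by_user = {}
    for signin in sorted(signins, key=lambda s: s.timestamp):
        by_user.setdefault(signin.user, []).append(signin)

    alerts = []
    for events in by_user.values():
        for prev, cur in zip(events, events[1:]):
            distance = haversine_km(prev.lat, prev.lon, cur.lat, cur.lon)
            if distance < min_km:
                continue  # too close together to be meaningful
            hours = (cur.timestamp - prev.timestamp).total_seconds() / 3600
            if hours == 0 or distance / hours > max_kmh:
                alerts.append((prev, cur))
    return alerts
```

A sign-in from Reno followed an hour later by one from another continent would be flagged, while a Reno sign-in followed by a Sparks sign-in ten minutes later would not. Flagged pairs are a prompt for review, since VPNs and mobile carriers can produce false positives.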

Field Evidence: Credential Abuse That Looked Like a Core Network Failure

We have seen this pattern in offices operating between Sparks, Reno, and Carson City where staff depend on cloud applications, VoIP, and shared document platforms throughout the day. Before remediation, the environment showed repeated login failures, intermittent file access, and staff assuming the ISP or firewall was at fault. After reviewing authentication logs and endpoint activity, the actual issue traced back to a phishing-triggered credential event combined with weak password reuse and incomplete session controls.

Once identity protections were tightened and supporting systems were reviewed through network infrastructure management for multi-location operations, the office moved from reactive lockout recovery to controlled access restoration. That included better segmentation of critical systems, cleaner alerting, and faster isolation when unusual sign-in activity appeared. In Northern Nevada, where offices often coordinate across satellite locations and mixed internet carriers, that operational discipline matters as much as the security toolset itself.

- Result: Repeated account lockouts dropped by more than 80 percent over the following quarter, and recovery time for access-related incidents was reduced from several hours to under 45 minutes.

Reference Points for Reducing Human-Driven Security Failures

Scott Morris is an IT and cybersecurity professional with 16 years of hands-on experience in managed technology services. He specializes in managed cybersecurity programs and has spent his career building practical recovery, security, and operational continuity processes for businesses across Reno, Sparks, Carson City, and Northern Nevada.

Local Support in Reno, Sparks, Carson City, and Northern Nevada

Reno Computer Services supports organizations throughout the Reno-Sparks area, including offices that need fast coordination between downtown Reno, Sparks, and the north valleys. The route shown below reflects the local service reality tied to this scenario, including support travel toward the Dandini area near TMCC. For financial offices, response planning is not just about distance. It is about restoring secure access, validating account integrity, and getting staff back into core systems without creating a second incident during recovery.

Why the Human Element Has to Be Treated as Core Infrastructure

When a financial office in Sparks experiences a crash, lockout, or access failure, the visible outage is often only the final stage of the problem. Weak password practices, phishing clicks, and inconsistent account controls can destabilize email, cloud access, file systems, and daily operations long before anyone calls it a security incident. That is why identity protection has to be treated as part of business continuity, not just user training.

The practical takeaway is straightforward: reduce credential risk, monitor sign-in behavior, validate recovery steps carefully, and make sure staff habits support the security stack already in place. Businesses that do that consistently see fewer avoidable outages, faster recovery, and less disruption to billing, client service, and internal operations.