Reno/Sparks IT Audit

When a business suffers a network crash, the failure usually started well before the outage. Slow devices, ticket backlogs, and repeated workarounds can weaken security monitoring and response over time and leave financial offices in the Truckee Meadows exposed when pressure hits. Addressing the problem means stabilizing daily support, reducing repeat issues, and standardizing how IT is handled.

This case study reflects real breakdown patterns documented across 300+ regional IT incidents. Names and identifying details have been modified for confidentiality, while technical and financial data remain accurate to the original events.

Why Network Crashes in Financial Offices Usually Start as Operational Drain

A network crash in a financial office rarely begins with one dramatic event. In most cases, it starts with small recurring failures: aging endpoints that take too long to authenticate, unresolved tickets that pile up, printers and scanners that intermittently disconnect, and staff creating manual workarounds just to keep client work moving. In the Truckee Meadows, we often see these issues dismissed as normal office friction until a busy period exposes how fragile the environment has become.

The real problem is that daily IT friction weakens both resilience and visibility. If support is reactive, patching slips, endpoint alerts get ignored, and network performance issues are treated as isolated complaints instead of symptoms. That is where security monitoring and response in Northern Nevada becomes operationally important, not just a security checkbox. In a financial setting, slow systems can interfere with document access, client communications, reconciliation work, and audit readiness. When Lily’s office (the financial office in this case study) hit its outage window, the network failure was only the final stage of a longer pattern of unmanaged drag.

- Ticket backlog: Repeated unresolved issues hide root causes and normalize instability until a switch, firewall, endpoint, or authentication dependency fails under load.

- Slow endpoints: Delayed logins, stalled updates, and poor device health reduce staff throughput and can interrupt security tooling.

- Workarounds: Shared passwords, local file copies, and side-channel communication create both operational inconsistency and audit exposure.

- Monitoring gaps: If alerts are noisy or incomplete, early warning signs around bandwidth saturation, failed backups, or endpoint health are missed.
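One way to keep a backlog from hiding root causes is to count repeat tickets per device and symptom. The following is a minimal sketch, not a description of any particular ticketing system: the record layout, the asset names, the 30-day window, and the threshold of three are all assumptions chosen for illustration.

```python
from collections import Counter
from datetime import date, timedelta

def recurring_issues(tickets, today, window_days=30, threshold=3):
    """Return (asset, category) pairs seen at least `threshold` times in the window.

    `tickets` is an iterable of (opened_on, asset, category) tuples.
    This layout is hypothetical, not a real ticketing-system export format.
    """
    cutoff = today - timedelta(days=window_days)
    counts = Counter(
        (asset, category)
        for opened_on, asset, category in tickets
        if opened_on >= cutoff
    )
    return {key: n for key, n in counts.items() if n >= threshold}

# Illustrative data only; asset names and dates are made up.
tickets = [
    (date(2024, 3, 1), "switch-2f", "dropped session"),
    (date(2024, 3, 8), "switch-2f", "dropped session"),
    (date(2024, 3, 15), "switch-2f", "dropped session"),
    (date(2024, 3, 10), "printer-1", "offline"),
    (date(2024, 1, 5), "switch-2f", "dropped session"),  # outside the window
]

flagged = recurring_issues(tickets, today=date(2024, 3, 20))
print(flagged)
```

Anything this check flags is a candidate for root-cause review rather than another one-off fix. The right window and threshold depend on the office's ticket volume.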

How to Stabilize the Environment Before the Next Outage

The fix is not a single hardware replacement. It starts with reducing repeat issues, standardizing support, and restoring control over the environment. For financial offices, we typically begin by reviewing endpoint health, switch and firewall logs, authentication performance, patch status, backup success rates, and the age of critical network gear. That creates a baseline for what is actually failing versus what staff have simply learned to tolerate.

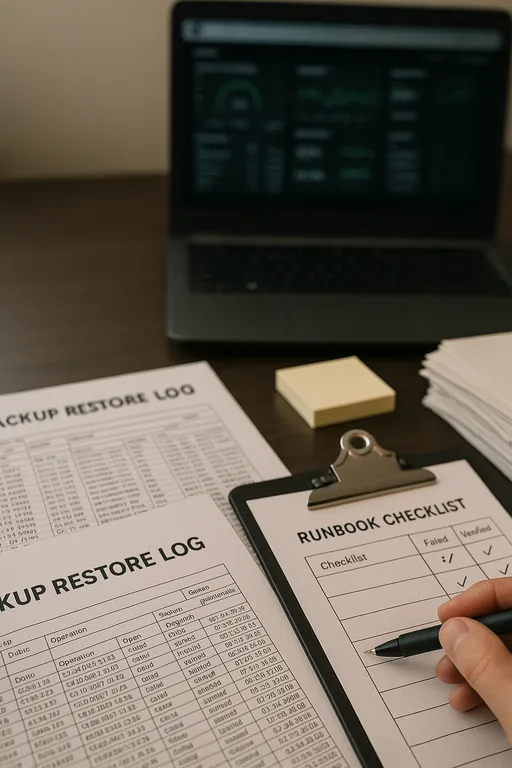

From there, remediation should be tied to business risk. That includes documented escalation paths, tested backup recovery, alert tuning, and access controls that support both uptime and audit requirements. Offices that handle regulated financial data often also need compliance-focused IT management so operational fixes align with retention, access, and reporting obligations. A useful external reference is the CISA ransomware and resilience guidance, especially for backup validation, incident planning, and recovery discipline.

- Network baseline review: Check switch errors, firewall throughput, DHCP and DNS health, and uplink stability to identify where congestion or failure is starting.

- Endpoint control: Standardize patching, remove unsupported devices, and verify EDR coverage so slow machines do not become blind spots.

- Backup validation: Test restores, confirm backup windows complete, and verify that critical financial data is recoverable within acceptable timeframes.

- MFA and access hardening: Reduce risky workarounds by tightening identity controls while keeping staff access predictable.

- Alerting improvements: Tune monitoring so recurring faults are escalated early instead of buried in low-value noise.
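The backup validation step above can be reduced to three questions: did the jobs succeed, was a restore ever tested, and did the restore finish within an acceptable time. The sketch below assumes a hypothetical `BackupJob` record. The 95 percent success target and 240-minute restore limit are placeholder values, not recommendations from this case study.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class BackupJob:
    dataset: str
    succeeded: bool
    restore_minutes: Optional[float]  # None means no restore test was run

def backup_report(jobs, min_success_rate=0.95, max_restore_minutes=240):
    """Summarize backup health; the thresholds are illustrative defaults."""
    if not jobs:
        raise ValueError("no backup jobs to evaluate")
    success_rate = sum(j.succeeded for j in jobs) / len(jobs)
    untested = sorted({j.dataset for j in jobs if j.restore_minutes is None})
    too_slow = sorted({
        j.dataset for j in jobs
        if j.restore_minutes is not None and j.restore_minutes > max_restore_minutes
    })
    return {
        "success_rate": success_rate,
        "meets_target": success_rate >= min_success_rate,
        "untested": untested,   # datasets with no restore test on record
        "too_slow": too_slow,   # datasets whose restore exceeded the limit
    }

# Made-up job results for illustration.
jobs = [
    BackupJob("ledger-db", True, 90),
    BackupJob("client-files", True, 300),
    BackupJob("mail-archive", False, None),
    BackupJob("scans", True, None),
]
report = backup_report(jobs)
print(report)
```

Reporting untested datasets separately from failed ones matters because a backup that completes but has never been restored is still an unknown.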

Field Evidence: Recovering from a Slow-Burn Network Failure

We recently assessed an office in a Reno-area business corridor where staff had been dealing with intermittent slowness for months. The symptoms looked minor at first: delayed file opens, dropped sessions to a hosted application, and recurring complaints tied to one wing of the office. After review, the actual issue was a combination of aging switching hardware, inconsistent endpoint patching, and no clear threshold for escalating recurring tickets. During peak activity, the office effectively ran out of headroom.

After replacing the unstable network segment, cleaning up endpoint health, validating backups, and documenting escalation rules, the office moved from repeated interruptions to a far more predictable support model. As part of that process, leadership also used risk assessments and security readiness planning to identify where operational friction was creating hidden business exposure across users, devices, and shared systems.

- Result: Repeat network-related tickets dropped by 62 percent over the next 90 days, backup success rates stabilized, and staff regained consistent access during high-volume reporting periods.
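A figure like the one above comes from comparing repeat-ticket counts across two equal windows. The sketch below shows only the arithmetic. The counts of 50 and 19 are hypothetical numbers chosen to produce a 62 percent reduction and are not the ticket volumes from this engagement.

```python
def percent_reduction(before, after):
    """Percentage drop from `before` to `after` (negative if the count rose)."""
    if before <= 0:
        raise ValueError("baseline count must be positive")
    return (before - after) * 100 / before

# Hypothetical repeat-ticket counts for two 90-day windows.
reduction = percent_reduction(before=50, after=19)
print(f"{reduction:.0f}% fewer repeat tickets")
```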

Operational Reference Points for Financial Office Network Stability

Scott Morris is an experienced IT and cybersecurity professional with 16 years of hands-on experience in managed technology services. He specializes in security monitoring and response and has spent his career building practical recovery, security, and operational continuity processes for businesses across the Truckee Meadows and Northern Nevada.

Local Support in The Truckee Meadows

Reno Computer Services supports financial offices across Reno, Sparks, and nearby business corridors where recurring IT friction can quietly turn into a larger outage. From our Ryland Street location, the Kings Row area is typically about 10 minutes away, which gives a sense of our local support footprint for offices that need faster stabilization, clearer escalation, and more consistent handling of daily technology issues.

Operational Takeaway for Financial Offices in The Truckee Meadows

A network crash is often the visible result of a longer pattern of unmanaged friction. Slow devices, unresolved tickets, inconsistent support practices, and weak escalation standards all reduce the margin for error. In a financial office, that affects more than convenience. It disrupts billing, reporting, client communication, and the ability to maintain a controlled operating environment.

The practical response is to treat recurring IT issues as operational signals, not background noise. Once support is standardized, monitoring is tuned, backups are tested, and infrastructure weak points are addressed, the business is in a much better position to prevent unnecessary downtime and respond cleanly when pressure rises.