Reno/Sparks Hub Risk

Operational drain rarely appears all at once. For logistics hubs in Northern Nevada, it usually builds through slow devices, ticket backlogs, and repeated workarounds, then surfaces as stopped operations, slower recovery, or higher exposure. A more reliable setup starts with stabilizing daily support, reducing repeat issues, and standardizing how IT is handled.

This case study reflects real breakdown patterns documented across 300+ regional IT incidents. Names and identifying details have been modified for confidentiality, while technical and financial data remain accurate to the original events.

Why Operational Drain Stops Logistics Work

The main question here is straightforward: why do logistics operations suddenly stop when there was no obvious major outage the day before? In most cases, it is not one dramatic failure. It is the operational drain created by unresolved small issues: aging endpoints, inconsistent patching, overloaded shared systems, weak ticket triage, and staff relying on repeated workarounds. For logistics hubs in Reno, Sparks, and the broader Northern Nevada corridor, those small failures compound quickly because shipping, receiving, inventory movement, and billing all depend on fast, predictable system response.

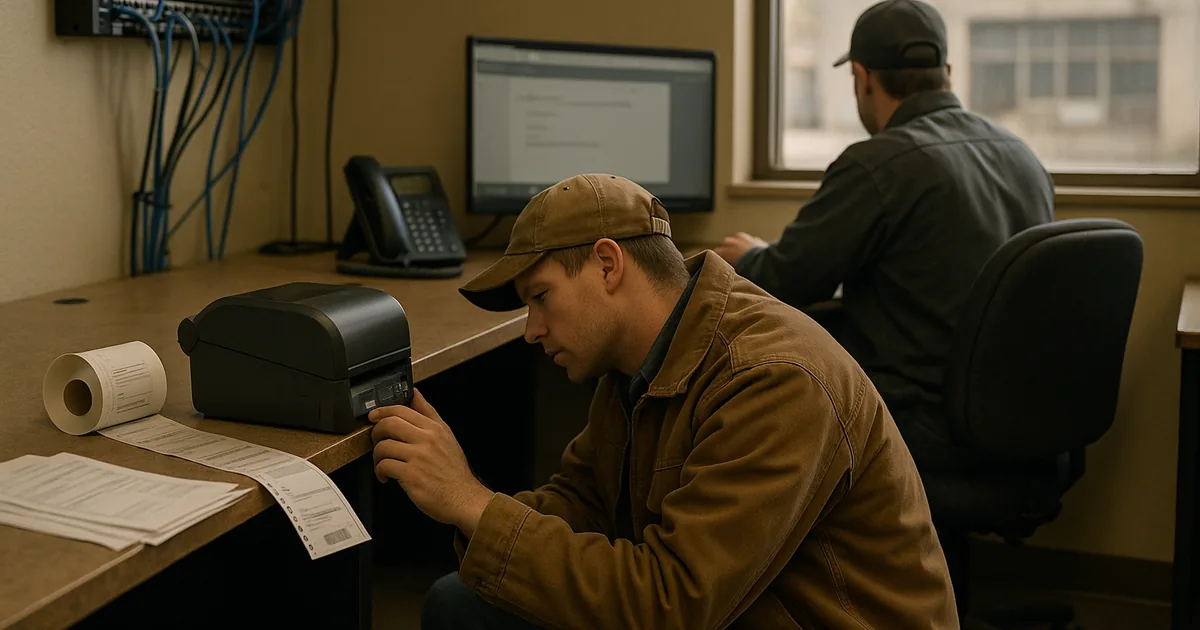

We typically find that once teams start saying a device is “always a little slow” or a printer “usually needs to be retried,” the business is already paying for the problem. That cost shows up as missed scan events, delayed dock updates, duplicate entry, and supervisors spending time chasing status instead of managing flow. This is where structured business IT operations management in Northern Nevada matters. It reduces the backlog of recurring issues before they affect dispatch timing, warehouse coordination, or customer communication. In situations like these, the visible outage is only the final symptom of a longer pattern.

- Endpoint sprawl: Mixed device age, inconsistent updates, and unmanaged local settings create slow logons, application hangs, and unreliable peripheral behavior.

- Ticket backlog: Repeated low-grade issues stay open too long, so staff normalize broken processes instead of restoring stable operations.

- Shared resource dependency: File shares, print queues, and line-of-business apps become bottlenecks when no one is measuring performance or ownership clearly.

- User workaround risk: Manual spreadsheets, shared passwords, and side-channel communication increase error rates when systems are under strain.
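One way to make the ticket backlog pattern visible is to count how often the same device and issue type recur, and to list open tickets that have aged past a cutoff. The following is a minimal sketch, not a description of any particular ticketing system: the ticket tuples, device names, dates, and both thresholds (`min_count`, `max_age_days`) are hypothetical and would need to match whatever your help desk tool actually exports.

```python
from collections import Counter
from datetime import date

# Hypothetical ticket export: (device, issue category, opened, closed or None).
TICKETS = [
    ("dock-printer-2", "print queue", date(2024, 3, 4), date(2024, 3, 4)),
    ("dock-printer-2", "print queue", date(2024, 3, 11), date(2024, 3, 11)),
    ("dock-printer-2", "print queue", date(2024, 3, 18), None),
    ("scan-station-1", "slow logon", date(2024, 3, 6), None),
    ("dispatch-pc-4", "app hang", date(2024, 3, 19), date(2024, 3, 20)),
]


def repeat_issues(tickets, min_count=3):
    """Return (device, category) pairs ticketed at least min_count times."""
    counts = Counter((device, category) for device, category, _, _ in tickets)
    return {key: n for key, n in counts.items() if n >= min_count}


def stale_open_tickets(tickets, today, max_age_days=7):
    """Return open tickets older than max_age_days, oldest first."""
    stale = [
        (device, category, (today - opened).days)
        for device, category, opened, closed in tickets
        if closed is None and (today - opened).days > max_age_days
    ]
    return sorted(stale, key=lambda row: row[2], reverse=True)


if __name__ == "__main__":
    today = date(2024, 3, 20)  # fixed date so the example is repeatable
    print(repeat_issues(TICKETS))
    print(stale_open_tickets(TICKETS, today))
```

A pair that shows up in `repeat_issues` is a candidate for a root-cause fix rather than another retry, and anything in `stale_open_tickets` is a candidate for escalation.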

How to Stabilize Daily Support and Reduce Repeat Failures

The fix is usually less about a single tool and more about restoring operational discipline. Start by identifying the systems that directly affect throughput: dispatch workstations, warehouse printers, shared folders, identity services, line-of-business applications, and internet fail points. Then measure where delays actually occur. Once that is visible, standardize endpoint health, tighten escalation rules, and remove the repeat issues that consume staff time every week.
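Measuring where delays occur can start with something as simple as comparing the typical and worst-case response time for each system. The sketch below assumes you can collect timing samples in seconds (for example from logon or print logs); the system names and numbers are hypothetical illustrations, not data from the case described in this article.

```python
from statistics import median, quantiles

# Hypothetical timing samples in seconds for two systems.
SAMPLES = {
    "label print": [3, 4, 3, 3, 4, 3, 5, 4, 3, 4],
    "dispatch logon": [12, 14, 13, 15, 55, 13, 12, 14, 16, 60],
}


def summarize(samples):
    """Return (system, median, 95th percentile) rows, slowest tail first."""
    rows = []
    for system, values in samples.items():
        # n=20 yields 19 cut points; the last one is the 95th percentile.
        p95 = quantiles(values, n=20, method="inclusive")[-1]
        rows.append((system, median(values), p95))
    return sorted(rows, key=lambda row: row[2], reverse=True)


if __name__ == "__main__":
    for system, mid, p95 in summarize(SAMPLES):
        print(f"{system}: median {mid}s, p95 {p95}s")
```

A large gap between the median and the 95th percentile is often the signature of the "always a little slow" complaint: most attempts are fine, but the occasional long stall is what staff remember and work around.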

For many logistics environments, that means combining proactive monitoring with stronger endpoint and threat protection for logistics operations, because unstable devices and security gaps often overlap. A workstation that is underpatched, overloaded, or running unauthorized software is both a productivity problem and a security problem. We also recommend aligning controls with CISA’s ransomware and resilience guidance, especially for organizations that depend on uninterrupted access to scheduling, inventory, and billing systems.

- Endpoint standardization: Bring workstations onto a consistent hardware, patching, and software baseline so performance issues can be identified quickly.

- Alerting improvements: Monitor disk health, login failures, print queue errors, and application latency before users report them.

- Identity hardening: Remove shared credentials, enforce MFA, and tighten access paths for dispatch, finance, and operations staff.

- Backup validation: Test file and system recovery regularly so a stalled operation does not turn into a prolonged outage.
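The alerting item in the list above can be illustrated with a simple threshold check that runs against collected metrics before a user files a ticket. This is a sketch under stated assumptions: the metric names, threshold values, and host name are hypothetical, and a real deployment would normally rely on the alerting rules of whatever monitoring platform is already in place.

```python
# Hypothetical thresholds; tune them to each site's normal behavior.
# "min" means alert when the value falls below the limit,
# "max" means alert when the value rises above it.
THRESHOLDS = {
    "disk_free_percent": ("min", 15),
    "failed_logins_per_hour": ("max", 20),
    "print_queue_errors": ("max", 5),
    "app_latency_ms": ("max", 2000),
}


def check_metrics(host, metrics, thresholds=THRESHOLDS):
    """Return a list of alert strings for metrics outside their threshold."""
    alerts = []
    for name, (kind, limit) in thresholds.items():
        if name not in metrics:
            continue  # metric not collected on this host
        value = metrics[name]
        breached = value < limit if kind == "min" else value > limit
        if breached:
            alerts.append(f"{host}: {name}={value} (limit {kind} {limit})")
    return alerts


if __name__ == "__main__":
    sample = {"disk_free_percent": 9, "app_latency_ms": 850}
    for alert in check_metrics("dispatch-pc-4", sample):
        print(alert)
```

Keeping the thresholds in one table makes it easier to review them alongside the endpoint baseline, so an alert reflects a deviation from the standard rather than one technician's judgment.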

Field Evidence: From Daily Friction to Stable Throughput

We worked with a Northern Nevada operation serving regional freight movement between Reno and Carson City where the reported issue was “random slowness.” In practice, the environment had recurring login delays, intermittent scan station failures, and a growing list of unresolved support tickets. Morning receiving windows were being compressed because staff spent the first part of the day reconnecting printers, reopening applications, and confirming whether shared files had updated correctly.

After standardizing endpoints, cleaning up permissions, tightening queue monitoring, and improving user-side controls with identity and email security for business systems, the environment became more predictable. That mattered operationally because the site could handle early shipment volume without supervisors stepping in to manually route around IT issues. In warehouse and logistics settings near older commercial buildings in Reno, we often see a mix of legacy cabling, piecemeal device additions, and inconsistent support ownership creating exactly this kind of drag.

- Result: Recurring support tickets dropped by 43 percent over 90 days, morning startup delays were reduced by roughly 75 minutes per day, and billing handoff errors declined after access and device performance were standardized.

Operational Risk Controls for Logistics IT Environments

Scott Morris is an experienced IT and cybersecurity professional with 16 years of hands-on experience in managed technology services. He specializes in business IT operations management and has spent his career building practical recovery, security, and operational continuity processes for businesses across Reno, Sparks, Carson City, Lake Tahoe, and Northern Nevada.

Local Support in Reno and Northern Nevada

Logistics and distribution teams in Reno often need support that understands both the technical issue and the operational timing behind it. From our Ryland Street office, the South Virginia Street corridor is only a short drive, which helps when a problem affects dispatch, receiving, or billing and needs direct coordination. That local proximity matters, but the larger value is having repeatable support processes that reduce the daily friction that causes operations to stall.

Operational Stability Comes From Fixing the Small Failures Early

The operational drain risk in logistics is rarely a mystery. It usually starts with small recurring issues that are tolerated too long, then expands into slower throughput, delayed billing, and avoidable downtime. For Northern Nevada logistics hubs, the answer is not a patchwork of workarounds. It is consistent support, clear ownership, and systems that are measured before they fail under load.

When daily IT friction is reduced, teams move freight, process updates, and close out work with fewer interruptions. That is the practical goal: fewer repeat tickets, faster recovery, and less dependence on manual fixes when operations are already under pressure.