Reno/Sparks Data Breach

The outage or lockout is usually the last symptom to appear, not the first. Slow devices, ticket backlogs, and repeated workarounds create weak points that undermine governance policy and audit preparation and put productivity, response times, and team focus at risk. Reducing that risk starts with stabilizing daily support, eliminating repeat issues, and standardizing how IT is handled.

This case study reflects real breakdown patterns documented across 300+ regional IT incidents. Names and identifying details have been modified for confidentiality, while technical and financial data remain accurate to the original events.

Why Operational Drain Turns Into Breach Exposure

For construction firms in Sparks, a data breach often starts as ordinary friction rather than a dramatic event. Slow estimating workstations, stale permissions, overloaded shared folders, and ticket queues that never fully clear create a pattern of exceptions. Staff begin storing files locally, forwarding documents through personal inboxes, or reusing credentials because the approved process is too slow. That is the operational drain: small daily glitches acting like death by a thousand cuts until governance policy and audit preparation begin to slip.

We typically find that the breach risk is not only technical; it is also procedural. When support is inconsistent, patching falls behind, access reviews lapse, and logs go unchecked long enough that early signs of misuse are missed. That is why firms trying to tighten controls around vendor records, payroll data, project financials, and contract documentation often need structured governance policy and audit preparation in Northern Nevada before the next lockout or disclosure event. In cases like Easton’s, the visible problem was account access, but the real issue was unmanaged operational friction that had already normalized unsafe workarounds.

- Technical factor: Repeated support delays lead to unpatched endpoints, inconsistent identity controls, and undocumented exceptions that increase the chance of credential theft, unauthorized file access, and audit gaps.

- Operational factor: Construction teams depend on fast access to plans, change orders, billing records, and vendor communications; when systems lag, staff bypass controls to keep projects moving.

- Local factor: Multi-site coordination across Sparks, Reno, and nearby job locations makes it harder to maintain consistent permissions, device standards, and response processes without centralized oversight.

How To Reduce Breach Risk Without Slowing The Business Down

The fix is not a single security product. It is a controlled cleanup of the daily support environment. Start by reducing ticket backlog, standardizing endpoint configuration, removing shared credentials, and enforcing role-based access for project files, accounting systems, and cloud email. Then validate what is actually recoverable if a mailbox, file share, or line-of-business platform is compromised. Firms that rely on project schedules and billing cycles should also maintain tested managed backup solutions for business continuity so recovery does not depend on assumptions.
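As a rough illustration of the access cleanup described above, the sketch below flags shared credentials, missing MFA, and access that goes beyond what a role needs. The role names, resource names, and the `Account` fields are hypothetical placeholders, not taken from any specific identity platform; in practice this data would come from an export of your directory or identity provider.

```python
from dataclasses import dataclass

# Hypothetical role-to-resource policy; names are illustrative only.
ROLE_POLICY = {
    "estimator": {"project_files"},
    "accounting": {"accounting_system", "project_files"},
    "admin": {"project_files", "accounting_system", "cloud_email_admin"},
}


@dataclass(frozen=True)
class Account:
    name: str
    role: str
    resources: frozenset  # resources the account can currently reach
    user_count: int       # number of people who use these credentials
    mfa_enabled: bool


def audit_account(account):
    """Return a list of findings for one account (empty list means clean)."""
    findings = []
    if account.user_count > 1:
        findings.append("shared credential")
    if not account.mfa_enabled:
        findings.append("MFA not enforced")
    # Anything outside the role's allowed set is treated as excess access.
    allowed = ROLE_POLICY.get(account.role, set())
    extra = sorted(account.resources - allowed)
    if extra:
        findings.append("access beyond role: " + ", ".join(extra))
    return findings
```

Running a check like this against every account on a schedule turns the access review into a repeatable report rather than a one-time cleanup. An unknown role is treated as having no allowed resources, so unclassified accounts surface as findings instead of passing silently.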

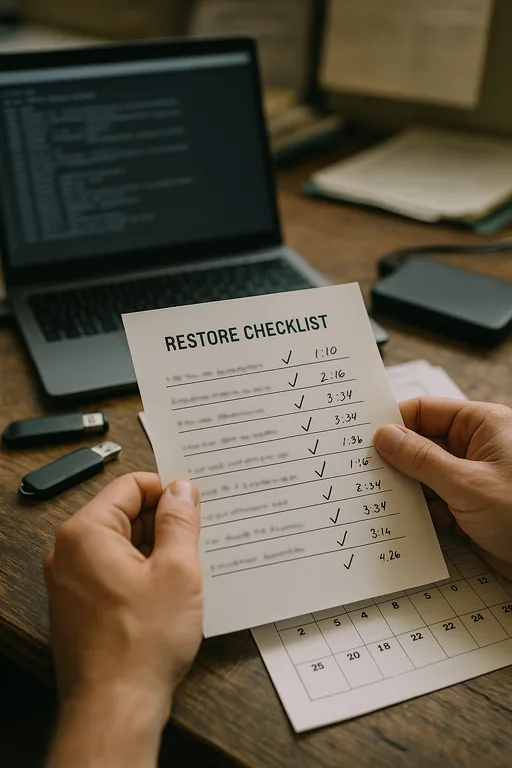

From a remediation standpoint, we focus on identity hardening, endpoint visibility, and recovery discipline. Multi-factor authentication should be enforced across email, remote access, and admin accounts. Endpoint detection and response should be tuned to catch unusual sign-ins, script activity, and file access patterns. Backup jobs should be verified through restore testing, not just green checkmarks. The CISA ransomware and recovery guidance is useful here because it aligns practical controls with incident containment and restoration steps that smaller operations can actually implement.

- Access control: Remove shared accounts, apply MFA, and review permissions by job role and project need.

- Endpoint control: Standardize patching, deploy EDR, and isolate unmanaged devices from core file and finance systems.

- Backup validation: Test restores for email, file shares, and project data on a scheduled basis.

- Alerting improvement: Escalate suspicious sign-ins, mailbox forwarding rules, and unusual file activity before they become outages.
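The backup validation item above can be sketched as a simple restore check: restore a sample folder to a scratch location, then compare every file against a reference copy by SHA-256 hash. This is a minimal sketch, not a replacement for a backup product's own verification; the function names and directory arguments are hypothetical, and it assumes the reference copy has not changed since the backup was taken.

```python
import hashlib
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so large project files do not fill memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_restore(source_dir, restored_dir):
    """Compare a restored folder against its reference copy.

    Returns a list of (relative_path, problem) tuples; an empty list
    means every reference file was restored with identical content.
    """
    source_dir, restored_dir = Path(source_dir), Path(restored_dir)
    problems = []
    for src in sorted(p for p in source_dir.rglob("*") if p.is_file()):
        rel = src.relative_to(source_dir)
        restored = restored_dir / rel
        if not restored.is_file():
            problems.append((rel.as_posix(), "missing"))
        elif sha256_of(src) != sha256_of(restored):
            problems.append((rel.as_posix(), "content mismatch"))
    return problems
```

An empty result from `verify_restore` is stronger evidence than a completed backup job, because it shows the files actually came back intact. Files that exist only in the restored folder are not reported here; that is a deliberate simplification.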

Field Evidence: From Daily Friction To Controlled Recovery

We worked through a similar pattern with a regional contractor operating between Sparks industrial corridors and Reno administrative offices. Before remediation, the environment had recurring login issues, inconsistent laptop builds, and project folders with broad inherited permissions. Staff were spending time reopening tickets for the same problems, and leadership had limited confidence in what would happen if email or file access failed during payroll or month-end billing.

After standardizing endpoint management, tightening identity controls, validating backups, and documenting escalation paths, the business moved from reactive troubleshooting to predictable recovery. Just as important, they added disaster recovery planning for multi-location operations so a single compromised account or file server issue would not stall the entire office. That changed the conversation from constant firefighting to measurable operational resilience.

- Result: Repeat support tickets dropped by 41 percent over one quarter, backup validation moved from untested to monthly verified restores, and project administration teams regained several hours per week that had previously been lost to recurring access issues.

Operational Controls That Matter Most

Scott Morris is an IT and cybersecurity professional with 16 years of hands-on experience in managed technology services. He specializes in governance policy and audit preparation and has spent his career building practical recovery, security, and operational continuity processes for businesses across Sparks, Reno, and Northern Nevada.

Local Support in Sparks, Reno, and Northern Nevada

Construction and industrial businesses around Sparks often need support that understands both office systems and field-driven workflows. From Reno Computer Services at 500 Ryland Street, the drive to the Dermody area typically takes about 13 minutes, which makes it practical to support organizations that need fast on-site follow-up, coordinated recovery work, and better day-to-day control over the systems that affect billing, scheduling, and audit readiness.

Stabilize The Daily Environment Before The Breach Becomes Visible

For many Sparks construction firms, breach exposure is the downstream result of unresolved daily IT friction. Slow systems, recurring tickets, broad permissions, and untested recovery processes wear down staff discipline and create the conditions for account compromise, data loss, and audit trouble. The visible outage is usually late in the timeline.

The practical takeaway is straightforward: reduce repeat issues, standardize support, tighten identity and access controls, and verify recovery before an incident forces the issue. When those basics are handled consistently, governance policy and audit preparation become easier to maintain, and the business spends less time compensating for preventable technical drag.