Reno Network Crash

By the time a business is dealing with a network crash, the failure has usually been building for a while. Unclear ownership, overlapping tools, and fragmented support weaken business IT operations management over time and leave financial offices in The Truckee Meadows exposed when pressure hits. Addressing the problem means clarifying ownership and enforcing cleaner escalation paths.

This case study reflects real breakdown patterns documented across 300+ regional IT incidents. Names and identifying details have been modified for confidentiality, while technical and financial data remain accurate to the original events.

Why Vendor Chaos Turns a Network Problem Into a Business Outage

A network crash in a financial office is often not a single-device failure. More often, we find a chain of unmanaged dependencies: ISP equipment installed without documentation, firewall changes made by one vendor, cloud application assumptions made by another, and no one clearly responsible for the full environment. In The Truckee Meadows, where many offices rely on a mix of internet, VoIP, cloud platforms, and aging on-premise hardware, that gap shows up fast when the office is under pressure.

The vendor chaos problem is operational before it is technical. An office manager should not be coordinating internet, phones, software, and hardware vendors during an outage. That is exactly where structured business IT operations management in Northern Nevada matters. It establishes ownership, defines escalation paths, and keeps one party accountable for the whole incident instead of letting each vendor defend only its own piece. In cases like Weston’s, the real damage comes from delay, not just from the original fault.

- Technical factor: Overlapping vendor responsibilities around firewall routing, switch configuration, ISP handoff equipment, and cloud application access create slow diagnosis and conflicting instructions during a live outage.

- Operational factor: Financial staff lose access to client records, custodial portals, document systems, and billing workflows at the same time, which compounds downtime.

- Business consequence: Delayed appointments, missed processing windows, and incomplete audit trails can create both revenue disruption and compliance exposure.

How to Close the Ownership Gap Before the Next Crash

The fix is not just replacing hardware. The first step is assigning clear operational ownership for the full stack: carrier circuit, firewall, switching, wireless, server dependencies, cloud identity, and line-of-business applications. We typically start by documenting who owns each layer, who has admin access, what the escalation order is, and what evidence must be collected before a ticket is handed off. That reduces finger-pointing and shortens recovery time.
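As a rough illustration of what that documentation step can look like, the sketch below records the four items for each layer and flags whatever is still undocumented. The layer names come from the paragraph above; the `LayerOwnership` structure, the `find_gaps` helper, and every owner or contact shown are hypothetical placeholders, not a description of any specific tool or client environment.

```python
from dataclasses import dataclass, field

# Layers named in the text; everything else in this sketch is illustrative.
LAYERS = [
    "carrier circuit",
    "firewall",
    "switching",
    "wireless",
    "server dependencies",
    "cloud identity",
    "line-of-business applications",
]


@dataclass
class LayerOwnership:
    """The four things to document per layer (hypothetical structure)."""
    owner: str = ""                                        # who owns the layer
    admin_access: list = field(default_factory=list)       # who holds admin access
    escalation_order: list = field(default_factory=list)   # who is called, in order
    handoff_evidence: list = field(default_factory=list)   # evidence required before handoff


def find_gaps(model):
    """Return {layer: [missing fields]} for every layer that is not fully documented."""
    gaps = {}
    for layer in LAYERS:
        entry = model.get(layer, LayerOwnership())
        missing = [name for name, value in vars(entry).items() if not value]
        if missing:
            gaps[layer] = missing
    return gaps


# Hypothetical, partially completed ownership model.
model = {
    "carrier circuit": LayerOwnership(
        owner="ISP (placeholder)",
        admin_access=["ISP support desk"],
        escalation_order=["ISP tier 1", "ISP tier 2"],
        handoff_evidence=["modem status", "traceroute output"],
    ),
    "firewall": LayerOwnership(owner="Managed IT provider (placeholder)"),
}

gaps = find_gaps(model)
for layer, missing in gaps.items():
    print(f"{layer}: missing {', '.join(missing)}")
```

In this example only the carrier circuit is fully documented; the firewall has an owner but nothing else, and the remaining five layers have no entry at all. A gap report like this is a reasonable starting agenda for the first ownership review.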

From there, the environment needs technical controls that support clean incident response. That includes current network diagrams, monitored uplinks, configuration backups, tested failover where justified, and server visibility tied into server and hybrid infrastructure management for Reno-area offices. If Microsoft 365, SharePoint, or Entra ID are part of the workflow, those dependencies also need to be tracked because a network event can look like an application outage when identity or sync issues are involved. CISA’s guidance on cybersecurity performance goals is useful here because it reinforces practical controls around asset inventory, secure configuration, backups, and centralized logging.

- Control step: Build a single incident ownership model with documented vendor contacts, admin credential control, network diagrams, monitored alerts, backup configuration exports, and a defined escalation matrix for internet, firewall, switching, and cloud dependencies.

- Control step: Validate failover and recovery procedures quarterly so outage response is based on tested process rather than vendor assumptions.

- Control step: Tie cloud identity, email, and file access into the same operational review so network and application troubleshooting are not handled in isolation.
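The quarterly validation step above is easy to let slip, so it helps to track it explicitly. The sketch below is one minimal way to do that, assuming "quarterly" is treated as roughly 92 days; the procedure names and dates are made up for illustration.

```python
from datetime import date, timedelta

# Assumption: "quarterly" is approximated as 92 days.
QUARTER = timedelta(days=92)


def overdue_procedures(last_tested, today):
    """Return procedures never tested, or last tested more than a quarter ago."""
    overdue = []
    for name, tested_on in last_tested.items():
        if tested_on is None or today - tested_on > QUARTER:
            overdue.append(name)
    return sorted(overdue)


# Hypothetical test log: procedure name -> date of last successful test.
last_tested = {
    "internet failover": date(2024, 1, 15),
    "firewall config restore": date(2024, 5, 2),
    "switch config restore": None,  # never tested
}

result = overdue_procedures(last_tested, today=date(2024, 6, 1))
print(result)  # ['internet failover', 'switch config restore']
```

A procedure that has never been tested is treated the same as one that is overdue, which matches the point of the control: recovery should rest on tested process, not on vendor assumptions.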

Field Evidence: Multi-Vendor Failure Across a Sparks Financial Workflow

We have seen this pattern in offices moving between Reno and Sparks where a single branch circuit issue exposed undocumented switch changes, stale firewall rules, and no agreed handoff between the ISP and the managed application vendor. Before remediation, staff were opening tickets with three separate providers while trying to restore phones, internet, and access to shared files. The result was a long outage window and no reliable timeline for recovery.

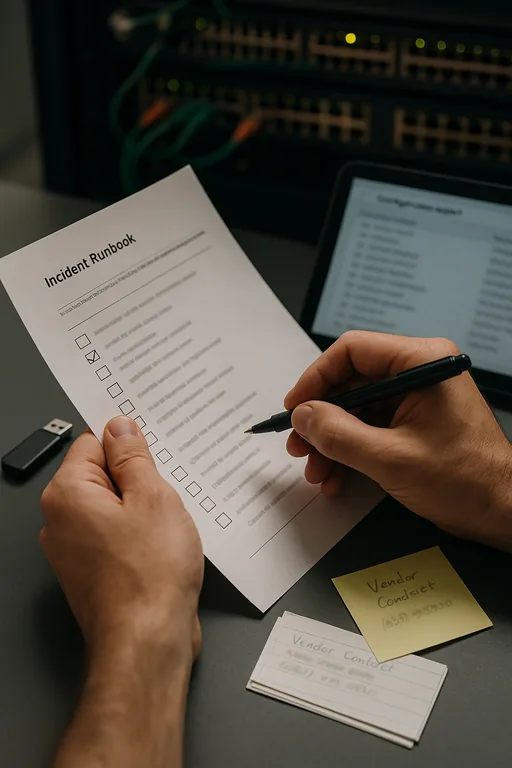

After consolidating documentation, assigning one escalation owner, and aligning Microsoft and file access dependencies through cloud and Microsoft environment management, the same type of incident became much more controlled. Instead of debating ownership, the office had a known runbook, current credentials, and a verified path for restoring connectivity and user access. That is especially important in Northern Nevada business corridors where multi-site coordination and carrier handoffs can slow response if no one is steering the event.

- Result: Mean time to isolate the fault dropped from nearly 3 hours to under 40 minutes, and user-impacting downtime was reduced by more than half during the next comparable connectivity incident.
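To put the isolation-time figure in relative terms, the short calculation below uses 180 and 40 minutes as stand-ins for "nearly 3 hours" and "under 40 minutes". The exact measurements are not given above, so the output is an approximation rather than a reported result.

```python
def percent_reduction(before_minutes, after_minutes):
    """Percentage drop from a 'before' duration to an 'after' duration."""
    if before_minutes <= 0:
        raise ValueError("before_minutes must be positive")
    return (before_minutes - after_minutes) / before_minutes * 100


# Stand-in values: 180 min for "nearly 3 hours", 40 min for "under 40 minutes".
reduction = percent_reduction(180, 40)
print(f"{reduction:.1f}%")  # 77.8%
```

On those stand-in values, time to isolate the fault fell by roughly three quarters.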

Operational Controls That Reduce Vendor-Driven Network Failures

Scott Morris is an experienced IT and cybersecurity professional with 16 years of hands-on experience in managed technology services. He specializes in business IT operations management and has spent his career building practical recovery, security, and operational continuity processes for businesses across The Truckee Meadows and Northern Nevada.

Local Support in The Truckee Meadows

Reno Computer Services supports businesses across Reno, Sparks, and the surrounding Truckee Meadows with practical oversight for vendor coordination, outage response, and infrastructure accountability. For offices near Greg Street and other industrial and commercial corridors, local proximity matters when a network issue affects phones, internet access, or financial workflows that cannot wait for a remote blame cycle to play out.

Clear Ownership Prevents Repeat Network Failures

When a financial office in The Truckee Meadows suffers a network crash, the visible outage is usually the last stage of a longer management problem. Unclear ownership, fragmented vendors, and undocumented dependencies make recovery slower and more expensive than it needs to be. The practical answer is not guesswork. It is defined accountability, current documentation, and tested escalation paths across internet, infrastructure, and cloud systems.

Businesses that tighten those controls reduce downtime, protect staff productivity, and avoid the recurring cycle where every vendor claims the issue belongs to someone else. That is the difference between reacting to outages and actually managing operations.