Reno Logistics Hub

A halt in operations is often the visible symptom of a compliance gap, not the root problem itself. In logistics hubs across Reno, issues like missing controls, weak documentation, and loose access policies can quietly undermine backup and disaster recovery until work stops or risk spikes. The fix usually starts with reviewing controls, access, and recovery steps before they are tested under pressure.

This case study reflects real breakdown patterns documented across 300+ regional IT incidents. Names and identifying details have been modified for confidentiality, while technical and financial data remain accurate to the original events.

Why Compliance Gaps Stop Logistics Operations

When operations stop in a Reno logistics environment, the outage is usually only the final symptom. The deeper issue is often that backup, access control, and recovery documentation no longer match the way the business actually runs. In distribution and freight settings, systems change quickly: shared folders become workflow systems, temporary user accounts stay active too long, and line-of-business data gets copied into locations that are not covered by the same retention or recovery controls. That is where the compliance gap shows up in practical terms.

We see this most often when internal IT documentation falls behind changing requirements tied to CMMC, HIPAA, customer contract obligations, or internal audit expectations. A logistics hub may still have backups running, but if restore priorities are undefined, access reviews are incomplete, or recovery testing has not been documented, the business is exposed. That is why organizations dealing with this pattern usually need backup and disaster recovery support in Reno that aligns technical recovery with actual operational dependencies. In cases like Theodore’s, the real failure is not just data availability; it is the absence of controlled, documented recovery under pressure.

- Access control drift: Shared credentials, stale permissions, and undocumented exceptions make it difficult to restore the right users to the right systems during an outage.

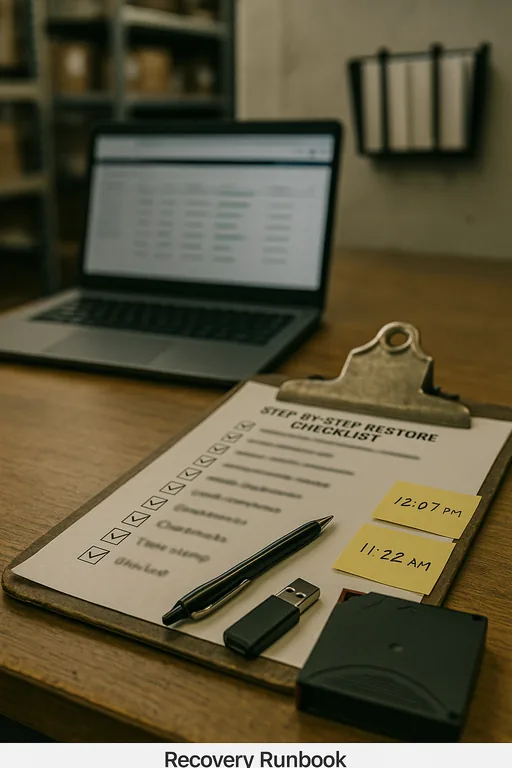

- Documentation gaps: Recovery runbooks often miss newer applications, revised file paths, or updated vendor dependencies, which slows restoration and increases error rates.

- Unverified backups: Backup jobs may report success while excluding critical workflow data, corrupted files, or system states needed for a clean recovery.

- Regulatory mismatch: Requirements can change faster than internal teams update retention, encryption, audit logging, and recovery evidence.
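
The unverified-backup pattern in the list above can be checked mechanically. The following is a minimal sketch, assuming the backup tool can export the list of root folders it protects; the function name `uncovered_paths` and every path shown are hypothetical placeholders, not settings from any specific product.

```python
from pathlib import PurePosixPath

def uncovered_paths(required, backup_roots):
    """Return the required paths that no backup root contains."""
    roots = [PurePosixPath(r) for r in backup_roots]
    missing = []
    for path in required:
        p = PurePosixPath(path)
        # A path counts as covered if it equals a root or sits beneath one.
        if not any(p == root or root in p.parents for root in roots):
            missing.append(path)
    return missing

# Hypothetical example: the billing export lives outside the backed-up roots.
required = ["/data/dispatch/db", "/data/billing/exports", "/shares/ops/labels"]
backup_roots = ["/data/dispatch", "/shares/ops"]
print(uncovered_paths(required, backup_roots))  # → ['/data/billing/exports']
```

A job can report success every night and still leave this list non-empty, which is why coverage should be compared against the systems the business depends on rather than against the job status alone.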

How to Close the Gap Before the Next Outage

The fix starts with treating compliance and recovery as one operating discipline, not two separate projects. First, identify which systems actually keep the logistics floor moving: shipment records, dispatch platforms, inventory databases, label printing, billing exports, and remote access for supervisors or third-party partners. Then map those systems to backup scope, restore order, retention requirements, and access controls. If that map does not exist in writing, recovery will be slower than expected.
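
One way to keep that map in writing is as a small structured record per system. The sketch below is illustrative only: the `SystemEntry` fields, the owner labels, and the retention values are assumptions chosen for the example, not recommended settings.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class SystemEntry:
    name: str
    restore_order: Optional[int]    # None means no priority has been assigned
    in_backup_scope: bool
    retention_days: Optional[int]   # None means no retention period is recorded
    access_owner: Optional[str]     # None means nobody owns access reviews

def find_gaps(entries):
    """Return {system name: [problems]} for entries with missing controls."""
    gaps = {}
    for e in entries:
        problems = []
        if e.restore_order is None:
            problems.append("no restore priority")
        if not e.in_backup_scope:
            problems.append("not in backup scope")
        if e.retention_days is None:
            problems.append("no retention period")
        if e.access_owner is None:
            problems.append("no access owner")
        if problems:
            gaps[e.name] = problems
    return gaps

def restore_sequence(entries):
    """Return system names in restore order, skipping unranked systems."""
    ranked = [e for e in entries if e.restore_order is not None]
    return [e.name for e in sorted(ranked, key=lambda e: e.restore_order)]

# Hypothetical hub: the billing export was never added to the map.
hub = [
    SystemEntry("inventory database", 2, True, 365, "it-admin"),
    SystemEntry("dispatch platform", 1, True, 90, "ops-lead"),
    SystemEntry("billing exports", None, False, None, None),
]
print(restore_sequence(hub))  # → ['dispatch platform', 'inventory database']
print(find_gaps(hub))
```

Anything reported by `find_gaps` is a system that would be restored late, or not at all, during a real event.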

From there, the practical work is straightforward but detailed. We typically validate backup coverage, test restores against current production data, review privileged access, and confirm that evidence exists for policy enforcement and recovery testing. Businesses that need stronger day-to-day control often benefit from managed backup solutions for Reno operations so backup monitoring, retention review, and restore validation are not left to chance. For a useful external benchmark, CISA’s ransomware and recovery guidance remains a practical reference because it ties backup integrity, access hardening, and incident response together.

- Backup validation: Run scheduled restore tests against current operational data sets, not just sample files, and document recovery time against business expectations.

- MFA and privileged access review: Reduce standing administrative access, remove stale accounts, and require stronger authentication for remote and elevated sessions.

- Recovery runbooks: Maintain step-by-step restoration procedures for dispatch, billing, file shares, and line-of-business applications with named owners and escalation paths.

- Retention and evidence controls: Align retention periods, audit logs, and test records with contractual and regulatory obligations so recovery is defensible as well as functional.
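
The backup validation step above can be partly automated. The following is a rough sketch, assuming a point-in-time snapshot of the source data is available to compare against (live production files change after the backup runs, so comparing against them directly would produce false mismatches). The function names and the sample files are hypothetical.

```python
import hashlib
import tempfile
from pathlib import Path

def sha256_of(path):
    """Hash a file in chunks so large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()

def verify_restore(snapshot_dir, restored_dir):
    """Return (relative path, problem) pairs for missing or altered files."""
    snapshot_dir, restored_dir = Path(snapshot_dir), Path(restored_dir)
    problems = []
    for src in sorted(snapshot_dir.rglob("*")):
        if not src.is_file():
            continue
        rel = src.relative_to(snapshot_dir)
        restored = restored_dir / rel
        if not restored.is_file():
            problems.append((rel.as_posix(), "missing"))
        elif sha256_of(src) != sha256_of(restored):
            problems.append((rel.as_posix(), "content differs"))
    return problems

def within_target(elapsed_minutes, target_minutes):
    """True if the measured restore time met the agreed recovery target."""
    return elapsed_minutes <= target_minutes

# Hypothetical test restore in which the billing file was never restored.
with tempfile.TemporaryDirectory() as tmp:
    snapshot = Path(tmp) / "snapshot"
    restored = Path(tmp) / "restored"
    snapshot.mkdir()
    restored.mkdir()
    (snapshot / "dispatch.csv").write_text("load,door\n1,A\n")
    (snapshot / "billing.csv").write_text("invoice,total\n7,120\n")
    (restored / "dispatch.csv").write_text("load,door\n1,A\n")
    print(verify_restore(snapshot, restored))  # → [('billing.csv', 'missing')]
```

Saving the output of each run, together with the measured restore time, is one simple way to build the test records that the retention and evidence bullet calls for.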

Field Evidence: Restoring Control at a Reno Freight Corridor Site

In one Northern Nevada case, a multi-shift operation near the airport corridor had backups in place but no reliable proof that the right data could be restored in the right order. Before remediation, supervisors relied on tribal knowledge, file share permissions had expanded over time, and a line-of-business export used by billing was missing from the documented recovery sequence. During a disruption, staff could access some files but could not resume normal dispatch timing, which created a backlog across receiving and outbound scheduling.

After a structured review, the business narrowed backup scope to critical systems, corrected retention settings, documented restore priorities, and added recurring validation tied to its compliance obligations. It also adopted a formal disaster recovery planning process for multi-site operations so warehouse, office, and remote access dependencies were tested together rather than separately. In a later recovery exercise, the site restored core dispatch and billing functions in under 90 minutes, compared with an earlier estimated recovery window of more than five hours.

- Result: Recovery time dropped by roughly 70 percent, backup exceptions were identified before production impact, and audit documentation was available for internal review.
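
The 70 percent figure follows from the two recovery windows reported above. As a quick check, taking the boundary values of five hours (300 minutes) before and 90 minutes after, which makes 70 percent a lower bound on the improvement:

```python
def percent_reduction(before_minutes, after_minutes):
    """Percentage drop in recovery time from the earlier to the later window."""
    return 100 * (before_minutes - after_minutes) / before_minutes

print(percent_reduction(300, 90))  # → 70.0
```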

Compliance and Recovery Control Reference for Logistics Hubs

Scott Morris is an experienced IT and cybersecurity professional with 16 years of hands-on experience in managed technology services. He specializes in backup and disaster recovery and has spent his career building practical recovery, security, and operational continuity processes for businesses across Reno, Sparks, Carson City, and Northern Nevada.

Local Support in Reno, Sparks, Carson City, and Northern Nevada

Reno logistics operations often depend on fast coordination between warehouse floors, dispatch teams, billing staff, and remote users. From our office on Ryland Street, we regularly support businesses across Reno's industrial corridors, including Longley Lane and nearby airport-area facilities, where even a short interruption can affect receiving schedules, outbound timing, and customer reporting. Travel time matters, but so does having recovery documentation and control reviews in place before a site issue turns into a longer operational stop.

Operational Takeaway for Reno Logistics Teams

If a logistics hub in Reno suddenly stops moving, the real problem may be a control failure that has been building for months. Missing documentation, unreviewed permissions, incomplete backup scope, and untested recovery steps create the kind of compliance gap that only becomes visible when dispatch, billing, or inventory work is interrupted.

The practical response is to review recovery controls before the next event, not after. When backup coverage, access policy, retention, and restore testing are aligned with actual business operations, downtime becomes shorter, audit exposure is easier to manage, and the business can recover in a controlled way instead of improvising under pressure.