Reno Encryption

What looks like a one-off encryption incident is often tied to compliance gaps. In manufacturing plants, missing controls, weak documentation, and loose access policies can turn into audit findings, fines, and operational disruption long before anyone notices the warning signs. Closing those gaps early makes both disaster recovery planning and the recovery itself far more resilient.

This case study reflects real breakdown patterns documented across 300+ regional IT incidents. Names and identifying details have been modified for confidentiality, while technical and financial data remain accurate to the original events.

Why Encrypted Files Usually Point to a Compliance Gap

When files are encrypted inside a manufacturing environment, the visible outage is only the surface problem. In Washoe County plants, we often find that the real failure sits underneath: outdated control documentation, excessive user permissions, unverified backups, and recovery plans that do not match current regulatory expectations. That is where the compliance gap shows up. If internal IT documentation has not kept pace with changing requirements such as CMMC obligations for defense-related work or HIPAA handling rules tied to employee or occupational health data, the business may be exposed long before the encryption event is discovered.

The operational risk is straightforward. A plant can still be producing parts, shipping orders, and passing informal internal checks while critical systems remain poorly governed. Then one compromised account, one unpatched endpoint, or one shared credential on the production floor turns into encrypted file shares and a scramble to identify what can be restored. That is why structured disaster recovery planning matters for Northern Nevada plants. It ties technical recovery to documented business priorities, so the response is not improvised under pressure. In cases like this one, the missing piece is rarely a single tool. It is the absence of enforced controls that connect security, compliance, and plant operations.

- Access governance: Shared folders, legacy accounts, and broad permissions allow one compromised credential to affect engineering files, purchasing records, and production documentation at the same time.

- Documentation drift: Internal policies often lag behind real system changes, especially when regulations evolve faster than the team can update procedures and evidence.

- Backup assumptions: Many plants have backups running, but they have not validated restore order, retention, or integrity against current operational and compliance needs.

- Audit exposure: Once encrypted files affect controlled records, the issue can expand from downtime into reportable compliance findings, delayed customer commitments, and outside review.
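The access governance item above lends itself to a simple, repeatable check. The following is a minimal sketch, assuming account data has already been exported from the directory service into plain records; the field names, the `flag_accounts` helper, and the 90-day idle threshold are illustrative assumptions, not values from any specific plant or standard.

```python
from datetime import date, timedelta


def flag_accounts(accounts, today, max_idle_days=90):
    """Return (name, reasons) pairs for accounts that deserve a review.

    accounts: list of dicts with hypothetical keys
      'name' (str), 'last_login' (date or None), 'shared' (bool).
    max_idle_days: assumed review threshold; adjust to local policy.
    """
    cutoff = today - timedelta(days=max_idle_days)
    findings = []
    for acct in accounts:
        reasons = []
        # Never logged in, or idle longer than the threshold.
        if acct["last_login"] is None or acct["last_login"] < cutoff:
            reasons.append("stale")
        # Shared credentials make it hard to attribute activity to a person.
        if acct["shared"]:
            reasons.append("shared credential")
        if reasons:
            findings.append((acct["name"], reasons))
    return findings


if __name__ == "__main__":
    sample = [
        {"name": "jsmith", "last_login": date(2024, 5, 20), "shared": False},
        {"name": "floor-kiosk", "last_login": date(2024, 5, 30), "shared": True},
        {"name": "old-vendor", "last_login": date(2023, 11, 1), "shared": False},
    ]
    for name, reasons in flag_accounts(sample, today=date(2024, 6, 1)):
        print(name, reasons)
```

Keeping the output of each run alongside the date it was produced also gives the review a paper trail, which helps with the documentation drift described above.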

How to Close the Gap Before the Next Recovery Event

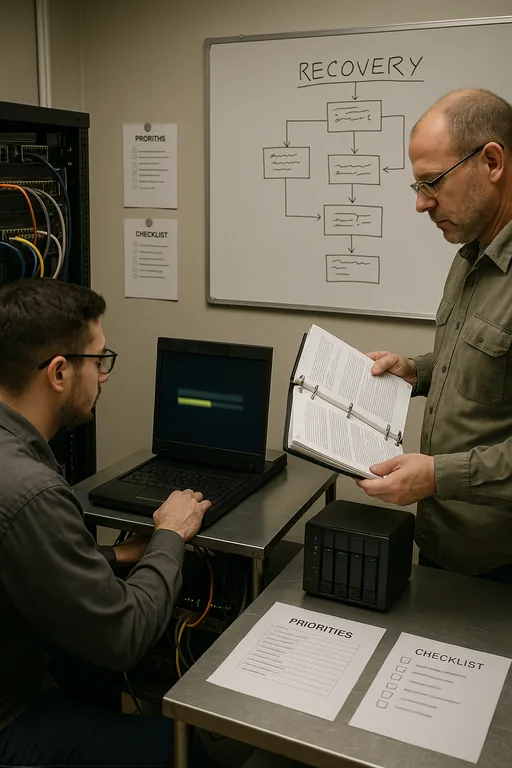

The fix is not just decrypting or restoring files. The practical response starts with containment, then moves into control correction. We typically isolate affected endpoints, review authentication logs, confirm whether lateral movement reached file servers or line-of-business systems, and map impacted data to business functions. From there, the recovery plan has to be rebuilt around actual plant priorities: production scheduling, quality records, shipping documents, ERP access, and any regulated data sets that require retention or reporting.
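One way to make "rebuilt around actual plant priorities" concrete is to write the recovery sequence down as data. The sketch below is illustrative only: the system names, their dependencies, and the priority numbers are assumptions loosely based on the functions listed above, and the `recovery_order` helper is hypothetical. It uses Python's standard `graphlib` module so that a system is never scheduled before something it depends on, and picks the highest-priority system whenever more than one is ready.

```python
import heapq
from graphlib import TopologicalSorter

# Hypothetical dependency map: each system lists what must be restored first.
DEPENDS_ON = {
    "file_server": set(),
    "erp": {"file_server"},
    "production_scheduling": {"erp"},
    "quality_records": {"file_server"},
    "shipping_documents": {"erp"},
}

# Assumed business priority; lower number means restore sooner.
PRIORITY = {
    "file_server": 0,
    "erp": 1,
    "production_scheduling": 2,
    "quality_records": 3,
    "shipping_documents": 4,
}


def recovery_order(depends_on, priority):
    """Order systems so dependencies come first, then by business priority."""
    sorter = TopologicalSorter(depends_on)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    ready, order = [], []
    while sorter.is_active():
        # Collect every system whose dependencies are already restored.
        for name in sorter.get_ready():
            heapq.heappush(ready, (priority.get(name, 99), name))
        # Restore the most important ready system next.
        _, name = heapq.heappop(ready)
        order.append(name)
        sorter.done(name)
    return order


if __name__ == "__main__":
    for step, name in enumerate(recovery_order(DEPENDS_ON, PRIORITY), start=1):
        print(step, name)
```

The value is less in the code than in the artifact: a sequence that has been agreed on before an incident removes one decision from a high-pressure situation.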

Long-term resilience comes from documented controls that can be tested. That includes periodic access reviews, MFA hardening for remote and administrative accounts, backup immutability where appropriate, and restore testing tied to recovery time objectives. Plants that need stronger evidence and retention discipline usually benefit from compliance-focused backup continuity controls so backup success is measured by recoverability, not just job completion. For a practical control baseline, the CISA ransomware guidance remains useful because it ties prevention, response, and recovery into one operational framework.
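Measuring backups by recoverability rather than job completion can be as simple as recording each restore test against its objective. This is a minimal sketch under stated assumptions: the `RestoreTest` record, its field names, and the sample figures are hypothetical and would come from a plant's own test logs.

```python
from dataclasses import dataclass


@dataclass
class RestoreTest:
    system: str
    rto_minutes: int        # documented recovery time objective
    restore_minutes: float  # measured duration of the last test restore
    data_verified: bool     # restored data passed an integrity check


def failing_tests(tests):
    """Return (system, reason) pairs for restore tests that missed the objective."""
    failures = []
    for t in tests:
        if not t.data_verified:
            # A fast restore of unusable data is still a failed restore.
            failures.append((t.system, "restored data failed verification"))
        elif t.restore_minutes > t.rto_minutes:
            reason = (
                f"restore took {t.restore_minutes:g} min "
                f"against a {t.rto_minutes} min objective"
            )
            failures.append((t.system, reason))
    return failures


if __name__ == "__main__":
    results = [
        RestoreTest("erp", 240, 180, True),
        RestoreTest("file_server", 60, 95, True),
        RestoreTest("quality_records", 120, 30, False),
    ]
    for system, reason in failing_tests(results):
        print(f"{system}: {reason}")
```

A backup job that reports success would pass none of these checks on its own, which is the distinction the paragraph above draws.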

- Privilege reduction: Remove stale accounts, separate admin access, and limit file share permissions by role and plant function.

- Backup validation: Test restores against current production data sets, not just sample files, and document recovery order for critical systems.

- MFA hardening: Enforce phishing-resistant access where possible for remote access, VPN, cloud admin portals, and privileged accounts.

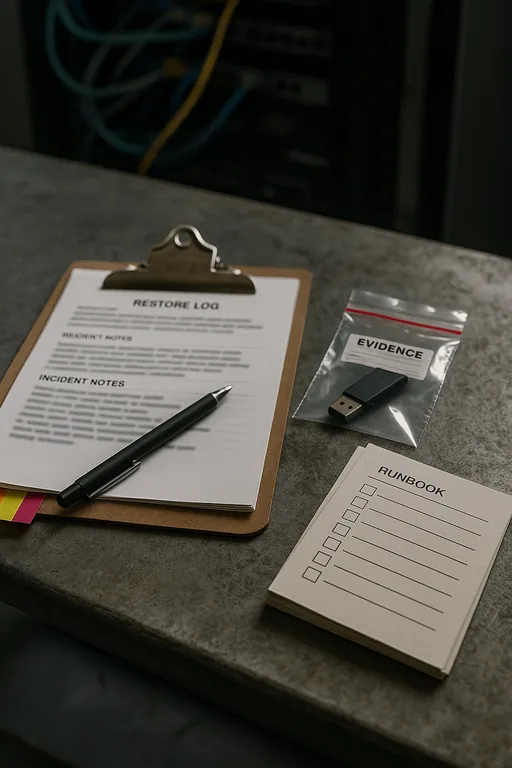

- Evidence management: Keep current policies, restore logs, access reviews, and incident records so compliance proof exists before an audit request arrives.
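The backup validation item in the list above can be sketched as a comparison between a restored directory and a checksum manifest recorded while the data was known to be good. This is an illustration, not a description of any particular backup product: the manifest format (relative path mapped to a SHA-256 hex digest) and the `verify_restore` helper are assumptions.

```python
import hashlib
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so large production files do not fill memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_restore(restore_root, manifest):
    """Compare restored files with a manifest of expected SHA-256 digests.

    manifest: dict of relative path -> hex digest (hypothetical format).
    Returns a dict listing files that are missing or whose contents differ.
    """
    root = Path(restore_root)
    missing, mismatched = [], []
    for rel_path, expected in sorted(manifest.items()):
        candidate = root / rel_path
        if not candidate.is_file():
            missing.append(rel_path)
        elif sha256_of(candidate) != expected:
            mismatched.append(rel_path)
    return {"missing": missing, "mismatched": mismatched}
```

Saving the returned dictionary with a timestamp after each test restore produces the kind of restore log the evidence management item calls for.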

Field Evidence: Restoring Operations Without Repeating the Same Failure

We have seen this pattern in industrial corridors around Reno and Sparks where a plant believed it had adequate protection because backups existed and antivirus was active. Before remediation, the environment usually showed broad shared access, inconsistent endpoint standards, and no clear ranking of which systems had to come back first. After remediation, the business had a documented recovery sequence, tested restore points, segmented access for production and office users, and clearer evidence for outside review.

One Northern Nevada operation with mixed office and warehouse workflows reduced restore decision time from several hours to under 45 minutes after formalizing system priorities and validating backup sets monthly. That same environment also used structured backup and recovery programs for multi-site operations to separate critical records from general file storage, which made post-incident review cleaner and reduced unnecessary downtime during recovery.

- Result: Faster restoration of priority systems, cleaner audit evidence, and a measurable drop in unplanned downtime tied to file access incidents.

Compliance and Recovery Control Reference for Manufacturing Plants

Scott Morris is an IT and cybersecurity professional with 16 years of hands-on experience in managed technology services. He specializes in disaster recovery planning and recovery and has spent his career building practical recovery, security, and operational continuity processes for businesses across Washoe County and Northern Nevada.

Local Support in Washoe County

Manufacturing and industrial businesses in Washoe County often need fast coordination between office systems, plant-floor documentation, backup recovery, and compliance evidence. From our Reno office, the South Virginia Street corridor is only a short drive, which helps when an encrypted-file event needs both immediate triage and a disciplined review of the controls that allowed it to happen.

Closing the Compliance Gap Before It Becomes a Repeat Incident

Encrypted files in a manufacturing plant are rarely just a malware problem. In most cases, they expose weak access control, incomplete documentation, and recovery processes that were never tested against current compliance obligations. That is why the right response includes both technical restoration and a control review that stands up operationally and during outside scrutiny.

For Washoe County manufacturers, the practical takeaway is simple: if recovery steps, backup evidence, and access policies are not current, the next outage will be harder to contain and more expensive to explain. Closing the compliance gap early improves recovery speed, reduces audit friction, and keeps plant operations from depending on guesswork.