Network, Server & Cloud Management in Dayton, Nevada

Network, server, and cloud management keeps Dayton businesses operating when users, applications, and data depend on multiple systems working together. The real value is steady uptime, controlled access, faster issue response, and fewer surprises during outages or growth.

At 9:12 a.m., Anthony T. discovered that a neglected core switch had dropped a Dayton office off its ERP server and cloud inventory sync; shipping stopped for the day, technicians rebuilt the network from old notes, and the interruption cost $71,500.

The scenario above is based on a redacted real-world business IT incident pattern. Identifying details have been changed for privacy, but the disruption sequence and cost impact remain realistic.

The guidance below is intended to help business leaders evaluate infrastructure management and ask better operational questions. This is general technical information; the right strategy depends on the specific network environment and its compliance obligations.

Network, server, and cloud management is the coordinated operation of the systems that move traffic, run business applications, store data, authenticate users, and connect local offices to Microsoft 365, line-of-business platforms, and remote staff. In practice, this means routers, firewalls, switches, wireless, virtualization hosts, servers, cloud identities, patching, logs, and escalation workflows are managed as one operating environment rather than as isolated tools.

For many Dayton businesses, the environment is hybrid. A file server or line-of-business application may still sit onsite, while email, collaboration, identity, and some storage extend into the cloud. That mix can work well, but it often breaks down when nobody owns the handoff between the office network, the server platform, and the cloud tenant. That is why businesses often pair infrastructure oversight with managed IT support in Dayton and, when sensitive records are involved, broader compliance and risk management planning.

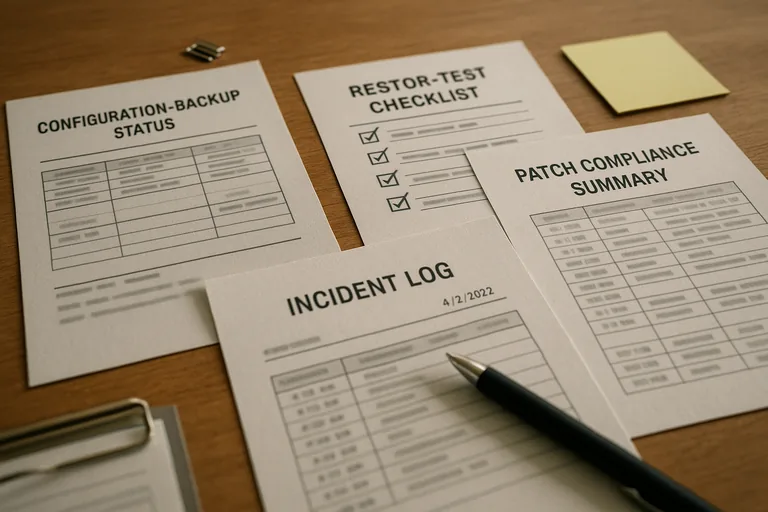

A common failure point is assuming uptime comes from buying reliable hardware. Mature environments depend more on disciplined operations: current asset inventory, approved changes, patch compliance, configuration backups for network equipment, tested failover paths, documented admin access, and monitoring that tells someone what happened before users start guessing. Without that baseline, minor warnings turn into avoidable outages.

What does network, server, and cloud management actually include for a Dayton business?

It includes the full lifecycle of the systems behind daily work: internet edge devices, firewalls, switches, wireless coverage, servers, virtualization platforms, storage, cloud tenants, user identities, permissions, patching, certificates, logs, and vendor coordination. What usually separates a stable environment from a fragile one is not the number of tools but whether those pieces are managed together. If the firewall is updated, the cloud admin accounts are reviewed, the server storage is monitored, and the application vendor knows where the dependencies sit, interruptions tend to stay smaller. If those areas are handled by different people with no shared documentation, even a routine change can break authentication, printing, file access, or remote work.

Why does this matter to uptime, security, and business continuity?

If a cloud admin account is compromised, a virtual host runs out of storage, or a network device fails without a recent configuration backup, operations can stop even though the internet itself is still up. Infrastructure management matters because the business consequence is rarely technical inconvenience alone; it can affect payroll processing, scheduling, shipping, customer response times, and exposure of protected information. For Nevada businesses handling personal data, Nevada Revised Statutes (NRS) Chapter 603A is relevant because it requires reasonable security measures and creates legal obligations when protected data is exposed. In business terms, stable network, server, and cloud management supports both continuity and accountability.

Which risks does competent management reduce before they become outages?

Competent management reduces hidden concentrations of risk. These include flat networks where every device can talk to every other device, stale administrator accounts that were never removed, unsupported server operating systems, cloud tenants with inconsistent multifactor enforcement, and switches or firewalls running for years without verified configuration backups. As remote access and cloud platforms expand, guidance from NIST SP 800-207, Zero Trust Architecture, matters because it treats every access request as something to verify, not assume. In practice, that translates into segmented access, limited privileges, device-aware controls, and fewer paths for a compromised account to move laterally and disrupt operations.
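As a rough illustration of how two of these risks can be surfaced, the sketch below flags administrator accounts that have not signed in recently or that lack multifactor enforcement. The `AdminAccount` record, the `flag_risky_admins` helper, and the 90-day idle threshold are hypothetical choices made for this example; a real review would pull the data from the identity platform's own reports.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class AdminAccount:
    # Hypothetical record; real data would come from an identity platform export.
    name: str
    last_sign_in: date
    mfa_enforced: bool


def flag_risky_admins(accounts, today, max_idle_days=90):
    """Return (name, reasons) pairs for accounts that look stale or lack MFA.

    The 90-day default is an assumed threshold, not a standard.
    """
    findings = []
    for acct in accounts:
        reasons = []
        if today - acct.last_sign_in > timedelta(days=max_idle_days):
            reasons.append("stale")
        if not acct.mfa_enforced:
            reasons.append("no_mfa")
        if reasons:
            findings.append((acct.name, reasons))
    return findings


if __name__ == "__main__":
    sample = [
        AdminAccount("admin-old", date(2023, 11, 1), True),
        AdminAccount("admin-current", date(2024, 5, 28), True),
        AdminAccount("svc-backup", date(2024, 5, 30), False),
    ]
    for name, reasons in flag_risky_admins(sample, today=date(2024, 6, 1)):
        print(name, reasons)
```

A list like this is only a starting point; each flagged account still needs an owner to decide whether it is removed, restricted, or documented as an exception.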

How does strong network, server, and cloud management work in practice?

In mature environments, monitoring watches interface errors, storage growth, failed services, certificate expiry, abnormal sign-in activity, backup status, and resource pressure on virtualization hosts, but the tool is only part of the answer. A competent process also defines who owns the alert, how severity is assigned, what gets checked first, when escalation occurs, and what gets documented afterward. In one routine review, for example, repeated Kerberos and time-drift alerts on a line-of-business server led to a closer look at the host; the underlying issue was an old snapshot consuming storage and slowing the virtual platform, and no change record explained why it still existed. The lesson was straightforward: monitoring works only when it is paired with change discipline, cleanup procedures, and regular health checks across both servers and the platform underneath them.
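The ownership and escalation rules described above can be written down as plainly as a lookup table. The following is a minimal sketch under assumed values: the alert kinds, team names, severity levels, and escalation windows in `ROUTING` and `ESCALATE_AFTER_MINUTES` are hypothetical and would be set by each organization, not taken from any particular monitoring product.

```python
from dataclasses import dataclass

# Hypothetical routing table: alert kind -> (severity, owner).
ROUTING = {
    "storage_growth": ("high", "server team"),
    "backup_failed": ("high", "server team"),
    "abnormal_sign_in": ("high", "security lead"),
    "certificate_expiry": ("medium", "server team"),
    "interface_errors": ("medium", "network team"),
}

# Assumed escalation windows, in minutes without acknowledgement.
ESCALATE_AFTER_MINUTES = {"high": 15, "medium": 60, "low": 240}


@dataclass
class Alert:
    kind: str
    source: str
    minutes_unacknowledged: int


def triage(alert):
    """Assign severity and owner, and decide whether the alert should escalate."""
    # Unknown alert kinds fall back to a low-severity service desk ticket.
    severity, owner = ROUTING.get(alert.kind, ("low", "service desk"))
    escalate = alert.minutes_unacknowledged >= ESCALATE_AFTER_MINUTES[severity]
    return {
        "source": alert.source,
        "severity": severity,
        "owner": owner,
        "escalate": escalate,
    }


if __name__ == "__main__":
    print(triage(Alert("storage_growth", "virtual-host-01", 20)))
    print(triage(Alert("interface_errors", "core-switch", 10)))
```

The point of the sketch is that every alert lands with a named owner and a deadline; the specific thresholds matter less than having them written down and reviewed.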

How can a business verify that management is being done properly?

A competent provider should be able to show current network diagrams, asset inventory records, patch compliance reports, configuration backup status for firewalls and switches, alert escalation logs, access review records, and documented recovery test results for critical workloads. In practice, the issue is rarely the tool alone; it is the process around it. If leadership asks who reviewed a failed sign-in alert, when a server was last patched, whether the internet failover was tested, or how a switch would be rebuilt after failure, the answer should come from records, not memory. Real oversight leaves evidence, and that evidence is what makes accountability visible during audits, outages, and vendor disputes.
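One way to make "records, not memory" concrete is to check how old each piece of evidence is. The sketch below assumes a simple mapping from evidence type to the date of its most recent record; the evidence names and the maximum ages in `MAX_AGE_DAYS` are illustrative assumptions, not regulatory requirements.

```python
from datetime import date

# Assumed freshness limits in days; adjust to the organization's own policy.
MAX_AGE_DAYS = {
    "config_backup": 30,
    "patch_report": 35,
    "access_review": 90,
    "recovery_test": 180,
}


def stale_evidence(records, today):
    """Return (evidence_type, problem) pairs for missing or outdated records.

    `records` maps an evidence type to the date of its latest record, or None.
    """
    gaps = []
    for kind, limit in MAX_AGE_DAYS.items():
        last = records.get(kind)
        if last is None:
            gaps.append((kind, "missing"))
            continue
        age = (today - last).days
        if age > limit:
            gaps.append((kind, f"{age} days old"))
    return gaps


if __name__ == "__main__":
    latest = {
        "config_backup": date(2024, 5, 20),
        "patch_report": date(2024, 3, 1),
        "access_review": None,
        "recovery_test": date(2024, 1, 15),
    }
    for kind, problem in stale_evidence(latest, today=date(2024, 6, 1)):
        print(kind, problem)
```

An empty result does not prove the controls work, but a non-empty one shows exactly where the evidence trail has gone cold.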

When does weak implementation become dangerous?

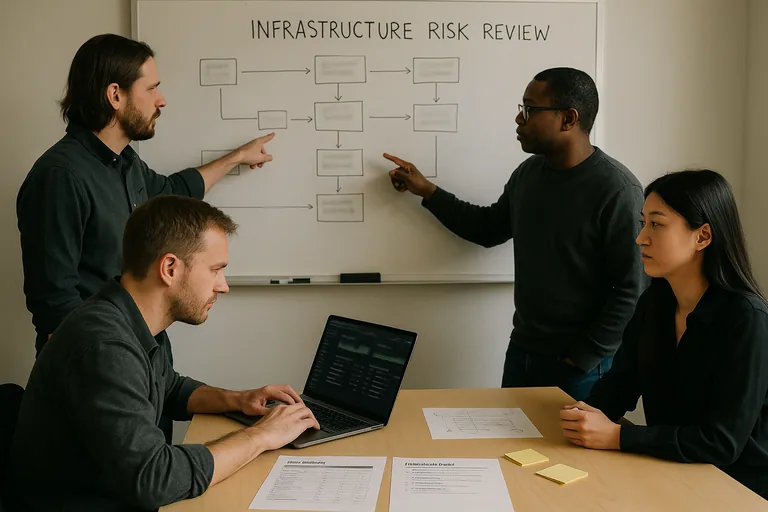

What should leadership do next if the environment feels uncertain?

Leadership should ask for a short infrastructure risk review that identifies critical systems, single points of failure, unsupported assets, privileged accounts, monitoring gaps, and recovery dependencies. The goal is not a large project list; it is a prioritized operating picture showing what could interrupt payroll, scheduling, shipping, or customer service first and what evidence exists that the controls are working. For organizations that need ongoing oversight, comparing that review against documented Dayton infrastructure management support can help determine whether the current environment is professionally maintained or simply continuing until the next incident exposes the gaps.