Network, Server & Cloud Management

Network, server and cloud management keeps business systems available, patched, monitored and recoverable so staff can work, data stays protected and infrastructure problems are found early instead of during an outage or security event.

On a Monday shipping cutoff, Aiden X.'s distributor lost its order system when stale hypervisor snapshots filled a datastore and crashed two virtual servers. Nine hours of halted pick-pack-ship work, emergency recovery, and missed same-day orders drove the operational loss to $55,600.

The scenario above is based on a redacted real-world business IT incident pattern. Identifying details have been changed for privacy, but the disruption sequence and cost impact remain realistic.

Scott Morris is a managed IT and cybersecurity professional with 16+ years of experience helping businesses manage and secure networks, servers, cloud platforms, backup strategy, recovery readiness, and the operational controls that keep infrastructure stable under pressure. That background is directly relevant to Network, Server & Cloud Management because weak environments rarely fail for one reason alone; they usually break where monitoring, patching, access control, documentation, and response ownership have drifted apart. His work is grounded in practical risk reduction, business continuity, secure infrastructure management, and operational resilience for business technology environments, including Reno and Sparks organizations that need stable and compliant systems.

This article is intended to help decision-makers understand common infrastructure risks, controls, and verification steps. It is general technical information; the right strategy depends on the specific network environment and its compliance obligations.

At the operational level, network, server and cloud management means owning the condition of the systems that move data, host applications, authenticate users, and carry recovery traffic when something breaks. It covers firewalls, switches, wireless, VPNs, virtual hosts, storage, directory services, line-of-business servers, and cloud administration, along with the monitoring and documentation that tie those layers together.

- Visibility: Asset inventory, monitoring, and log collection show what exists, what is failing, and who owns response.

- Discipline: Patch cycles, change control, account reviews, and configuration baselines reduce quiet drift that later becomes downtime or exposure.

- Recovery: Backups, replication, restore testing, and failover procedures determine whether disruption stays manageable or becomes a business stoppage.

A common failure point is assuming these layers are separate. In practice, the issue is rarely the tool alone; it is the process around it. A switch may be healthy while DNS is stale, cloud permissions are excessive, or a routine reboot exposes an application dependency nobody documented. That is why mature environments usually combine infrastructure oversight with managed IT services that include escalation workflows, change records, and accountability rather than only reactive break-fix support.

What does network, server and cloud management actually include?

It includes the connective infrastructure between users and applications: firewalls, switching, wireless, internet circuits, VPNs, domain services, virtualization hosts, storage, certificates, service accounts, cloud tenancy settings, and the servers that run core business systems. It also includes lifecycle work such as capacity planning, firmware and operating system patching, configuration review, vendor coordination, and documented change control. Helpdesk support may solve symptoms, but this discipline owns the health of the environment underneath the ticket volume.

Why does this matter to uptime, security and cost?

Which risks does competent management reduce?

Competent management reduces three recurring risks: unplanned downtime from single points of failure, security exposure from over-trusted access paths, and recovery delays caused by undocumented dependencies. Guidance from NIST SP 800-207 Zero Trust Architecture matters here because modern environments cannot assume a user or device is safe simply because it is on the internal network or connected through a VPN. In practice, that means segmenting sensitive systems, limiting administrative reach, reviewing service account use, and applying conditional access so one stolen credential does not become unrestricted lateral movement across servers and cloud resources.
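The idea that network location alone should not grant access can be sketched as a small policy check. This is an illustration under stated assumptions, not something NIST SP 800-207 prescribes: the segment names, the `ALLOWED_PATHS` table, and the `evaluate` function are all hypothetical, and in a real environment these decisions live in firewalls and conditional access policies rather than in a script.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    user: str
    mfa_passed: bool
    device_compliant: bool
    source_segment: str   # hypothetical labels, e.g. "user-lan", "vpn", "mgmt"
    target_segment: str   # hypothetical labels, e.g. "servers", "finance"
    is_admin_action: bool


# Hypothetical segmentation policy: (source, target) pairs that may communicate.
ALLOWED_PATHS = {
    ("user-lan", "servers"),
    ("vpn", "servers"),
    ("mgmt", "servers"),
    ("mgmt", "finance"),
}


def evaluate(request: AccessRequest) -> tuple[bool, str]:
    """Return (allowed, reason). Every check must pass; being on the VPN
    or the internal LAN is never sufficient on its own."""
    if not request.mfa_passed:
        return False, "MFA not satisfied"
    if not request.device_compliant:
        return False, "device not compliant"
    if (request.source_segment, request.target_segment) not in ALLOWED_PATHS:
        return False, "segment path not permitted"
    if request.is_admin_action and request.source_segment != "mgmt":
        return False, "admin actions require the management segment"
    return True, "allowed"


if __name__ == "__main__":
    stolen = AccessRequest("jdoe", True, True, "vpn", "finance", False)
    print(evaluate(stolen))
```

In this sketch a valid credential connected over the VPN still cannot reach the finance segment, which is the lateral-movement limit described above.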

How does it work in practice day to day?

In mature environments, day-to-day management runs on routine rather than heroics: health checks, capacity alerts, patch windows, configuration backups, ticket-linked changes, and clear escalation paths when thresholds are crossed. During a routine infrastructure review, a monitoring system flagged rising authentication delays on a file server; investigation showed the server was still querying a decommissioned DNS forwarder across a site-to-site tunnel after a firewall change. The lesson was not that DNS failed, but that dependency mapping, post-change validation, and alert triage were missing. Real evidence of competent execution includes current monitoring dashboards, patch compliance reports, change records tied to maintenance windows, and alert escalation logs showing who acknowledged an issue and when.
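A capacity alert of the kind mentioned above can be sketched in a few lines. This is a minimal example that assumes linear growth between the first and last usage sample; the function names and the 80 percent, 90 percent, and 7-day thresholds are illustrative assumptions, not recommended values for any particular platform.

```python
from typing import Optional


def days_until_full(samples: list[tuple[float, float]], capacity_gb: float) -> Optional[float]:
    """Estimate days until a volume fills.

    samples is a list of (day_number, used_gb) pairs ordered by day.
    Growth is approximated as a straight line from the first sample to the last.
    Returns None when usage is flat or shrinking.
    """
    (first_day, first_used), (last_day, last_used) = samples[0], samples[-1]
    if last_day <= first_day:
        raise ValueError("samples must span more than one day")
    growth_per_day = (last_used - first_used) / (last_day - first_day)
    if growth_per_day <= 0:
        return None
    return (capacity_gb - last_used) / growth_per_day


def classify(used_gb: float, capacity_gb: float, days_left: Optional[float],
             warn_pct: float = 80.0, crit_pct: float = 90.0,
             crit_days: float = 7.0) -> str:
    """Map current usage and projected runway to an alert level."""
    used_pct = 100.0 * used_gb / capacity_gb
    if used_pct >= crit_pct or (days_left is not None and days_left <= crit_days):
        return "critical"
    if used_pct >= warn_pct:
        return "warning"
    return "ok"


if __name__ == "__main__":
    history = [(0, 600.0), (10, 700.0)]   # hypothetical datastore samples
    runway = days_until_full(history, capacity_gb=1000.0)
    print(runway, classify(700.0, 1000.0, runway))
```

The projection matters as much as the percentage: a volume at 70 percent that will fill in five days is escalated as critical, while the same volume with a month of runway is not.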

How can a business tell whether its environment is being managed competently?

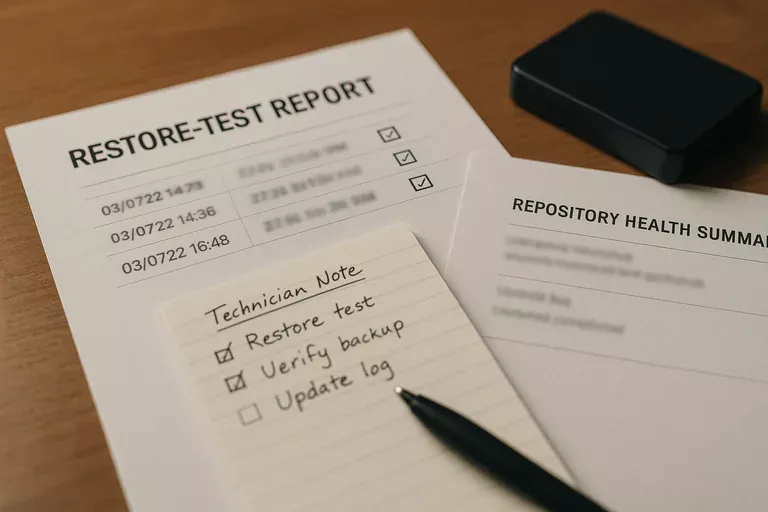

One of the first things experienced IT teams check is whether the environment can produce records on demand. A business evaluating infrastructure management practices should be able to review a current asset inventory, administrative account list, network diagram, patch status by device class, backup restore test results, and documented exception handling for systems that cannot be updated on the normal cycle. If those artifacts do not exist, or if they are months out of date, the environment is probably being managed by memory and habit rather than by accountable process.
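The "records on demand" test can be approximated with a simple freshness check. The sketch below assumes each artifact has a known last-updated date; the artifact names and maximum ages are hypothetical and would be set by the organization's own policy.

```python
from datetime import date

# Hypothetical review intervals, in days, for each evidence artifact.
MAX_AGE_DAYS = {
    "asset_inventory": 30,
    "admin_account_list": 90,
    "network_diagram": 180,
    "patch_status": 30,
    "restore_test": 90,
}


def audit_artifacts(last_updated: dict[str, date], today: date,
                    max_age_days: dict[str, int]) -> dict[str, str]:
    """Return a finding for every artifact that is missing or out of date.

    Artifacts that exist and are within their review interval are omitted.
    """
    findings = {}
    for name, limit in max_age_days.items():
        updated = last_updated.get(name)
        if updated is None:
            findings[name] = "missing"
            continue
        age = (today - updated).days
        if age > limit:
            findings[name] = f"stale: {age} days old, limit {limit}"
    return findings


if __name__ == "__main__":
    records = {
        "asset_inventory": date(2024, 5, 20),
        "network_diagram": date(2023, 11, 1),
        "restore_test": date(2024, 1, 15),
    }
    for artifact, finding in audit_artifacts(records, date(2024, 6, 1), MAX_AGE_DAYS).items():
        print(artifact, "->", finding)
```

An empty result is the goal; a long list of missing or stale entries suggests the environment is running on memory rather than documented process.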

When does weak implementation become dangerous?

Weak implementation becomes dangerous when tools exist without ownership. During incident response, it is common to discover cloud alerts forwarding to an unattended mailbox, switch configurations never archived, local administrator passwords reused across servers, or internet failover installed but never exercised under load. The warning signs are usually visible beforehand: a provider says monitoring is active but cannot show an alert review cadence, patching is reported as enabled but exception lists are uncontrolled, or backups are described as successful even though no recent restore test proves the applications can actually be brought back in the right order.
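Bringing applications back "in the right order" depends on a documented dependency map. As a rough sketch, a recovery sequence can be derived from such a map with a topological sort, here using Python's standard `graphlib` module. The system names and dependencies are invented for illustration, and a real runbook would also capture timing, validation steps, and owners.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical dependency map: each system lists what must be running first.
DEPENDS_ON = {
    "order-app": {"database", "dns"},
    "database": {"storage", "dns"},
    "file-server": {"storage", "directory"},
    "directory": {"dns"},
    "dns": set(),
    "storage": set(),
}


def recovery_order(depends_on: dict[str, set[str]]) -> list[str]:
    """Return systems in an order where every dependency is restored
    before the systems that need it."""
    try:
        return list(TopologicalSorter(depends_on).static_order())
    except CycleError as exc:
        # exc.args[1] holds the list of nodes forming the detected cycle.
        raise ValueError(f"circular dependency: {exc.args[1]}") from exc


if __name__ == "__main__":
    for step, system in enumerate(recovery_order(DEPENDS_ON), start=1):
        print(step, system)
```

Systems with no dependency between them may appear in any relative order, so the output is one valid sequence rather than the only one. A circular dependency raises an error, which is itself a useful finding during recovery planning.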

What should leadership do next?

Leadership usually does not need more tooling first; it needs a clear picture of ownership, evidence, and business priority. Start with the systems that would stop revenue, payroll, shipping, patient care, or client delivery if they failed, then ask whether the current team or managed IT services provider can show who monitors them, how access is reviewed, what the recovery sequence is, and how quickly normal operations can be restored. What usually separates a stable environment from a fragile one is not the hardware brand or cloud logo but the discipline to document, verify, and rehearse.