Reno Logistics Fix

This kind of issue rarely appears all at once. For logistics hubs in Northern Nevada, it usually builds through unclear ownership, overlapping tools, and fragmented support, then surfaces as stalled operations, slower recovery, or greater risk exposure. A more reliable setup starts with clarifying ownership and enforcing cleaner escalation paths.

This case study reflects real breakdown patterns documented across 300+ regional IT incidents. Names and identifying details have been modified for confidentiality, while technical and financial data remain accurate to the original events.

Why Vendor Chaos Stops Logistics Operations

The immediate problem is not usually a single failed device. In most Northern Nevada logistics environments, the real failure is fragmented ownership. Internet service may sit with one provider, VoIP with another, warehouse or dispatch software with a third, endpoint support with a fourth, and backup responsibility with no one clearly accountable. When an outage crosses those boundaries, each vendor points to another layer, and operations stall while internal staff try to coordinate technical triage they were never hired to manage.

We see this often in fast-moving facilities from Reno to Sparks and up toward Lemmon Valley, where shipping deadlines, receiving windows, and billing cutoffs leave little room for confusion. If backup jobs, server roles, and line-of-business application dependencies are not documented under a single operational plan, recovery becomes slower and riskier. That is why many organizations stabilize these environments through backup and recovery programs in Northern Nevada that define ownership, test restoration paths, and map each vendor to a clear escalation sequence. In cases like the freight environment described later in this article, the problem that stopped dispatch was not just a connectivity failure; it was the absence of one accountable process tying systems, support, and recovery together.

- Technical factor: Overlapping vendors created unclear responsibility for internet failover, application access, and backup validation, which delayed root-cause isolation and extended operational downtime.

- Business consequence: Shipment processing, staff coordination, and same-day billing slowed because no one had authority to direct all vendors under a single incident workflow.

- Local reality: Multi-building warehouse layouts, carrier timing pressure, and mixed provider footprints in Northern Nevada make loosely managed support relationships break down faster under strain.
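The root-cause delay described above is easier to avoid when dependencies are written down rather than remembered during an outage. The sketch below is a minimal Python illustration, assuming a hand-maintained map of which component depends on which. The component names (`dispatch_app`, `isp_circuit`, and so on), the owners, and the helper functions are hypothetical placeholders, not part of any specific toolset. It treats a failing component as a probable root cause only when nothing it depends on, directly or indirectly, is also failing.

```python
# Hypothetical dependency map for a logistics site: each component lists
# the components it needs in order to work. All names are placeholders.
DEPENDS_ON = {
    "dispatch_app": ["firewall", "cloud_identity"],
    "voip": ["firewall"],
    "firewall": ["isp_circuit"],
    "cloud_identity": ["isp_circuit"],
    "isp_circuit": [],
}

# Hypothetical owner for each layer, as it might appear in a support matrix.
OWNER = {
    "dispatch_app": "WMS vendor",
    "voip": "VoIP provider",
    "firewall": "Firewall vendor",
    "cloud_identity": "Internal IT",
    "isp_circuit": "ISP",
}


def all_dependencies(component, deps=DEPENDS_ON):
    """Return every component that `component` relies on, directly or indirectly."""
    seen = set()
    stack = list(deps.get(component, []))
    while stack:
        dep = stack.pop()
        if dep not in seen:
            seen.add(dep)
            stack.extend(deps.get(dep, []))
    return seen


def probable_root_causes(failing, deps=DEPENDS_ON):
    """Return failing components none of whose dependencies are also failing.

    Anything that depends on a failing component is treated as a symptom,
    so troubleshooting starts at the lowest failing layer.
    """
    failing = set(failing)
    return sorted(c for c in failing if not (all_dependencies(c, deps) & failing))


# Example: a circuit outage makes the app, phones, and firewall look broken too.
roots = probable_root_causes({"dispatch_app", "voip", "firewall", "isp_circuit"})
print(roots)                      # ['isp_circuit']
print([OWNER[c] for c in roots])  # ['ISP']
```

Walking the full dependency chain, rather than only direct dependencies, keeps the result correct even when an intermediate layer such as the firewall has not been checked yet.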

How To Remediate The Breakdown And Restore Control

The fix starts with governance before tooling. Every critical system needs a named owner, a support matrix, and a documented escalation path that identifies who handles internet, voice, endpoints, cloud applications, backup infrastructure, and after-hours response. Once that is in place, we typically reduce overlap by standardizing alerting, consolidating administrative access, and documenting application dependencies so recovery does not rely on memory during an outage.
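A support matrix with named owners and escalation order can be kept as structured data so gaps are visible before an outage. The following is a minimal Python sketch, assuming one row per critical system. The class `SupportEntry`, the system names, roles, vendor labels, and the `REQUIRED_SYSTEMS` list are all hypothetical examples rather than a standard format.

```python
from dataclasses import dataclass, field


@dataclass
class SupportEntry:
    """One row of a support matrix. All names used below are placeholders."""
    system: str        # critical system, e.g. "internet" or "backup"
    owner: str         # named internal owner who coordinates the incident
    vendor: str        # external provider responsible for this layer
    escalation: list[str] = field(default_factory=list)  # vendor contacts, in call order
    after_hours: str = ""  # after-hours contact, if the vendor offers one


MATRIX = [
    SupportEntry("internet", "IT coordinator", "ISP",
                 ["ISP help desk", "ISP escalation engineer"], "ISP NOC"),
    SupportEntry("voice", "IT coordinator", "VoIP provider", ["VoIP support queue"]),
    SupportEntry("dispatch_app", "Operations manager", "WMS vendor",
                 ["WMS tier 1", "WMS tier 2"], "WMS on-call"),
    SupportEntry("backup", "IT coordinator", "Backup provider",
                 ["Backup service desk"], "Backup on-call"),
]

# Systems the business has decided must each have a named owner (assumed list).
REQUIRED_SYSTEMS = ["internet", "voice", "endpoints", "cloud_apps", "backup", "dispatch_app"]


def unowned_systems(matrix, required):
    """Return required systems that have no row, and therefore no owner, in the matrix."""
    covered = {entry.system for entry in matrix}
    return [s for s in required if s not in covered]


def escalation_path(matrix, system, after_hours=False):
    """Return the contacts to engage, in order, when `system` fails."""
    for entry in matrix:
        if entry.system == system:
            # The internal owner comes first so every vendor reports to one person.
            path = [entry.owner]
            if after_hours and entry.after_hours:
                path.append(entry.after_hours)
            path.extend(entry.escalation)
            return path
    raise KeyError(f"no owner documented for {system!r}")


print(unowned_systems(MATRIX, REQUIRED_SYSTEMS))  # ['endpoints', 'cloud_apps']
print(escalation_path(MATRIX, "internet", after_hours=True))
# ['IT coordinator', 'ISP NOC', 'ISP help desk', 'ISP escalation engineer']
```

Putting the internal owner at the head of every path reflects the governance point above: vendors escalate to one accountable person instead of to each other.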

From a technical standpoint, logistics hubs benefit from tested restore procedures, internet failover review, role-based admin control, and stronger user protection around email and identity. A practical next step is tightening identity and email security for warehouse operations so password resets, MFA failures, and compromised inboxes do not become another vendor handoff problem during an incident. For operational guidance on incident preparation and recovery planning, the CISA ransomware and recovery guidance is a useful baseline even when the event is not ransomware-driven, because the same discipline applies to containment, communication, and restoration.

- Control step: Build a single incident ownership model with vendor contacts, escalation order, recovery priorities, and restoration testing tied to business functions such as dispatch, receiving, and billing.

- Practical action: Validate backups against actual line-of-business systems, harden MFA and admin access, review failover connectivity, and centralize alerting so one team can coordinate response instead of four vendors debating scope.
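Restoration testing tied to business functions can be tracked as data instead of ad hoc checks. Here is a minimal Python sketch, assuming each function (dispatch, receiving, billing) has a recorded date for its last successful test restore. The 30-day window, the priority order, the sample dates, and the function name `overdue_restore_tests` are hypothetical choices for illustration, not a recommended policy.

```python
from datetime import date, timedelta

# Hypothetical restore-test log: business function -> date of the last
# successful test restore of the systems it runs on (None = never tested).
LAST_RESTORE_TEST = {
    "dispatch": date(2024, 5, 2),
    "receiving": date(2024, 3, 15),
    "billing": None,
}

# Assumed policy: each function needs a verified restore at least this recently.
MAX_TEST_AGE = timedelta(days=30)

# Assumed recovery priority: lower numbers are restored (and retested) first.
RECOVERY_PRIORITY = {"dispatch": 1, "receiving": 2, "billing": 3}


def overdue_restore_tests(log, today, max_age=MAX_TEST_AGE):
    """Return functions whose restore test is missing or older than max_age,
    ordered by recovery priority so the most critical gap is addressed first."""
    overdue = [func for func, last in log.items()
               if last is None or today - last > max_age]
    return sorted(overdue, key=lambda func: RECOVERY_PRIORITY.get(func, float("inf")))


print(overdue_restore_tests(LAST_RESTORE_TEST, today=date(2024, 5, 20)))
# ['receiving', 'billing']
```

A scheduled job could run a check like this and report to the single incident owner, so backup validation no longer depends on each vendor confirming its own jobs.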

Field Evidence: From Multi-Vendor Confusion To Controlled Recovery

In one Northern Nevada freight and distribution environment, the original condition was familiar: separate providers for connectivity, phones, endpoint support, and warehouse software, with no shared incident process. A circuit issue triggered application failures, but the software vendor blamed the firewall, the firewall vendor blamed the ISP, and internal staff spent hours relaying screenshots and ticket numbers between parties. During peak receiving windows, that kind of delay can back up dock scheduling and postpone same-day confirmations.

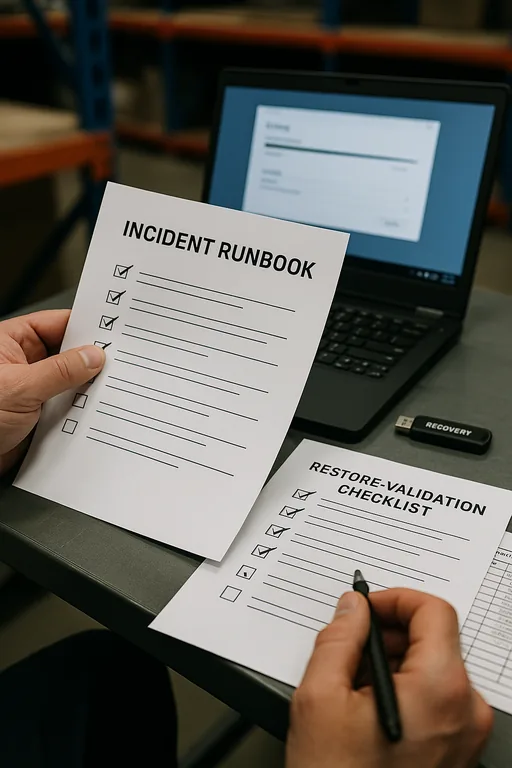

After consolidating escalation ownership, documenting dependencies, and adding clearer security monitoring and response for business systems, the team handled the next service interruption differently. Instead of chasing multiple help desks, it worked from one runbook, isolated the failing dependency quickly, and restored core operations before the delay spread into end-of-day reconciliation. That is the difference between a technical issue and a business stoppage. It is also why incidents like this tend to repeat until ownership is cleaned up.

- Result: Incident coordination time dropped from most of a workday to under 90 minutes, and backup verification improved from ad hoc checks to scheduled, documented restore testing.

Operational Control Reference For Vendor-Driven Outages

Scott Morris is an experienced IT and cybersecurity professional with 16 years of hands-on experience in managed technology services. He specializes in backup and recovery programs and has spent his career building practical recovery, security, and operational continuity processes for businesses across Northern Nevada.

Local Support in Northern Nevada

Logistics operators in Reno, Sparks, Lemmon Valley, and surrounding industrial corridors often need support that understands both the technical stack and the vendor coordination problem behind it. From our office in Reno, the drive to the Patrician Way area is typically about 25 minutes, which makes local response practical when a warehouse, dispatch office, or shipping operation needs onsite troubleshooting tied to a broader recovery plan.

Clear Ownership Prevents Small Failures From Becoming Full Stops

When logistics operations stop because vendors are disorganized, the real issue is usually not just hardware, software, or connectivity. It is the absence of a controlled operating model. If no one owns escalation, backup validation, identity protection, and restoration sequencing, routine incidents spread into dispatch delays, idle labor, and slower billing.

For Northern Nevada logistics hubs, the practical takeaway is straightforward: define ownership, reduce overlap, test recovery, and make sure every critical system has a documented path from alert to restoration. That approach shortens downtime, reduces confusion, and gives operations teams a process they can rely on when systems are under pressure.