Reno Financial IT Fix

A network crash is often the visible symptom of growth outpacing IT capacity, not the root problem itself. In financial offices across Reno, endpoint sprawl, underplanned infrastructure, and inconsistent standards can quietly erode the effectiveness of IT support and the help desk until work stops or risk spikes. The fix usually starts with standardizing how new users, devices, and systems are brought online.

This case study reflects real breakdown patterns documented across 300+ regional IT incidents. Names and identifying details have been modified for confidentiality, while technical and financial data remain accurate to the original events.

Why Growth Triggers the Scalability Ceiling Before the Network Actually Fails

A network crash in a Reno financial office is rarely caused by one bad cable or one overloaded device. More often, it is the point where accumulated growth finally exceeds the assumptions built into the original environment. A firm may start with a modest user count, a flat network, a basic firewall, and a few shared applications. Then it adds advisors, support staff, scanners, wireless devices, compliance tools, cloud sync platforms, and remote access requirements. If those additions happen without a standard onboarding process, the environment becomes harder to support and easier to break.

This is the scalability ceiling. We typically see it when hiring plans move faster than infrastructure planning. In practical terms, the help desk starts logging recurring printer failures, intermittent slowness, IP address conflicts, login delays, and unstable wireless before the larger outage arrives. For firms trying to maintain client responsiveness and documentation accuracy, structured IT support and help desk services in Reno become less about fixing tickets and more about controlling growth before it creates downtime. In Eva's case, the visible crash was only the final symptom of unmanaged expansion across users, endpoints, and network dependencies.

- Endpoint sprawl: New laptops, phones, scanners, and unmanaged peripherals consume switch ports, wireless capacity, and support time when they are added without a standard build process.

- Underplanned infrastructure: Small-business firewalls, aging switches, and limited DHCP ranges often work until a hiring wave or software rollout pushes them past stable operating limits.

- Inconsistent standards: Different naming conventions, ad hoc permissions, and one-off workstation setups make troubleshooting slower and increase the chance of access and policy errors.

- Financial workflow sensitivity: In advisory and accounting environments, even short outages affect document access, client scheduling, secure communications, and billing timelines.
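To make the DHCP point concrete, the short Python sketch below projects address-pool usage after a hiring wave. It is an illustration under stated assumptions, not a sizing tool: the pool range, lease count, and the three-devices-per-user ratio (`DEVICES_PER_USER`) are hypothetical values chosen for the example, and real inputs should come from the firewall's or DHCP server's own lease table.

```python
import ipaddress

# Assumption for illustration only: each staff member brings about three addressable
# devices (laptop, phone, and one peripheral or tablet). Replace with a measured ratio.
DEVICES_PER_USER = 3


def pool_size(start: str, end: str) -> int:
    """Number of addresses in an inclusive DHCP pool range, e.g. .100 through .199."""
    first, last = ipaddress.IPv4Address(start), ipaddress.IPv4Address(end)
    if last < first:
        raise ValueError("pool end must not precede pool start")
    return int(last) - int(first) + 1


def scope_headroom(start: str, end: str, active_leases: int,
                   new_hires: int, shared_devices: int = 0) -> dict:
    """Project pool usage after a hiring wave plus any new shared devices (scanners, printers)."""
    size = pool_size(start, end)
    projected = active_leases + new_hires * DEVICES_PER_USER + shared_devices
    return {
        "pool_size": size,
        "projected_leases": projected,
        "utilization_pct": round(100 * projected / size, 1),
        "exhausted": projected > size,
    }


# Hypothetical office: 100-address pool, 78 leases today, 8 hires and 4 new scanners planned.
print(scope_headroom("192.168.1.100", "192.168.1.199", active_leases=78,
                     new_hires=8, shared_devices=4))
```

With those hypothetical inputs the projection is 78 + 8 × 3 + 4 = 106 leases against a 100-address pool, so the scope would run out during the hiring wave even though it looks comfortable today.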

How to Remediate the Crash and Prevent the Next One

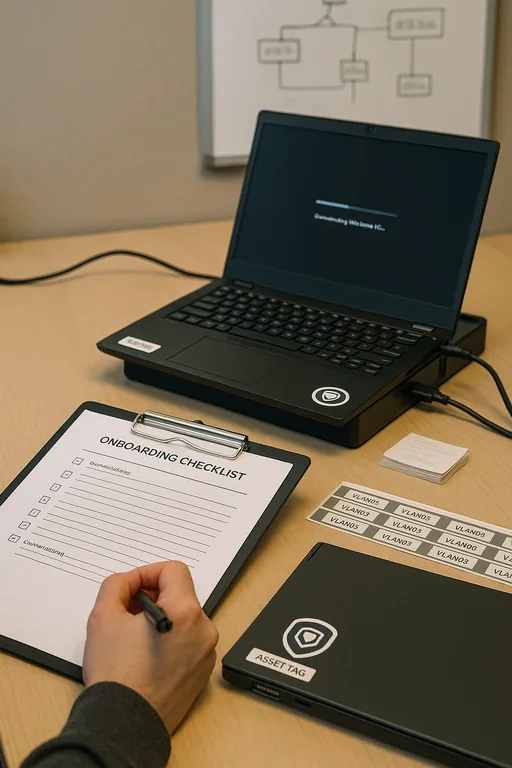

The immediate fix is to restore stable connectivity, identify the choke point, and verify that authentication, file access, and line-of-business applications are functioning normally. The longer-term fix is to standardize growth. That means every new employee, workstation, mobile device, and software platform should follow the same provisioning path, network policy, and security baseline. Financial offices do better when onboarding is treated as infrastructure planning, not just an HR event.
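A standard provisioning path is easier to enforce when it can be checked. As a minimal sketch, assuming a hypothetical naming standard (site code `RNO`, a device-type code, and a three-digit number), a 14-day patch window, and a small set of allowed VLANs, the Python below reports which baseline items a given endpoint record is missing. The field names are illustrative and not taken from any particular RMM or EDR product; the record also mixes a user-level setting (MFA) with device-level fields for brevity.

```python
import re
from dataclasses import dataclass

# Hypothetical naming standard: site code, device type, three-digit number (e.g. RNO-LT-014).
# WS = workstation, LT = laptop, PR = printer, SC = scanner. Illustrative only.
NAME_PATTERN = re.compile(r"^RNO-(WS|LT|PR|SC)-\d{3}$")
MAX_PATCH_AGE_DAYS = 14               # assumed patch window
ALLOWED_VLANS = {"staff", "printers"}  # assumed segments for managed endpoints


@dataclass
class Endpoint:
    name: str
    mfa_enabled: bool
    edr_installed: bool
    days_since_patch: int
    vlan: str


def baseline_gaps(ep: Endpoint) -> list:
    """List every way this endpoint deviates from the (hypothetical) build standard."""
    gaps = []
    if not NAME_PATTERN.match(ep.name):
        gaps.append("name does not follow naming standard")
    if not ep.mfa_enabled:
        gaps.append("MFA not enabled")
    if not ep.edr_installed:
        gaps.append("EDR agent missing")
    if ep.days_since_patch > MAX_PATCH_AGE_DAYS:
        gaps.append(f"last patched {ep.days_since_patch} days ago")
    if ep.vlan not in ALLOWED_VLANS:
        gaps.append(f"unexpected VLAN '{ep.vlan}'")
    return gaps


# A standard build versus a one-off setup typical of unmanaged growth.
print(baseline_gaps(Endpoint("RNO-LT-014", True, True, 3, "staff")))     # []
print(baseline_gaps(Endpoint("bobs-laptop", False, True, 40, "guest")))
```

Returning every gap at once, rather than stopping at the first failure, lets the help desk fix a nonstandard device in one pass.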

We usually recommend segmenting critical systems, reviewing switch and firewall utilization, expanding address management where needed, and documenting a repeatable deployment standard for users and devices. Firms with compliance-driven data retention and recovery requirements should align those controls with compliance-focused IT management so that outages do not turn into data integrity problems. For practical guidance on resilience planning, CISA's resilience and recovery guidance is a useful reference even outside ransomware scenarios, because it reinforces tested backups, access control, and recovery discipline.
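The segmentation recommendation can be sanity-checked on paper before anything is configured. The sketch below assumes a hypothetical 10.20.0.0/16 site block and illustrative VLAN IDs. It uses Python's standard `ipaddress` module to confirm that each segment fits inside the site block and that no two segments overlap, and it models inter-VLAN access as default-deny with an explicit allow list. The allowed flows are placeholders; the enforced policy lives on the firewall or layer-3 switch.

```python
import ipaddress

# Hypothetical addressing plan: VLAN ID -> (segment name, subnet). Values are illustrative.
SITE_BLOCK = ipaddress.ip_network("10.20.0.0/16")
SEGMENTS = {
    10: ("staff", "10.20.10.0/24"),
    20: ("printers", "10.20.20.0/24"),
    30: ("financial-systems", "10.20.30.0/24"),
    40: ("guest", "10.20.40.0/24"),
}

# Default-deny between segments: only these (source, destination) pairs are permitted.
# Placeholder policy; guest traffic gets no internal access at all.
ALLOWED_FLOWS = {
    ("staff", "printers"),
    ("staff", "financial-systems"),
}


def validate_plan(segments: dict, site: ipaddress.IPv4Network = SITE_BLOCK) -> list:
    """Return a list of problems: subnets outside the site block or overlapping each other."""
    nets = {vid: ipaddress.ip_network(cidr) for vid, (_, cidr) in segments.items()}
    problems = []
    for vid, net in sorted(nets.items()):
        if not net.subnet_of(site):
            problems.append(f"VLAN {vid} ({net}) is outside {site}")
    ids = sorted(nets)
    for i, a in enumerate(ids):
        for b in ids[i + 1:]:
            if nets[a].overlaps(nets[b]):
                problems.append(f"VLAN {a} overlaps VLAN {b}")
    return problems


def flow_permitted(src: str, dst: str) -> bool:
    """Inter-segment traffic is denied unless the pair is explicitly allowed."""
    return src == dst or (src, dst) in ALLOWED_FLOWS


print(validate_plan(SEGMENTS))                       # []
print(flow_permitted("guest", "financial-systems"))  # False
```

Keeping the allow list directional means a rule letting staff reach printers does not silently let printers reach staff workstations.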

- Standardized onboarding: Build every new user and endpoint from the same checklist, including naming, permissions, MFA, patching, EDR, and application access.

- Capacity review: Audit switch utilization, firewall throughput, wireless density, and DHCP scope usage before the next hiring phase.

- Network segmentation: Separate staff workstations, guest wireless, printers, and sensitive financial systems with VLANs and access policies.

- Backup validation: Confirm that file shares, cloud data, and configuration backups are recoverable, not just scheduled.
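Backup validation means proving a restore actually works. One simple form of that check, sketched here under the assumption that the backup tool can restore a file share into a separate scratch directory, compares every source file against its restored copy by SHA-256 hash and reports anything missing or altered. It covers file-level data only; configuration backups and cloud data need their own restore tests. The paths in the usage comment are hypothetical.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in 64 KiB chunks so large files are not loaded into memory at once."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            h.update(chunk)
    return h.hexdigest()


def verify_restore(source_dir: Path, restored_dir: Path) -> dict:
    """Compare every file under source_dir with its counterpart in restored_dir."""
    missing, mismatched, ok = [], [], 0
    for src in sorted(source_dir.rglob("*")):
        if not src.is_file():
            continue
        rel = src.relative_to(source_dir)
        dst = restored_dir / rel
        if not dst.is_file():
            missing.append(str(rel))
        elif sha256_of(src) != sha256_of(dst):
            mismatched.append(str(rel))
        else:
            ok += 1
    return {"verified": ok, "missing": missing, "mismatched": mismatched}


# Hypothetical paths: a live share and a test restore of last night's backup.
# print(verify_restore(Path("/srv/shares/clients"), Path("/restore-test/clients")))
```

Files edited after the backup was taken will also show up as mismatches, so the comparison is most meaningful against a snapshot taken at the same point as the backup.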

Field Evidence: Stabilizing a Growing Reno Financial Workflow

We worked with an office in one of Reno's professional office corridors where staff growth had outpaced the original network design. Before remediation, the office was seeing recurring login delays, dropped wireless sessions in conference rooms, and intermittent access to shared document repositories during peak morning activity. The pattern was most noticeable on busy days, when multiple advisors were uploading reports while administrative staff were scanning intake packets.

After standardizing workstation deployment, replacing an undersized switch stack, separating guest and production traffic, and documenting recovery priorities, the office moved from reactive troubleshooting to predictable operations. Just as important, leadership had a clearer path for adding future staff without recreating the same failure pattern. To support that, they also formalized backup and recovery programs for business continuity so a network event would not cascade into a records access problem.

- Result: Unplanned connectivity incidents dropped significantly, new-user setup time was reduced by more than 40 percent, and the office completed month-end processing without another network-wide interruption.

Reference Points for Financial Office Network Scalability

Scott Morris is an IT and cybersecurity professional with 16 years of hands-on experience in managed technology services. He specializes in IT support and help desk services and has spent his career building practical recovery, security, and operational continuity processes for businesses across Reno and Northern Nevada.

Local Support in Reno

We regularly support offices across Reno where growth, compliance requirements, and client-facing deadlines all depend on stable systems. From downtown to South Reno corridors like Kietzke, response planning matters because even a short outage can disrupt scheduling, document access, and advisor productivity.

Standardize Growth Before the Next Outage Forces the Issue

A network crash in a financial office usually means the environment has already been under strain for some time. The real issue is not just the outage event. It is the lack of consistent standards for adding people, devices, applications, and recovery controls as the business grows.

For Reno firms, the practical takeaway is straightforward: review capacity before the next hiring phase, standardize onboarding, and validate recovery processes before a busy reporting or client-service period exposes the weak point. That approach reduces downtime, protects staff productivity, and keeps support operations predictable.