Reno Finance Crash Test

A network crash is often the visible symptom of untested backups, not the root problem itself. In financial offices across Reno, issues like failed restore tests, missing dependencies, and an unclear recovery order can quietly undermine compliance advisory programs until work stops or risk spikes. The fix usually starts with validating backups regularly and proving recovery before a real outage.

This case study reflects real breakdown patterns documented across 300+ regional IT incidents. Names and identifying details have been modified for confidentiality, while technical and financial data remain accurate to the original events.

Why a Network Crash Often Points to a Failed Resilience Test

In a Reno financial office, a network crash rarely starts and ends with switching hardware or internet service. More often, the visible outage exposes a resilience gap that has been sitting in the background for months: backups exist, but no one has proven they can restore the full operating environment in the sequence the business actually needs. That distinction matters. A backup is only a copy. Business continuity is the ability to keep working, or recover in a controlled way, while critical systems are down.

We typically find three root causes. First, restore tests are either never performed or are limited to checking whether a file can be opened. Second, dependencies are missed, such as domain services, mapped drives, SQL instances, cloud sync tools, or compliance archives that must come back online before staff can work. Third, there is no documented recovery order, so during an outage the team is guessing. In regulated environments, that can affect record access, supervisory review, retention workflows, and reporting. For firms that rely on compliance advisory programs in Reno, the resilience test is not a side task; it is part of operational control.

That is why the resilience test gap becomes so expensive. A server may be restored, but if authentication is broken, if the document management platform cannot reconnect, or if a cloud connector fails after the restore, the office is still effectively down. In the case above, Declan did not need a better backup slogan. She needed proof that the office could recover in a real-world sequence under pressure.

- Untested restore chain: Backup jobs may report success while application dependencies, permissions, DNS, line-of-business databases, and cloud integrations still fail during an actual recovery event.

- Unclear recovery order: Financial offices often need identity services, file access, compliance archives, and business applications restored in a specific sequence before advisors and support staff can resume work.

- Operational blind spots: Multi-vendor environments using local internet providers, Microsoft 365, and on-premise systems can hide failure points until a real outage forces everything to reconnect at once.
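
One way to take the guessing out of recovery order is to write the dependencies down as data and let a topological sort produce the sequence. The sketch below is illustrative only: the system names and the dependency map are assumptions, not an inventory from any specific office, and it uses Python's standard `graphlib` module (Python 3.9+).

```python
from graphlib import TopologicalSorter, CycleError

# Hypothetical dependency map: each system lists what must be online before it.
# These names are placeholders; a real map comes from the office's own inventory.
DEPENDENCIES = {
    "dns": set(),
    "identity_services": {"dns"},
    "file_shares": {"identity_services"},
    "sql_database": {"identity_services"},
    "compliance_archive": {"file_shares", "sql_database"},
    "business_applications": {"file_shares", "sql_database"},
    "cloud_sync": {"identity_services", "file_shares"},
}


def recovery_order(dependencies):
    """Return systems in an order where every dependency is restored first."""
    try:
        return list(TopologicalSorter(dependencies).static_order())
    except CycleError as exc:
        # exc.args[1] holds the list of nodes that form the cycle.
        raise ValueError(f"Circular dependency in recovery map: {exc.args[1]}") from exc


if __name__ == "__main__":
    for step, system in enumerate(recovery_order(DEPENDENCIES), start=1):
        print(f"{step}. {system}")
```

The exact order among systems that do not depend on each other may vary, but identity services will always come before file shares, and both before the applications that need them. A circular dependency raises an error, which is itself a useful finding during planning.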

How to Fix the Resilience Test Gap Before the Next Outage

The practical fix is to move from backup confidence to recovery proof. That starts with scheduled restore validation, not just backup completion alerts. We recommend testing representative restores for file shares, virtual machines, authentication services, and the applications that support client records, billing, and supervisory workflows. For many firms, that also means reviewing how Microsoft 365, local file systems, and identity services interact, especially when hybrid environments are involved. Structured cloud and Microsoft environment management helps reduce the common gap between what was copied and what can actually be brought back online in working order.

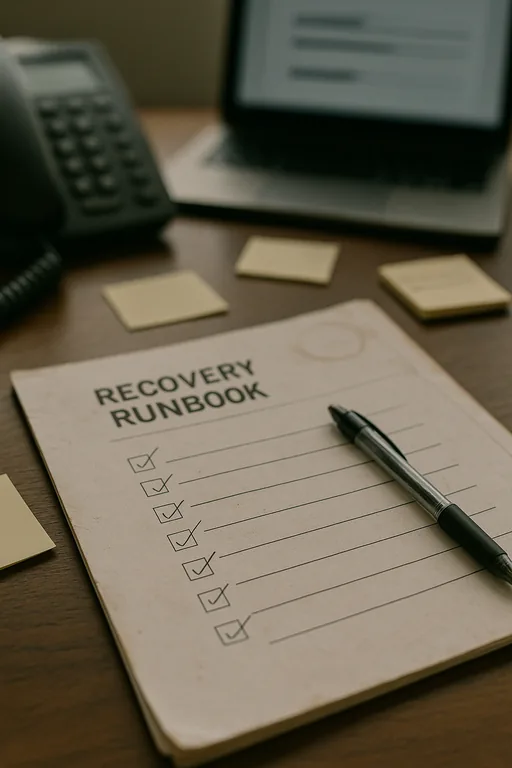

Remediation should also include a written recovery runbook with system priority, dependency mapping, owner assignments, and target recovery times. If a financial office has to restore under pressure, the team should know what comes up first, what can wait, and how to verify each stage. The CISA ransomware and recovery guidance is useful here because it emphasizes tested backups, restoration planning, and validation rather than assumptions.
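
A runbook of this kind can be kept as structured data so that each restore test is checked against its targets. The following is a minimal sketch, assuming hypothetical system names, owners, target times, and verification steps; none of these values come from a real engagement.

```python
from dataclasses import dataclass


@dataclass
class RunbookEntry:
    system: str
    priority: int        # 1 = restore first
    owner: str
    target_minutes: int  # target recovery time for this system
    verify_step: str     # how to confirm the stage actually works


# Hypothetical runbook entries for illustration only.
RUNBOOK = [
    RunbookEntry("identity_services", 1, "infrastructure lead", 30,
                 "Test account can sign in"),
    RunbookEntry("file_shares", 2, "infrastructure lead", 45,
                 "Mapped drive opens with correct permissions"),
    RunbookEntry("compliance_archive", 3, "compliance officer", 60,
                 "Sample record can be retrieved"),
]


def missed_targets(runbook, measured_minutes):
    """List systems, in priority order, that exceeded their target or were never tested."""
    missed = []
    for entry in sorted(runbook, key=lambda e: e.priority):
        actual = measured_minutes.get(entry.system)
        if actual is None or actual > entry.target_minutes:
            missed.append(entry.system)
    return missed


if __name__ == "__main__":
    # Measured restore times from a hypothetical test; the archive was not tested.
    results = {"identity_services": 20, "file_shares": 50}
    print(missed_targets(RUNBOOK, results))
```

Treating an untested system the same as a missed target is a deliberate choice in this sketch: a system with no measured restore time has not been proven recoverable.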

- Restore validation: Test backups on a schedule using real systems, not just sample files, and confirm applications open with correct permissions and data integrity.

- Dependency mapping: Document domain services, databases, shared storage, cloud connectors, and compliance tools that must be restored in sequence.

- MFA and identity hardening: Protect administrative access so recovery actions are not delayed by compromised credentials or emergency privilege confusion.

- Backup immutability and alerting: Use protected backup storage and alerting that flags failed jobs, repository issues, and restore-test exceptions early.
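
As a small illustration of the restore validation step, the sketch below compares a restored folder against its source by SHA-256 hash and reports files that are missing or differ. The function names and directory arguments are hypothetical, and this covers file integrity only; it does not prove that permissions are correct or that applications open against the restored data.

```python
import hashlib
from pathlib import Path


def sha256_of(path):
    """Hash a file in chunks so large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(1024 * 1024), b""):
            digest.update(chunk)
    return digest.hexdigest()


def compare_restore(source_dir, restored_dir):
    """Return (missing, mismatched) relative paths found when checking a restore."""
    source_dir, restored_dir = Path(source_dir), Path(restored_dir)
    missing, mismatched = [], []
    for src in sorted(p for p in source_dir.rglob("*") if p.is_file()):
        rel = src.relative_to(source_dir)
        restored = restored_dir / rel
        if not restored.is_file():
            missing.append(rel.as_posix())
        elif sha256_of(src) != sha256_of(restored):
            mismatched.append(rel.as_posix())
    return missing, mismatched
```

A scheduled test could call `compare_restore` on a representative share after each trial restore and raise an alert whenever either list is non-empty.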

Field Evidence: Recovery Order Matters More Than Backup Status

We worked through a similar Northern Nevada scenario where a professional office had nightly backups showing green across the board, yet a real outage near South Reno still left staff unable to work for hours because file services restored before identity and application dependencies were ready. The original assumption was that the network had failed. The actual issue was that the environment had never been tested as a full business recovery process.

After remediation, the office had a documented restore order, quarterly validation tests, and clearer infrastructure ownership across servers, cloud services, and edge equipment. That kind of planning is especially important for firms with mixed on-premise and hosted systems, and for organizations relying on IT systems for multi-location operations where one outage can affect advisors, administrators, and remote staff at the same time.

- Result: Recovery verification time dropped from most of a business day to under 90 minutes for priority systems, with fewer manual workarounds and a cleaner audit trail for operational review.

Resilience Test Reference Points for Financial Offices

Scott Morris is an experienced IT and cybersecurity professional with 16 years of hands-on experience in managed technology services. He specializes in Compliance Advisory Programs and has spent his career building practical recovery, security, and operational continuity processes for businesses across Reno and Northern Nevada.

Local Support in Reno

Our office on Ryland Street supports businesses throughout Reno, including financial offices operating around the Reno Experience District. For firms dealing with backup validation, recovery planning, or a suspected network failure, local proximity matters because the first step is often separating a connectivity issue from a broader resilience failure. The drive to this service area typically takes about 7 minutes under normal conditions, which helps when an office needs fast on-site verification of restore order, infrastructure dependencies, and business continuity priorities.

What Financial Offices Should Take Away

A network crash in a Reno financial office is often the moment an untested recovery plan gets exposed. If backups have not been validated against real dependencies, the business may still be down even when the data technically exists. That is the resilience test gap: the difference between having copies and being able to resume operations in a controlled, auditable way.

The practical response is straightforward. Test restores on a schedule, document recovery order, verify application dependencies, and treat continuity as an operating process rather than a storage task. When those controls are in place, outages become easier to contain, compliance pressure is easier to manage, and staff can return to work with less confusion and less downtime.