Reno Lockout Help

What looks like a one-off issue is often tied to untested backups. In medical practice environments, failed restore tests, missing dependencies, and an unclear recovery order can turn into problems with recovery time, data availability, and business continuity long before anyone notices the warning signs. Closing those gaps early makes IT support and help desk operations far more resilient.

This case study reflects real breakdown patterns documented across 300+ regional IT incidents. Names and identifying details have been modified for confidentiality, while technical and financial data remain accurate to the original events.

Why Lockouts Often Point to a Resilience Test Failure

A medical practice in Washoe County can appear fully protected on paper and still fail under real outage conditions. That is the core issue behind the resilience test gap. A backup file may exist, but if no one has validated restore order, application dependencies, credential access, and recovery timing, the organization does not actually know whether it can resume operations. In healthcare settings, that difference matters quickly because scheduling, intake, chart access, billing, and document workflows are tightly connected.

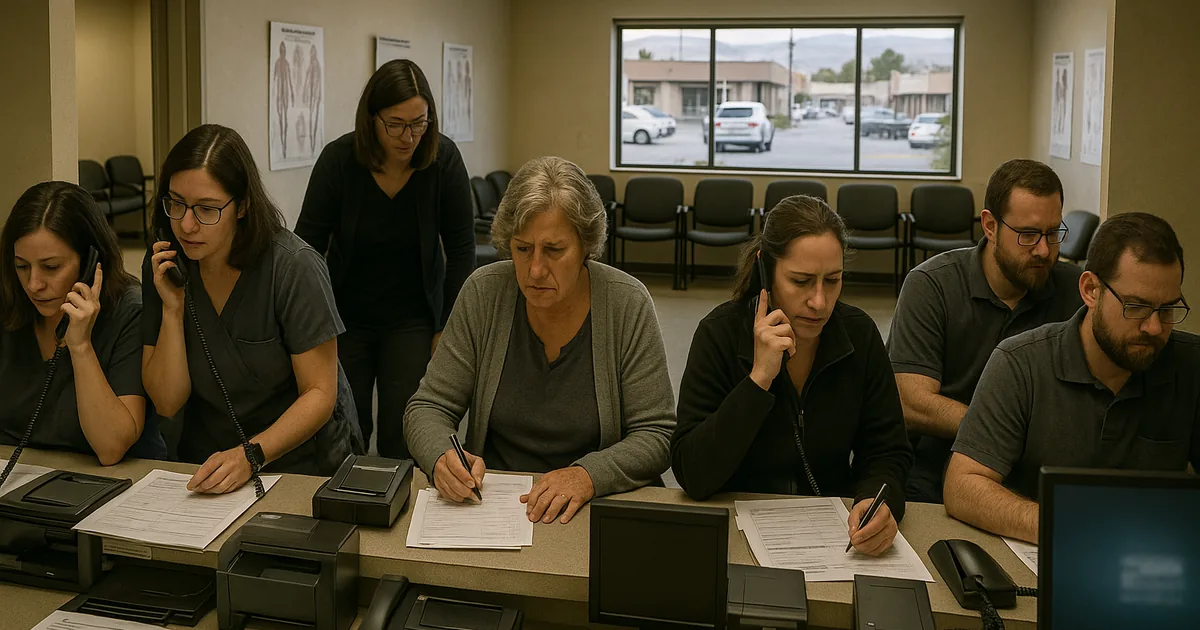

We typically find that the first symptom is not a dramatic disaster. It is a lockout, a failed login after a server reboot, a corrupted virtual machine, or an application database that will not mount cleanly. Once that happens, the practice discovers that the backup was only a copy, not a tested recovery process. That is why businesses dealing with recurring support interruptions often need structured IT support and help desk in Reno that includes restore validation, not just ticket response. In cases like Taisha’s, the immediate outage is only the visible part of a larger continuity problem.

- Untested restore chain: Backup jobs may complete successfully while critical services such as SQL instances, domain authentication, EHR connectors, printers, scanning workflows, or shared drive permissions fail during recovery.

- Missing recovery order: If staff do not know which systems must come back first, a restored server can still leave the practice unable to check in patients or process claims.

- False confidence from green dashboards: A “successful backup” status does not confirm that data is usable, current, or recoverable within the practice’s required downtime window.

- Operational exposure in healthcare: Delayed chart access, intake bottlenecks, and billing disruption can create both patient service issues and compliance concerns.
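
The missing recovery order described above can be made concrete with a small dependency map. What follows is a minimal sketch, not a description of any specific practice's environment: the system names and the dependencies between them are hypothetical placeholders, and the code assumes Python 3.9 or later for the standard-library `graphlib` module. It derives a restore order in which each system comes back only after the services it relies on.

```python
from graphlib import TopologicalSorter

# Hypothetical dependency map for illustration only.
# Each key is a system; its list names what must already be running
# before that system can be restored and validated.
DEPENDS_ON = {
    "domain_auth": [],
    "sql_instance": ["domain_auth"],
    "file_shares": ["domain_auth"],
    "ehr_app": ["sql_instance", "domain_auth"],
    "scanning": ["file_shares", "ehr_app"],
}


def recovery_order(depends_on):
    """Return the systems in an order where dependencies come first."""
    sorter = TopologicalSorter(depends_on)
    return list(sorter.static_order())


if __name__ == "__main__":
    for step, system in enumerate(recovery_order(DEPENDS_ON), start=1):
        print(f"{step}. restore and verify {system}")
```

If the map contains a circular dependency, `static_order()` raises `graphlib.CycleError`, which is a useful finding in its own right during planning. Systems that do not depend on each other may appear in either order, so the output is best treated as one valid sequence rather than the only one.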

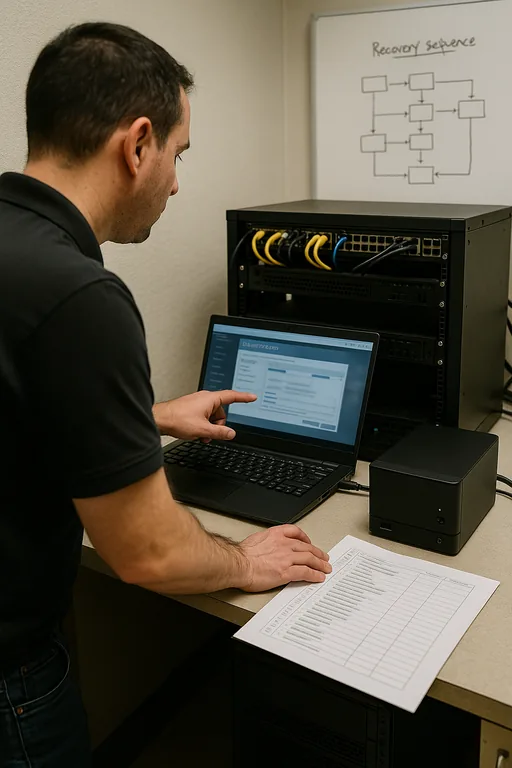

How Medical Practices Close the Recovery Gap

The fix is practical but disciplined. First, define what must be restored for the practice to function, not just what data exists. That usually includes the practice management platform, EHR database, domain services, file shares, scanning workflows, printers, VPN access, and any cloud sync or imaging connectors. Then test those restores on a schedule using the same conditions that would matter during a real outage. This is where compliance-focused IT management becomes important, because healthcare operations need documented recovery objectives, evidence of testing, and clear accountability for who validates each system.

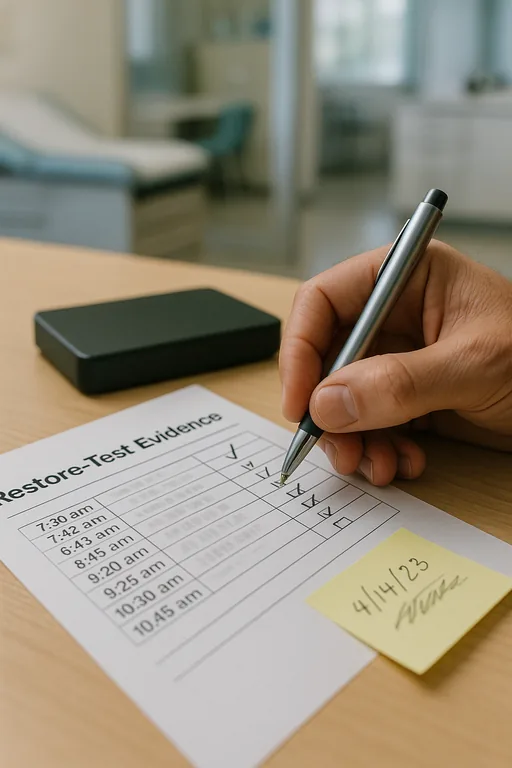

We also recommend separating backup success from business continuity success. A backup report should confirm completion, but a resilience test should confirm that the restored environment actually works, that staff can authenticate, and that the practice can process patients and billing again. For healthcare organizations, the CISA ransomware and recovery guidance is useful because it reinforces offline recovery planning, restore testing, and operational recovery steps rather than relying on assumptions.

- Restore validation: Run scheduled test restores for servers, databases, and file systems so the team can verify data integrity and application startup.

- Dependency mapping: Document which systems must recover first, including authentication, database services, and network shares.

- Recovery time targets: Set realistic RTO and RPO expectations for front desk, clinical, and billing workflows.

- Access hardening: Protect backup consoles and admin accounts with MFA, role separation, and alerting for failed jobs or unauthorized changes.

- Evidence for regulated operations: Maintain test records and recovery documentation that support regulatory compliance for medical offices when auditors or insurers ask how continuity is validated.
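
One way to keep backup success separate from continuity success is to record each restore drill as structured data and check it against the recovery targets. The sketch below is illustrative only: the `DrillResult` fields, the `evaluate_drill` function, and the example RTO and RPO values are assumptions made for this example, not figures from the incidents described here or from any backup product.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass
class DrillResult:
    """Outcome of one test restore (hypothetical record layout)."""
    system: str
    backup_job_ok: bool       # what the backup dashboard reported
    restore_minutes: float    # measured time to a usable system
    data_age_minutes: float   # age of the newest restored data
    staff_can_log_in: bool    # authentication verified after restore
    app_starts: bool          # application or database came up cleanly


def evaluate_drill(result, rto, rpo):
    """Return a list of problems; an empty list means the drill passed."""
    problems = []
    if not result.backup_job_ok:
        problems.append("backup job did not complete")
    if not result.app_starts:
        problems.append("application did not start after restore")
    if not result.staff_can_log_in:
        problems.append("staff could not authenticate after restore")
    if timedelta(minutes=result.restore_minutes) > rto:
        problems.append("restore time exceeded the RTO")
    if timedelta(minutes=result.data_age_minutes) > rpo:
        problems.append("restored data is older than the RPO allows")
    return problems


if __name__ == "__main__":
    # Example values are invented: a "green" backup whose restore fails.
    drill = DrillResult("ehr_database", True, 95, 30, True, False)
    issues = evaluate_drill(drill, rto=timedelta(hours=2), rpo=timedelta(hours=24))
    print(drill.system, "PASS" if not issues else issues)
```

Keeping the dashboard status as just one field among several makes it harder for a completed backup job to stand in for a verified recovery, and the saved records can double as the test evidence mentioned above.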

Field Evidence: From Backup Status to Verified Recovery

We worked through a similar pattern with a multi-provider office in the Reno-Sparks area where the environment looked stable until a storage fault forced an urgent restore. Before remediation, the practice had daily backups but no documented restore sequence, no recent test of the line-of-business database, and no confirmation that staff permissions would survive recovery. The result was a long morning of manual intake, delayed claims work, and uncertainty about whether the restored data set was complete.

After implementing quarterly restore drills, dependency checklists, and recovery validation for the application stack, the same type of disruption became manageable. Instead of improvising under pressure, the office had a known order of operations, a tested recovery target, and a clear communication path for front-desk staff. In Northern Nevada, where smaller practices often run lean teams and cannot absorb half a day of downtime easily, that operational clarity is what changes the outcome.

- Result: Recovery verification time dropped from several uncertain hours to a documented 70-minute restore workflow with confirmed access to scheduling, shared files, and billing functions.

Resilience Test Reference Points for Medical Practices

Scott Morris is an IT and cybersecurity professional with 16 years of hands-on experience in managed technology services. He specializes in IT support and help desk services and has spent his career building practical recovery, security, and operational continuity processes for businesses across Washoe County and Northern Nevada.

Local Support in Washoe County

From Reno Computer Services at 500 Ryland Street, we regularly support medical and professional offices across Reno, Sparks, and surrounding Washoe County corridors. For practices near Meadowood Mall and South Reno, response logistics are usually straightforward. The larger issue is making sure recovery planning is already tested before a lockout or server failure interrupts patient flow, billing, and staff access.

Backups Are Not the Same as Recovery

The main issue behind a medical practice lockout is often not the lockout itself. It is the absence of tested recovery. If the practice cannot prove that systems restore in the right order, with the right permissions and application dependencies, then a backup job report offers limited operational value. In healthcare environments, that gap affects scheduling, patient intake, billing, and continuity very quickly.

For Washoe County practices, the practical takeaway is simple: test restores on purpose, document the recovery sequence, and measure how long it actually takes to get staff working again. That is what turns backup from a checkbox into a continuity control.