Reno Lockout Med

Backup and recovery gaps tend to stay hidden until something important breaks. For medical practices in South Meadows, that often means a lockout, avoidable delays, or a bigger recovery burden than expected. The best response is to validate backups regularly and prove recovery before a real outage.

This case study reflects real breakdown patterns documented across 300+ regional IT incidents. Names and identifying details have been modified for confidentiality, while technical and financial data remain accurate to the original events.

Why a Backup Copy Is Not the Same as Real Resilience

A medical practice gets into trouble when leadership assumes that having backups means recovery is covered. It does not. A backup is only a stored copy of data. Resilience is the ability to restore systems, confirm data integrity, reconnect users, and keep operations moving under pressure. In South Meadows clinics, where schedules are tight and patient flow depends on immediate access to practice management and imaging systems, the gap between those two ideas becomes obvious the moment a lockout happens.

We typically find that the underlying failure is not the outage itself. The real failure is the missing test. Backups may be running every night, but no one has recently verified restore times, application consistency, credential dependencies, or whether the recovered server can actually support front-desk and clinical workflows. That is why businesses reviewing backup and disaster recovery in Reno should focus on proof of recovery, not just backup job success messages. In cases like Brianna’s, the lockout is only the symptom; the deeper issue is that the practice cannot confidently answer how long a restore will take or what will still be unavailable after the restore completes.

- Untested recovery paths: Backup software may report success while restored systems still fail because of broken permissions, incomplete databases, expired service accounts, or missing application dependencies.

- Workflow interruption: Medical front desks, billing teams, and providers lose time quickly when scheduling, eligibility checks, scanned records, or line-of-business applications are unavailable.

- False continuity assumptions: Many practices have data copies but no practical plan for how phones, workstations, internet access, and cloud applications will function during a server-side event.

- Local operational pressure: In Reno and South Meadows, multi-provider offices often cannot absorb even a half day of disruption without creating downstream scheduling and revenue issues.
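One way to move from backup job success messages toward proof of recovery is to track, for each system, when a restore was last verified and how long it took. The Python sketch below is a hypothetical audit, not a feature of any particular backup product. The `SystemRecord` fields, the system names, and the 90-day window (chosen to match a quarterly testing cadence) are all illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional, Tuple


@dataclass
class SystemRecord:
    """Hypothetical inventory entry; field names are illustrative, not from any product."""
    name: str
    last_backup_success: Optional[date]
    last_verified_restore: Optional[date]
    measured_restore_minutes: Optional[int]


def recovery_gaps(records: List[SystemRecord], today: date,
                  max_test_age_days: int = 90) -> List[Tuple[str, str]]:
    """Return (system, reason) pairs for systems whose recovery is not proven."""
    cutoff = today - timedelta(days=max_test_age_days)
    gaps = []
    for r in records:
        if r.last_backup_success is None:
            gaps.append((r.name, "no successful backup on record"))
        elif r.last_verified_restore is None:
            gaps.append((r.name, "backups succeed but no restore has been tested"))
        elif r.last_verified_restore < cutoff:
            gaps.append((r.name, "last restore test is older than the policy window"))
        elif r.measured_restore_minutes is None:
            gaps.append((r.name, "restore tested but duration was not recorded"))
    return gaps


# Illustrative data only (hypothetical systems and dates).
today = date(2024, 6, 1)
inventory = [
    SystemRecord("practice-mgmt-server", date(2024, 5, 31), None, None),
    SystemRecord("file-share", date(2024, 5, 31), date(2024, 1, 10), 40),
    SystemRecord("scan-station", date(2024, 5, 31), date(2024, 5, 1), 20),
]
for name, reason in recovery_gaps(inventory, today):
    print(f"{name}: {reason}")
```

In this example the practice management server is flagged even though its nightly backups succeed. That is the same gap described above: a stored copy exists, but nobody can say how long a restore would take.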

How Medical Practices Close the Resilience Test Gap

The fix is operational discipline. Start by identifying the systems that matter most: EHR access, scheduling, billing, file shares, scanning, and any local line-of-business server that supports patient intake. Then test restores against those systems on a schedule, not just after a failure. A proper resilience test confirms whether the backup image mounts, whether the application starts cleanly, whether users can authenticate, and whether the recovered environment meets an acceptable recovery time objective.
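The test sequence in the paragraph above (the image mounts, the application starts, users can authenticate, and the restore fits the recovery time objective) can be captured as an ordered checklist. The sketch below assumes each check is a Python callable you supply for your own environment. The stub lambdas stand in for real probes, and the 120-minute RTO is a placeholder rather than a recommendation.

```python
import time
from typing import Callable, Dict, List, Tuple

# A check is (description, callable); the callable returns True when the check passes.
Check = Tuple[str, Callable[[], bool]]


def run_resilience_test(checks: List[Check], rto_minutes: float) -> Dict[str, object]:
    """Run checks in order, stop at the first failure, and compare elapsed time to the RTO."""
    start = time.monotonic()
    results: List[Tuple[str, bool]] = []
    for description, check in checks:
        try:
            ok = bool(check())
        except Exception:
            ok = False  # a check that crashes counts as a failed check
        results.append((description, ok))
        if not ok:
            break  # later checks depend on earlier ones, e.g. no login test without a running app
    elapsed_minutes = (time.monotonic() - start) / 60
    all_passed = len(results) == len(checks) and all(ok for _, ok in results)
    return {
        "results": results,
        "elapsed_minutes": elapsed_minutes,
        "all_checks_passed": all_passed,
        "within_rto": all_passed and elapsed_minutes <= rto_minutes,
    }


# Stub checks stand in for real probes against a restored test server (hypothetical).
checks = [
    ("backup image mounts", lambda: True),
    ("application service starts", lambda: True),
    ("test user can authenticate", lambda: False),
    ("front-desk scheduling screen loads", lambda: True),
]
report = run_resilience_test(checks, rto_minutes=120)
print(report["results"], report["within_rto"])
```

A restore only counts as passing when every check succeeds and the elapsed time fits the RTO. Here the run stops at authentication, which mirrors the expired service account failures that backup software alone will not reveal.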

We also recommend documenting ownership, escalation paths, and infrastructure dependencies so the practice is not improvising during an outage. That usually includes segmented backup storage, immutable or protected copies where appropriate, MFA hardening on backup administration, and regular validation through structured infrastructure management for medical operations. For healthcare organizations, the CISA ransomware and recovery guidance is a practical reference because it emphasizes tested recovery, not just prevention.
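Protected copies are only useful if you can tell when one has been altered. A common complement to immutable storage is a checksum manifest. You record a SHA-256 hash of each backup file when it is written, keep the manifest somewhere the backup credentials cannot modify, and re-verify it on a schedule. The Python sketch below uses only the standard library. The file names and directory layout are assumptions, and it does not replace the immutability features of a real backup platform.

```python
import hashlib
import tempfile
from pathlib import Path
from typing import Dict, List


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large backup images do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(backup_dir: Path) -> Dict[str, str]:
    """Map each file's path (relative to backup_dir) to its SHA-256 hex digest."""
    return {
        p.relative_to(backup_dir).as_posix(): sha256_of(p)
        for p in sorted(backup_dir.rglob("*"))
        if p.is_file()
    }


def verify_manifest(backup_dir: Path, manifest: Dict[str, str]) -> Dict[str, List[str]]:
    """Compare the files on disk now against a previously recorded manifest."""
    current = build_manifest(backup_dir)
    return {
        "missing": sorted(set(manifest) - set(current)),
        "altered": sorted(k for k in set(manifest) & set(current) if manifest[k] != current[k]),
        "unexpected": sorted(set(current) - set(manifest)),
    }


# Demo against a throwaway directory (hypothetical file names, illustrative only).
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "nightly-a.bak").write_bytes(b"original")
    (root / "nightly-b.bak").write_bytes(b"second copy")
    manifest = build_manifest(root)
    (root / "nightly-a.bak").write_bytes(b"tampered")  # simulate an altered copy
    (root / "nightly-b.bak").unlink()                  # simulate a deleted copy
    integrity_report = verify_manifest(root, manifest)

print(integrity_report)
```

Any non-empty list in the report is a reason to investigate before relying on that copy for a restore.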

- Restore validation: Perform scheduled test restores of critical servers and confirm application-level functionality, not just file recovery.

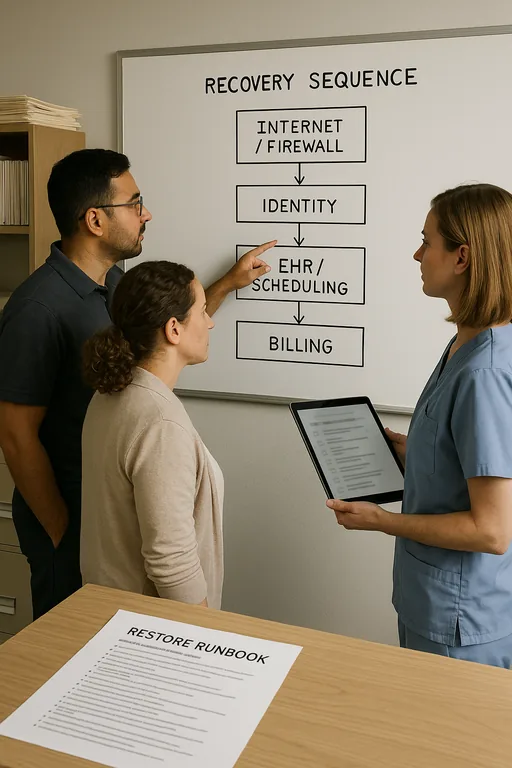

- Recovery runbooks: Maintain a written sequence for restoring internet, identity services, core applications, and user access in the right order.

- Backup isolation: Protect backup repositories with separate credentials, limited administrative access, and monitoring for failed or altered jobs.

- Continuity planning: Define how the practice will continue intake, scheduling, and billing if the primary server or tenant is unavailable for several hours.
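Getting the restore order right, as the recovery runbook item describes, is a dependency problem: a system should come back only after the systems it relies on. If the dependency map is written down, Python's standard-library `graphlib.TopologicalSorter` (Python 3.9+) can turn it into restore waves. The system names and dependencies below are hypothetical. A real map would come from the practice's own dependency mapping.

```python
from graphlib import TopologicalSorter

# Hypothetical dependency map: each system lists what must be restored before it.
DEPENDENCIES = {
    "internet": set(),
    "identity": {"internet"},
    "file-shares": {"identity"},
    "practice-management": {"identity"},
    "scanning": {"file-shares", "practice-management"},
    "user-access": {"practice-management", "scanning"},
}


def recovery_waves(dependencies):
    """Group systems into waves; each wave depends only on systems in earlier waves."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError if the map contains a circular dependency
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)  # mark this wave restored so its dependents become ready
    return waves


for number, wave in enumerate(recovery_waves(DEPENDENCIES), start=1):
    print(f"Wave {number}: {', '.join(wave)}")
```

Systems in the same wave can be restored in parallel. If the map contains a circular dependency, `prepare()` raises `graphlib.CycleError`, which is itself a useful finding: it means the documented recovery order cannot actually be followed.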

Field Evidence: Restore Testing Changed the Outcome

We worked with a Northern Nevada healthcare office operating between Reno and Sparks that had nightly backups but no recent full recovery test. Before remediation, the team could confirm that backup jobs completed, yet they could not state how long it would take to restore the practice management server or whether attached scanning workflows would function afterward. During a connectivity and authentication issue, staff were forced into manual intake and delayed charge entry by most of a business day.

After implementing quarterly restore tests, dependency mapping, and executive review through IT consulting in Northern Nevada, the office had a documented recovery sequence and a verified restore window. When a later storage fault affected a production workload during a heavy clinic week, the team restored the affected system in a controlled manner and resumed normal operations without the confusion of the earlier incident. That kind of improvement matters in this region, where weather events, carrier issues, and older mixed-use buildings can add strain to infrastructure that is already stressed.

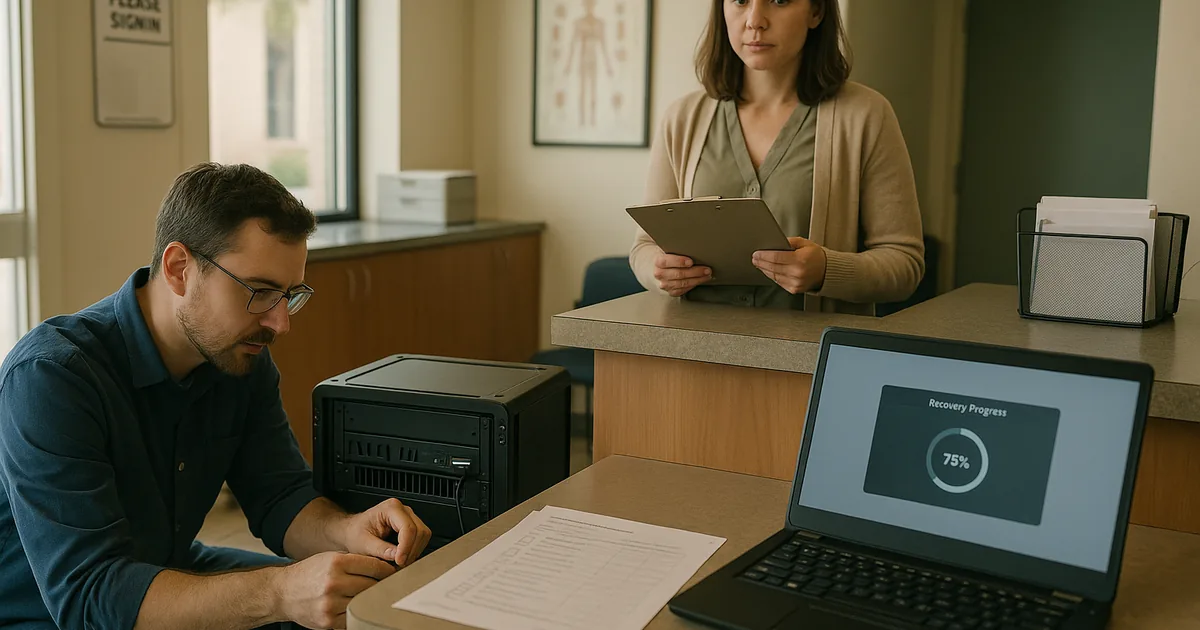

- Result: Verified recovery time dropped from an unknown duration to a tested 75-minute restore for the core application server, with same-day billing disruption reduced to under 1 hour.

Resilience Test Reference for Medical Practices

Scott Morris is an IT and cybersecurity professional with 16 years of hands-on experience in managed technology services. He specializes in backup and disaster recovery and has spent his career building practical recovery, security, and operational continuity processes for businesses across South Meadows, Reno, Sparks, Carson City, and Northern Nevada.

Local Support in South Meadows, Reno, Sparks, Carson City, and Northern Nevada

Medical practices in South Meadows often need fast, practical support when a lockout or failed restore interrupts patient flow. Our Reno office is positioned to support clinics across the Truckee Meadows, including locations near South Rock Boulevard, with a typical travel time of about 13 minutes to this corridor. That local proximity helps, but the larger value is having tested recovery procedures in place before an outage forces the issue.

The Real Issue Is Recovery Confidence, Not Just Backup Presence

For medical practices in South Meadows, a lockout usually exposes a larger resilience problem: the organization has copies of data but has not proven that systems can be restored in a way that supports real patient operations. That distinction matters because scheduling, chart access, billing, and intake all depend on more than a successful overnight backup job.

The practical takeaway is straightforward. Test restores on a schedule, verify application functionality, document recovery order, and assign accountability before an outage occurs. When those controls are in place, downtime becomes shorter, decisions become clearer, and the business is not forced to discover its weaknesses during a live event.