Reno Lockout

Seeing a lockout is often the visible symptom of growth outpacing IT capacity, not the root problem itself. In medical practices across Reno, issues like endpoint sprawl, underplanned infrastructure, and inconsistent standards can quietly undermine managed backup solutions until work stops or risk spikes. The fix usually starts with standardizing how new users, devices, and systems are brought online.

This case study reflects real breakdown patterns documented across 300+ regional IT incidents. Names and identifying details have been modified for confidentiality, while technical and financial data remain accurate to the original events.

Why Lockouts Happen When a Practice Hits the Scalability Ceiling

A lockout in a growing Reno medical practice is rarely just a password problem. More often, it is the moment when accumulated technical debt becomes visible. New providers are added, front-desk staff expands, more exam rooms get devices, and line-of-business applications multiply. If identity controls, endpoint standards, storage growth, and backup scope are not reviewed at the same pace, the practice eventually reaches a scalability ceiling where normal changes start causing access failures, sync conflicts, and unstable recovery points.

We see this often in healthcare offices that grew quickly along California Avenue, South Reno, and Sparks without revisiting the original design assumptions. A backup platform that worked well for 18 endpoints may become unreliable at 45 if retention, bandwidth, agent health, and user provisioning are inconsistent. That is why managed backup solutions in Reno need to be tied to growth planning, not treated as a static tool. In incidents like the one Anita faced, the visible lockout is usually the final symptom of several quieter failures already in motion.
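A back-of-the-envelope backup window estimate makes the 18-versus-45 endpoint comparison concrete. The sketch below is illustrative only. It assumes every endpoint sends a similar nightly incremental, that the office uplink is the only bottleneck, and that compression and deduplication are ignored. The per-endpoint change size, link speed, efficiency factor, and six-hour window are hypothetical placeholders, not figures from any practice.

```python
def backup_window_hours(endpoints: int, changed_gb_per_endpoint: float,
                        uplink_mbps: float, efficiency: float = 0.7) -> float:
    """Rough hours needed to transfer one nightly incremental for all endpoints.

    Assumes the uplink is the only bottleneck; `efficiency` is a hypothetical
    allowance for protocol overhead and competing traffic.
    """
    total_megabits = endpoints * changed_gb_per_endpoint * 8_000  # 1 GB = 8,000 megabits (decimal units)
    seconds = total_megabits / (uplink_mbps * efficiency)
    return seconds / 3600


def fits_window(hours_needed: float, window_hours: float) -> bool:
    """True if the estimated transfer finishes inside the allowed backup window."""
    return hours_needed <= window_hours


if __name__ == "__main__":
    WINDOW_HOURS = 6  # hypothetical overnight backup window
    for count in (18, 45):
        hours = backup_window_hours(count, changed_gb_per_endpoint=4, uplink_mbps=50)
        print(f"{count} endpoints: {hours:.1f} h, fits={fits_window(hours, WINDOW_HOURS)}")
```

With these assumed inputs, 18 endpoints finish in roughly 4.6 hours, while 45 endpoints need about 11.4 hours. A job that once fit overnight now runs into clinic hours. Real sizing should use measured change rates and throughput from the backup platform itself.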

- Endpoint sprawl: Laptops, exam-room workstations, personal mobile access, and replacement devices are added faster than they are documented, patched, and enrolled into backup and security policy.

- Underplanned infrastructure: Storage quotas, authentication dependencies, and network capacity are left at small-office settings even after the practice adds providers, imaging workflows, or remote access needs.

- Inconsistent onboarding: New users receive access in different ways, with different permissions and device states, which creates gaps in backup coverage and raises the chance of account or profile conflicts.

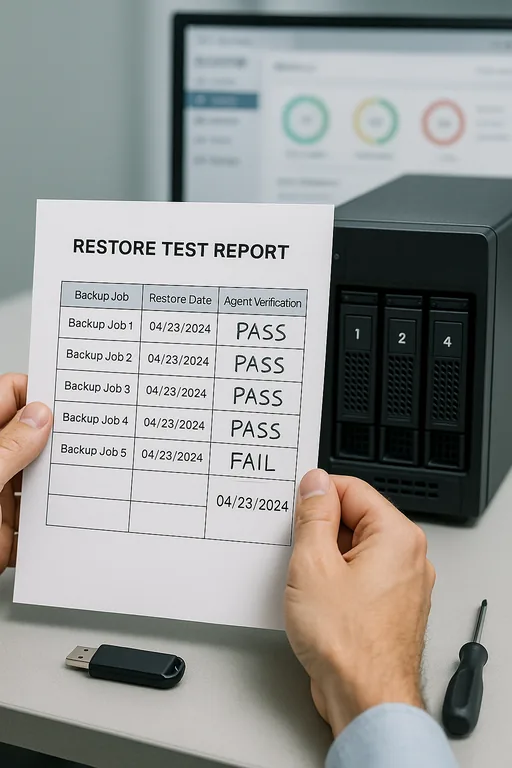

- Operational blind spots: Teams assume backups are healthy because jobs exist, but they are not validating restore readiness, failed agents, or whether newly added systems are actually protected.
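The assumption that backups are healthy because jobs exist can be checked mechanically. This minimal sketch assumes the backup console can export each endpoint's last successful backup time. The export format, the hostnames, and the 26-hour threshold (a nightly job plus some slack) are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Optional


def unprotected_endpoints(
    last_success: Dict[str, Optional[datetime]],
    now: datetime,
    max_age: timedelta = timedelta(hours=26),  # hypothetical threshold for nightly jobs
) -> List[str]:
    """Return endpoints with no successful backup, or none within max_age.

    `last_success` maps hostname -> time of the last *successful* job
    (None if the agent has never completed one). A scheduled job that keeps
    failing still gets flagged: a job existing is not the same as protection.
    """
    flagged = []
    for hostname, finished_at in sorted(last_success.items()):
        if finished_at is None or now - finished_at > max_age:
            flagged.append(hostname)
    return flagged


if __name__ == "__main__":
    now = datetime(2024, 1, 10, 8, 0, tzinfo=timezone.utc)
    sample = {  # hypothetical export from a backup console
        "FRONTDESK-01": now - timedelta(hours=10),
        "EXAM-03": now - timedelta(days=3),
        "BILLING-02": None,
    }
    print(unprotected_endpoints(sample, now))  # ['BILLING-02', 'EXAM-03']
```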

How to Stabilize Backup, Access, and Growth Before the Next Hiring Wave

The practical fix is to standardize growth. That means every new employee, workstation, laptop, scanner-connected PC, and cloud application follows the same intake process. We typically start by inventorying all endpoints, mapping which systems hold protected health information, confirming which devices are in backup scope, and identifying where authentication or storage dependencies can create a single point of failure. From there, the environment is rebuilt around repeatable standards instead of exceptions.
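At its core, the step of confirming which devices are in backup scope compares two lists. This sketch works under stated assumptions. The asset inventory is available as hostnames with a flag for whether the device holds protected health information, and the backup platform can export its enrolled hostnames. `Endpoint` and `coverage_gaps` are hypothetical names, not part of any specific product.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class Endpoint:
    hostname: str
    holds_phi: bool  # hypothetical inventory flag: device stores or caches PHI


def coverage_gaps(inventory: Iterable[Endpoint], enrolled: Iterable[str]) -> Dict[str, object]:
    """Reconcile the asset inventory with the backup platform's enrolled list.

    Hostnames are compared case-insensitively because exports often differ in case.
    """
    inventory = list(inventory)
    enrolled = list(enrolled)
    inv_names = {e.hostname.lower() for e in inventory}
    enrolled_names = {h.lower() for h in enrolled}

    # Documented devices that the backup platform does not know about.
    missing = sorted(e.hostname for e in inventory if e.hostname.lower() not in enrolled_names)
    # The subset of those gaps that matters most for a medical practice.
    missing_phi = sorted(e.hostname for e in inventory
                         if e.holds_phi and e.hostname.lower() not in enrolled_names)
    # Enrolled but undocumented: likely added outside the standard intake process.
    unknown = sorted(h for h in enrolled if h.lower() not in inv_names)

    covered = len(inv_names & enrolled_names)
    pct = 100.0 * covered / len(inv_names) if inv_names else 100.0
    return {"missing": missing, "missing_phi": missing_phi,
            "unknown": unknown, "coverage_pct": round(pct, 1)}
```

Devices in `unknown` are often the clearest sign of endpoint sprawl. They are protected but undocumented, so they probably came online outside the standard process.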

For medical practices, this usually includes policy-based enrollment, role-based access, backup validation, and tighter device governance through endpoint management for growing Reno practices. It also helps to align controls with practical guidance from CISA, especially around recovery readiness, account protection, and tested restoration procedures. If the practice is adding providers or opening another location, the backup design should be reviewed before the next 10 hires, not after the first outage.

- Standardized onboarding: Every new user and device is provisioned from a checklist that includes MFA, backup enrollment, naming standards, patch status, and access review.

- Backup validation: Run scheduled restore tests, verify agent health, and confirm that retention policies match actual clinical and billing recovery needs.

- Identity hardening: Reduce lockout risk with role-based groups, conditional access, and removal of stale accounts tied to former staff or retired devices.

- Capacity planning: Review storage growth, bandwidth usage, and backup windows before expansion pushes jobs outside acceptable recovery objectives.

- Alerting improvements: Escalate failed jobs, offline endpoints, and authentication anomalies early so the practice can correct drift before operations stop.
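The onboarding checklist and the stale-account cleanup can both be written as simple gates. The first runs before a new hire starts, and the second runs on a recurring schedule. This is a hypothetical sketch. The step names, the `EXAM-07` style naming pattern, and the 90-day idle threshold are assumptions to adapt. In practice, the inputs would come from identity provider and endpoint management exports.

```python
import re
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import Iterable, List, Optional, Set

# Hypothetical checklist items mirroring the onboarding standard above.
REQUIRED_STEPS = ("mfa", "backup_enrollment", "patch_status", "access_review")

# Hypothetical naming convention: role prefix plus two-digit number, e.g. EXAM-07.
NAME_PATTERN = re.compile(r"(EXAM|FRONT|BILL|ADMIN)-\d{2}")


@dataclass
class OnboardingRecord:
    username: str
    hostname: str
    completed: Set[str] = field(default_factory=set)  # steps confirmed done


def missing_steps(record: OnboardingRecord) -> List[str]:
    """List checklist items that still block go-live for this user and device."""
    gaps = [step for step in REQUIRED_STEPS if step not in record.completed]
    if not NAME_PATTERN.fullmatch(record.hostname):
        gaps.append("naming_standard")
    return gaps


@dataclass
class Account:
    username: str
    enabled: bool
    last_sign_in: Optional[date]  # None if the account never signed in


def stale_accounts(accounts: Iterable[Account], today: date,
                   max_idle: timedelta = timedelta(days=90)) -> List[str]:
    """Enabled accounts idle longer than max_idle (or never used): review candidates."""
    return sorted(
        a.username
        for a in accounts
        if a.enabled and (a.last_sign_in is None or today - a.last_sign_in > max_idle)
    )
```

Flagged accounts are best treated as candidates for review, not automatic deletion. Some shared or service accounts sign in rarely by design.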

Field Evidence: Growth Control Restored Before the Next Expansion Phase

In one Northern Nevada healthcare environment, the before-state looked familiar: multiple generations of workstations, uneven backup agent deployment, and new hires being added by whoever was available rather than through a controlled process. The office had recurring morning slowdowns, occasional profile issues, and no confidence that every billing and scheduling endpoint could be restored cleanly. The location also dealt with the normal Reno challenge of coordinating cloud access, local line-of-business software, and variable connectivity across older office construction.

After standardizing device enrollment, cleaning up identity groups, validating restore points, and adding clearer escalation workflows, the practice moved into a more stable operating state. The same framework also became the basis for IT systems supporting multi-location operations, so leadership could prepare for additional staff and service growth without repeating the same lockout pattern.

- Result: Backup coverage verification increased to 100 percent of in-scope endpoints, failed-job noise dropped substantially, and user onboarding time was reduced from several hours of ad hoc setup to a repeatable same-day process.

Backup Scalability Risk Reference for Medical Practices

Scott Morris is an IT and cybersecurity professional with 16 years of hands-on experience in managed technology services. He specializes in managed backup solutions and has spent his career building practical recovery, security, and operational continuity processes for businesses across Reno, Sparks, Carson City, Lake Tahoe, and Northern Nevada.

Local Support in Reno

From our office on Ryland Street, we regularly support medical and professional offices across Reno where growth creates pressure on access control, backup coverage, and day-to-day reliability. The California Avenue corridor is a short drive from our location, which makes it practical to assess how staffing growth, device additions, and infrastructure limits are affecting operations before a lockout becomes a larger interruption.

The Operational Takeaway

When a medical practice gets locked out during a growth phase, the real issue is usually not a single failed login or isolated backup alert. It is the accumulated effect of adding people, devices, and systems faster than the environment was designed to support. In Reno practices, that often shows up first as unstable access, incomplete backup coverage, or recovery uncertainty.

The practical response is to treat growth as an IT event. Standardize onboarding, validate backups against actual restore needs, and review infrastructure before expansion pushes the environment past its safe operating limit. That approach reduces downtime, protects billing continuity, and gives leadership a clearer path to scale without repeated disruption.