Reno Secure Plant Ops

Encrypted files rarely appear without warning. For manufacturing plants in Northern Nevada, the problem usually builds through slow devices, ticket backlogs, and repeated workarounds, then surfaces as encrypted files, slower recovery, or higher exposure. A more reliable setup starts with stabilizing daily support, reducing repeat issues, and standardizing how IT is handled.

This case study reflects real breakdown patterns documented across 300+ regional IT incidents. Names and identifying details have been modified for confidentiality, while technical and financial data remain accurate to the original events.

Why Encrypted Files Often Start as an Operational Drain

When a manufacturing plant in Northern Nevada ends up with encrypted files, the immediate assumption is usually that the event began with one malicious email or one exposed machine. In practice, we usually find a longer chain of operational drag behind it. Slow workstations, unresolved support tickets, aging line-of-business systems, and repeated user workarounds create the conditions where security controls become inconsistent and response times stretch out. That is the operational drain gap: small daily friction that quietly weakens recovery readiness until a larger event exposes it.

In Reno, Sparks, Carson City, and out toward industrial corridors serving regional distribution and fabrication, plants often run mixed environments with office systems, production endpoints, shared folders, and vendor remote access all tied together. If patching slips, local admin rights remain too broad, and backup checks are treated as a monthly checkbox, encrypted files become much more likely to spread before anyone notices. Businesses trying to reduce that exposure usually benefit from structured risk assessments and security readiness in Northern Nevada that identify where daily support issues are creating larger security gaps. The same pattern we saw in Melody’s incident applies in manufacturing: the visible encryption event is often the final symptom, not the first failure.

- Ticket backlog: Repeated unresolved issues such as slow logins, failed updates, and unstable shared drives train staff to work around problems instead of reporting them early.

- Flat network design: When office devices, production support systems, and file shares are not properly segmented, one compromised endpoint can reach far more data than it should.

- Weak restore discipline: Backups may exist, but if no one validates file-level recovery, plants discover gaps only after encryption has already interrupted operations.

- Tool sprawl: Multiple unmanaged remote tools, legacy antivirus, and inconsistent alerting create blind spots that delay containment.
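As a rough illustration of the flat-network point above, the sketch below checks whether each device address falls inside a documented segment. The segment names and subnets are hypothetical placeholders, not values from any real plant; an actual audit would load them from the site's own network documentation.

```python
import ipaddress

# Hypothetical segment plan (assumed for illustration only).
SEGMENTS = {
    "office": ipaddress.ip_network("10.10.0.0/24"),
    "production_support": ipaddress.ip_network("10.20.0.0/24"),
    "backup": ipaddress.ip_network("10.30.0.0/24"),
}


def segment_of(address):
    """Return the name of the segment containing the address, or None."""
    ip = ipaddress.ip_address(address)
    for name, network in SEGMENTS.items():
        if ip in network:
            return name
    return None


def unsegmented_hosts(addresses):
    """List addresses that do not belong to any documented segment."""
    return sorted(a for a in addresses if segment_of(a) is None)


if __name__ == "__main__":
    inventory = ["10.10.0.4", "10.20.0.15", "192.168.1.5"]
    for host in inventory:
        print(host, "->", segment_of(host))
    print("Outside the segment plan:", unsegmented_hosts(inventory))
```

Devices that land outside every documented segment are a reasonable first place to look when deciding where segmentation work should begin. This only checks addressing; it says nothing about whether firewall rules actually block traffic between segments.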

Practical Remediation for Manufacturing Environments

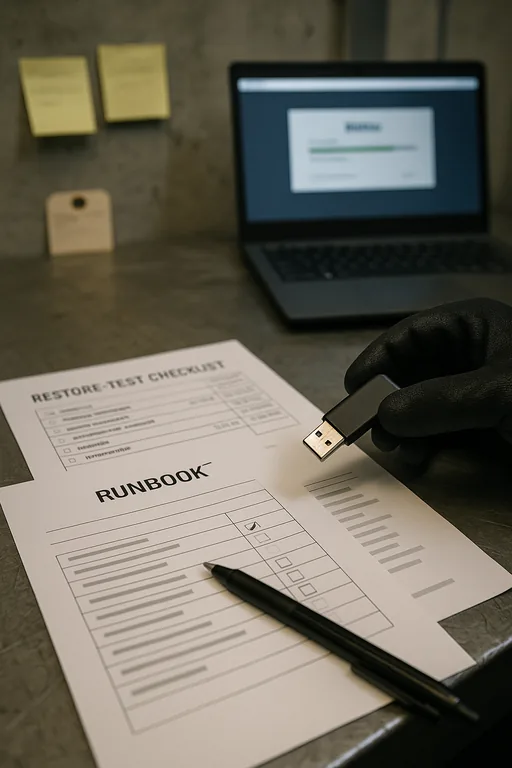

The fix is not a single product. It is an operational reset. We start by isolating affected systems, preserving logs, confirming the scope of encryption, and identifying whether the event touched only file shares or also impacted local endpoints, mapped drives, and backup repositories. From there, the priority is restoring business function in the right order: production support systems, scheduling, shared documentation, finance, and then lower-priority data. Plants that need faster recovery and less uncertainty typically require tested backup and disaster recovery planning rather than assuming backups alone are enough.

Once immediate recovery is underway, the environment needs hardening. That usually means MFA enforcement for remote access, EDR with centralized alerting, removal of unnecessary admin rights, segmentation between plant-floor support systems and office networks, and documented restore testing. For a practical baseline on ransomware resilience and recovery planning, CISA’s ransomware guide is useful because it aligns technical controls with operational response steps.

- Containment first: Disable affected accounts, isolate infected endpoints, and block lateral movement before beginning broad recovery work.

- Restore validation: Use tested recovery points and confirm file integrity before reconnecting restored shares to production users.

- Segmentation: Separate production support devices, office endpoints, and backup infrastructure with VLANs and access controls.

- Alerting improvements: Route endpoint, backup, and authentication alerts into one monitored workflow so repeated low-grade issues are not ignored.
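The restore validation step above can be sketched as a checksum comparison. This is a minimal example, assuming a manifest of SHA-256 hashes was captured from a known-good copy of the share before the incident; the function names `build_manifest` and `validate_restore` are illustrative and not part of any backup product.

```python
import hashlib
from pathlib import Path


def sha256_of(path, chunk_size=1024 * 1024):
    """Hash a file in chunks so large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root):
    """Map each file's path (relative to root) to its SHA-256 hash."""
    root = Path(root)
    return {
        p.relative_to(root).as_posix(): sha256_of(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def validate_restore(manifest, restored_root):
    """Compare a restored folder against a known-good manifest."""
    restored = build_manifest(restored_root)
    missing = sorted(set(manifest) - set(restored))
    changed = sorted(
        name
        for name in manifest
        if name in restored and restored[name] != manifest[name]
    )
    return {"missing": missing, "changed": changed}
```

A restored share would only be reconnected to production users when both lists come back empty. Files that legitimately changed after the manifest was captured will also appear under `changed`, so the manifest should be taken as close to the recovery point as practical.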

Field Evidence: From Repeated Friction to Controlled Recovery

We have seen this pattern in Northern Nevada industrial settings where a plant near the I-80 corridor was dealing with recurring workstation slowdowns, intermittent file lock errors, and a growing list of “temporary” exceptions for vendor access. Before remediation, staff were losing time every week to reconnect drives, reopen corrupted spreadsheets, and manually recreate production support documents. After standardizing endpoint controls, tightening remote access, and moving the company to managed backup solutions for business continuity, the environment became much easier to operate and recover.

- Result: After remediation and restore testing, file recovery time dropped from most of a workday to under 90 minutes, repeat support tickets fell by 38 percent over the next quarter, and plant leadership gained a documented recovery sequence instead of ad hoc decisions during downtime.

Operational Controls That Reduce Encryption Risk

Scott Morris is an IT and cybersecurity professional with 16 years of hands-on experience in managed technology services. He specializes in risk assessments and security readiness and has spent his career building practical recovery, security, and operational continuity processes for businesses across Northern Nevada.

Local Support in Northern Nevada

Manufacturing and operational support issues in this region often involve more than one site, mixed internet carriers, older buildings, and the practical delay of moving people between Reno, Sparks, and South Meadows. That is why response planning has to account for both remote containment and local access. From our Reno office, the route to Renown South Meadows Medical Center is typically about 15 minutes, which is close enough for on-site coordination when needed but still reinforces why stable remote support, documented recovery steps, and tested backups matter before an outage starts.

Stabilize Daily IT Before It Becomes a Recovery Event

Encrypted files in a manufacturing environment are rarely just a security story. They are usually the result of unresolved operational friction that has been tolerated for too long. Slow endpoints, recurring support issues, weak backup validation, and inconsistent access controls all increase the chance that a routine problem turns into downtime, delayed production support, and expensive recovery work.

The practical takeaway is straightforward: reduce the daily drain, standardize support, and test recovery before an incident forces the issue. In Northern Nevada, where many businesses operate with lean teams and mixed systems, disciplined IT operations are what keep a file event from becoming a plant-wide interruption.