Technology Advisory & Assessment

Technology advisory and assessment gives leadership a structured view of systems, risks, dependencies, and weak controls so IT decisions are based on evidence, not assumptions. Done well, it improves planning, reduces avoidable downtime, and exposes hidden operational fragility.

After adding a second office, Alan T. kept an inherited firewall rule and a shared admin account because no formal technology assessment had been done. A spoofed vendor payment request slipped through the exposed workflow during month-end, approvals stalled for two days, and the direct loss and cleanup cost reached $57,500.

This story is based on a real business technology support pattern: the kind of operational breakdown that often becomes visible only after systems, staff, vendors, and workflows are already under strain. Names, dates, timelines, and identifying details have been anonymized or modified for confidentiality, while preserving the practical lessons behind the scenario.

Scott Morris is a managed IT and cybersecurity professional with 16+ years of experience helping businesses manage infrastructure, reduce security exposure, maintain stable operations, and prepare for recovery when incidents occur. That background is directly relevant to Technology Advisory & Assessment because reliable reviews come from seeing how real environments fail: undocumented systems, access sprawl, weak patch discipline, incomplete monitoring, and recovery plans that look acceptable until an outage proves otherwise. His work with Reno and Sparks business technology environments is grounded in practical risk reduction, business continuity, secure infrastructure management, recovery readiness, and operational resilience.

This article explains common assessment methods, evidence, and failure patterns that business leaders can use when reviewing internal teams or outside providers. This is general technical information; the right strategy depends on the specific network environment and the compliance obligations involved.

Technology advisory and assessment is not just an audit, a hardware quote, or a one-time security scan. It is a structured review of how the business uses technology, where operational dependencies exist, which risks are tolerated by accident, and what changes are actually justified by uptime, security, continuity, and cost. Effective work in this area usually aligns with broader IT consulting and vCIO planning because cloud changes, vendor transitions, and system upgrades tend to fail when decisions are made without an accurate view of the current environment.

The operational baseline should include asset inventory, supported software versions, identity stores, privileged accounts, internet circuits, wireless and VPN configuration, email security posture, endpoint protection, patching, vendor dependencies, and documentation ownership. That is why many leadership teams fold assessment work into strategic IT leadership rather than treating it as a one-off project; regulated offices, including organizations reviewing HIPAA obligations, usually need evidence that safeguards are not only selected but maintained.

A common failure point is assuming a tool equals a control. In practice, the issue is rarely the tool alone; it is the process around it. A firewall with temporary rules nobody removed, multifactor authentication enabled for employees but not for privileged service accounts, or endpoint security deployed without alert ownership can leave an environment looking managed on paper while remaining fragile in day-to-day operations.
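The gap between a tool and a control can be checked with very little machinery. The following is a minimal sketch, assuming an account inventory has already been exported from the identity system; the `Account` fields, the account names, and the `control_gaps` helper are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Account:
    name: str
    privileged: bool
    mfa_enabled: bool
    owner: Optional[str]  # None means nobody is documented as responsible


def control_gaps(accounts):
    """Return (account name, reason) pairs for privileged accounts
    that lack MFA or a documented owner."""
    gaps = []
    for acct in accounts:
        if acct.privileged and not acct.mfa_enabled:
            gaps.append((acct.name, "privileged without MFA"))
        if acct.privileged and acct.owner is None:
            gaps.append((acct.name, "privileged with no documented owner"))
    return gaps


# Hypothetical sample data, not taken from any real environment.
accounts = [
    Account("jsmith", privileged=False, mfa_enabled=True, owner="jsmith"),
    Account("svc-backup", privileged=True, mfa_enabled=False, owner=None),
    Account("admin-shared", privileged=True, mfa_enabled=True, owner=None),
]

for name, reason in control_gaps(accounts):
    print(f"{name}: {reason}")
```

The point of the sketch is that MFA being "enabled" says nothing until it is compared against the list of accounts that actually hold privilege, and against who is accountable for each one.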

What does Technology Advisory & Assessment actually cover?

It covers the business use of technology as much as the technology itself. A competent assessment reviews infrastructure, cloud services, user identities, administrative access, vendor relationships, lifecycle status, backup and recovery readiness, support processes, and documentation quality. It should also connect those findings to business outcomes such as downtime tolerance, revenue interruption, data exposure, and the practical impact of losing a key system during payroll, month-end, or client delivery. What usually separates a stable environment from a fragile one is not a longer tool list; it is whether leadership understands dependencies, ownership, and acceptable risk well enough to make decisions before an incident forces the issue.

Why does it matter before a failure instead of after one?

Most disruptions are not caused by one dramatic event. They grow from ordinary changes that were never fully reviewed: a new SaaS platform, a remote access exception, an inherited vendor account, or a server that stayed in production because replacing it kept getting deferred. Advisory work matters before failure because it exposes these weak points while the business still controls the timing and cost of remediation. For Nevada organizations handling personal information, Chapter 603A of the Nevada Revised Statutes (NRS 603A) requires reasonable security measures. In practice, that means leadership should be able to explain who can access sensitive data, how systems are maintained, and how incidents are handled. Without that visibility, a manageable issue can become operational downtime, breach response expense, and legal exposure at the same time.

Which risks does a competent assessment usually uncover?

- Unsupported systems: Aging servers, line-of-business applications tied to old operating systems, or neglected network appliances create both outage risk and security exposure because replacement planning was postponed.

- Identity sprawl: Former staff accounts, shared administrator credentials, and old vendor logins often remain active long after their original purpose ends, increasing fraud and unauthorized access risk.

- Single points of failure: One internet circuit, one firewall, one domain controller, or one employee who knows the recovery sequence can stop operations when that dependency breaks.

- Weak documentation: During incident response, it is common to discover that critical IP ranges, licensing portals, warranty contacts, or recovery instructions exist only in someone’s inbox or memory.

- Mismatched recovery expectations: Leadership may believe systems can be restored in hours when the actual recovery sequence, bandwidth, and application dependencies would take days.
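The last item in the list above, mismatched recovery expectations, can be made concrete with rough arithmetic. This is a minimal sketch under stated assumptions: the data volume, link speed, 70% link efficiency, and the rebuild and validation hours are all hypothetical placeholders, and a real estimate would need measured values from a test restore.

```python
def transfer_hours(data_gb: float, link_mbps: float, efficiency: float = 0.7) -> float:
    """Hours needed to move data_gb over a link_mbps connection.

    efficiency is the assumed usable fraction of the nominal link speed.
    """
    megabits = data_gb * 8 * 1000  # decimal units: 1 GB = 8000 megabits
    seconds = megabits / (link_mbps * efficiency)
    return seconds / 3600


def estimated_recovery_hours(data_gb, link_mbps, rebuild_hours, validation_hours):
    """Data transfer time plus server rebuild and application validation time."""
    return transfer_hours(data_gb, link_mbps) + rebuild_hours + validation_hours


# Hypothetical inputs: 2 TB of backup data restored over a 100 Mbps circuit.
estimate = estimated_recovery_hours(
    data_gb=2000, link_mbps=100, rebuild_hours=6, validation_hours=4
)
expected_by_leadership = 8  # hypothetical "back by end of day" assumption

print(f"Estimated recovery: {estimate:.1f} hours")
print(f"Gap versus expectation: {estimate - expected_by_leadership:.1f} hours")
```

With these illustrative inputs the transfer alone takes more than 60 hours, which is the kind of gap between "hours" and "days" that an assessment is meant to surface before an outage does.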

How does the assessment process work in practice?

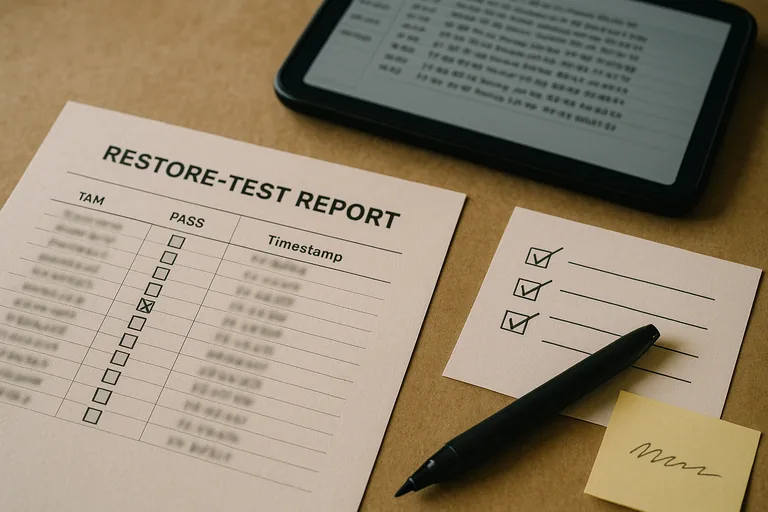

A competent process starts with business interviews, then moves into technical discovery and validation. Experienced IT teams review inventories, map business-critical applications, check administrative group membership, sample firewall and remote access rules, inspect patch compliance, confirm endpoint protection policy coverage, and compare backup reports against actual recovery requirements. One of the first checks is whether documented ownership matches reality. During a routine review, repeated 2:13 a.m. authentication failures on a print server looked minor until log correlation showed a retired service account embedded in an accounts payable scan workflow. The signal was failed logons; the underlying issue was an undocumented dependency and no access lifecycle review.

How can a business tell whether the assessment is thorough?

Real evidence from a mature assessment includes an asset inventory, access review records, configuration exports, patch compliance reports, exception lists, and written remediation priorities tied to business impact.
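One practical sign of thoroughness is whether routine signals are correlated against inventory records, as in the print server example described earlier. The following is a minimal sketch, assuming authentication events and an account inventory are available as simple records; the event tuples, the `inventory` mapping, and the `recurring_failures` helper are hypothetical, and real log formats will differ.

```python
from collections import Counter

# Hypothetical, simplified log records: (timestamp, host, account, outcome)
events = [
    ("2024-03-01T02:13:00", "print01", "svc-scan", "failure"),
    ("2024-03-02T02:13:00", "print01", "svc-scan", "failure"),
    ("2024-03-03T02:13:00", "print01", "svc-scan", "failure"),
    ("2024-03-03T09:02:00", "ws-014", "jsmith", "failure"),
]

# Hypothetical account inventory: account name -> lifecycle status
inventory = {"svc-scan": "retired", "jsmith": "active"}


def recurring_failures(events, inventory, min_count=3):
    """Flag host/account pairs with repeated failed logons where the
    account is not recorded as active in the inventory."""
    counts = Counter(
        (host, account)
        for _, host, account, outcome in events
        if outcome == "failure"
    )
    findings = []
    for (host, account), n in sorted(counts.items()):
        status = inventory.get(account, "unknown")
        if n >= min_count and status != "active":
            findings.append(
                {"host": host, "account": account, "failures": n, "status": status}
            )
    return findings


for finding in recurring_failures(events, inventory):
    print(finding)
```

A single mistyped password by an active user is filtered out, while a retired or unknown account that keeps failing on the same host is surfaced as a likely undocumented dependency.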

When does weak implementation become dangerous?

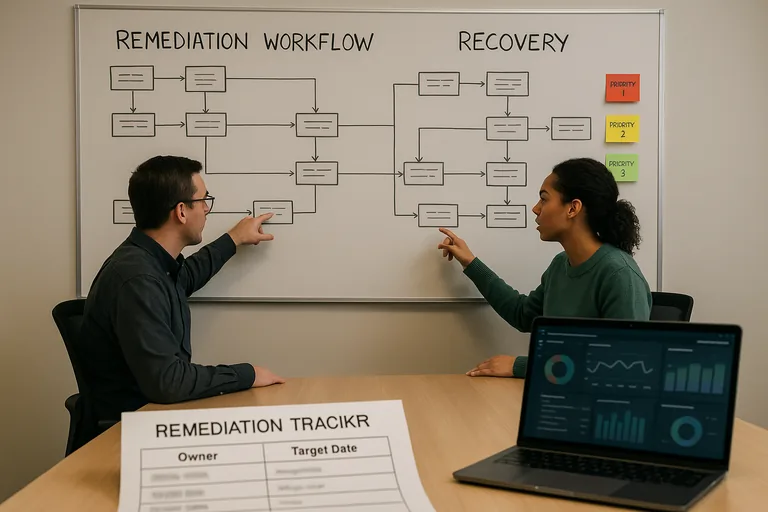

Assessment work tends to break down when it becomes a checkbox exercise instead of an operational discipline. A common failure point is a security stack that exists but is not fully enforced: alerts fire without a documented escalation path, offboarding requests are handled informally without access review logs, or remote access policies differ between sites because old exceptions were never reconciled. In weak environments, controls often exist on paper but are never actively verified. That is where hidden fragility becomes dangerous. If nobody reviews alert queues, confirms policy enforcement, audits privileged access, or checks whether exceptions are still justified, the environment drifts until a routine incident exposes the gap. Mature teams make that drift visible through a recurring review cadence, change records, alert investigation timelines, and documented exception handling.
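Documented exception handling can start as a simple register that is checked on a schedule. This is a minimal sketch, assuming each exception is recorded with an owner and a review date; the register entries, field names, and the `stale_exceptions` helper are hypothetical and only illustrate the idea.

```python
from datetime import date

# Hypothetical exception register; entries are illustrative only.
exceptions = [
    {"id": "FW-101", "site": "Office A", "description": "Temporary vendor remote access rule",
     "owner": "ops", "review_by": date(2023, 6, 30)},
    {"id": "VPN-007", "site": "Office B", "description": "Split-tunnel exception",
     "owner": None, "review_by": date(2025, 12, 31)},
    {"id": "FW-115", "site": "Office A", "description": "Printer VLAN allow rule",
     "owner": "network", "review_by": date(2025, 12, 31)},
]


def stale_exceptions(register, today):
    """Return (exception id, reasons) for entries that are overdue for
    review or have no documented owner."""
    flagged = []
    for item in register:
        reasons = []
        if item["review_by"] < today:
            reasons.append("review overdue")
        if not item["owner"]:
            reasons.append("no owner")
        if reasons:
            flagged.append((item["id"], reasons))
    return flagged


# A fixed date keeps the example reproducible; a real check would use date.today().
for exc_id, reasons in stale_exceptions(exceptions, today=date(2025, 1, 15)):
    print(exc_id, ", ".join(reasons))
```

Running a check like this on a fixed cadence is one way to turn "old exceptions were never reconciled" into a short, reviewable list with named owners.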